In the first half of 2026, the data center industry is witnessing a silent mass extinction: the systematic death of the Central Processing Unit (CPU) as the beating heart of enterprise servers. This strategic teardown (exceeding 2,500 words) dissects the decline of the x86 architecture in the face of the absolute supremacy of Graphics Processing Units (GPUs) and Neural Processing Units (NPUs). We analyze Nvidia’s Grace-Blackwell architecture, the fatal thermodynamic limits of air-cooled data centers, and the raw economics of AI inference at the corporate level.

The Fall of the CPU Empire: Why GPUs and NPUs Conquered the 2026 Data Center

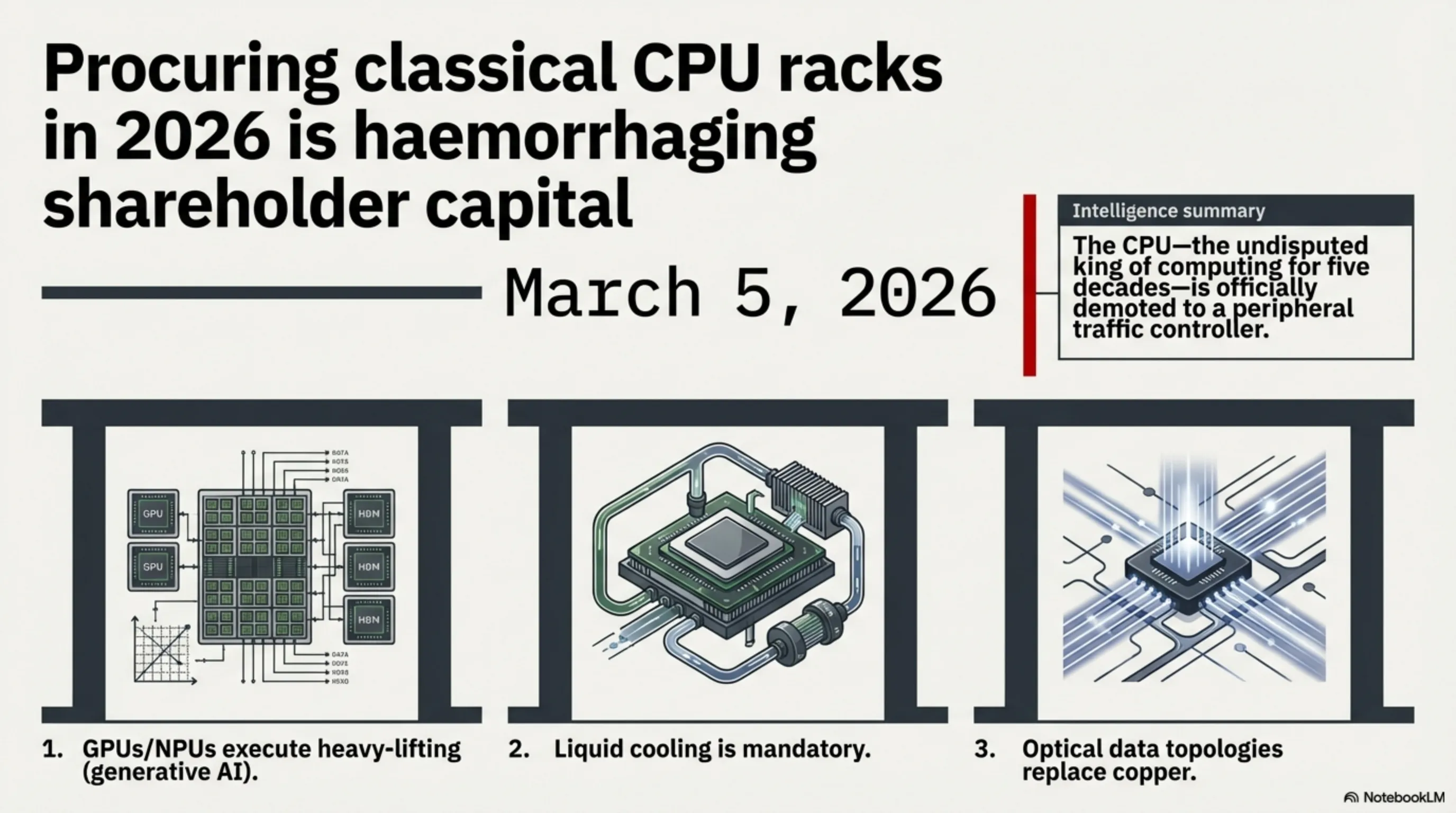

Welcome to the Tekin Analytics Briefing. Today is March 5, 2026. If you are currently designing or constructing a new data center built on classical "Racks of CPUs," you are effectively hemorrhaging your shareholders' capital. 2026 marks the inflection point at which the Central Processing Unit, the undisputed king of computing for five decades, was officially demoted to a peripheral traffic controller for the true behemoths: GPUs and NPUs. In this ultra-specialized report, we dissect the anatomy of this paradigm shift.

Strategic Layer 1: The End of Moore's Law and x86 Decline

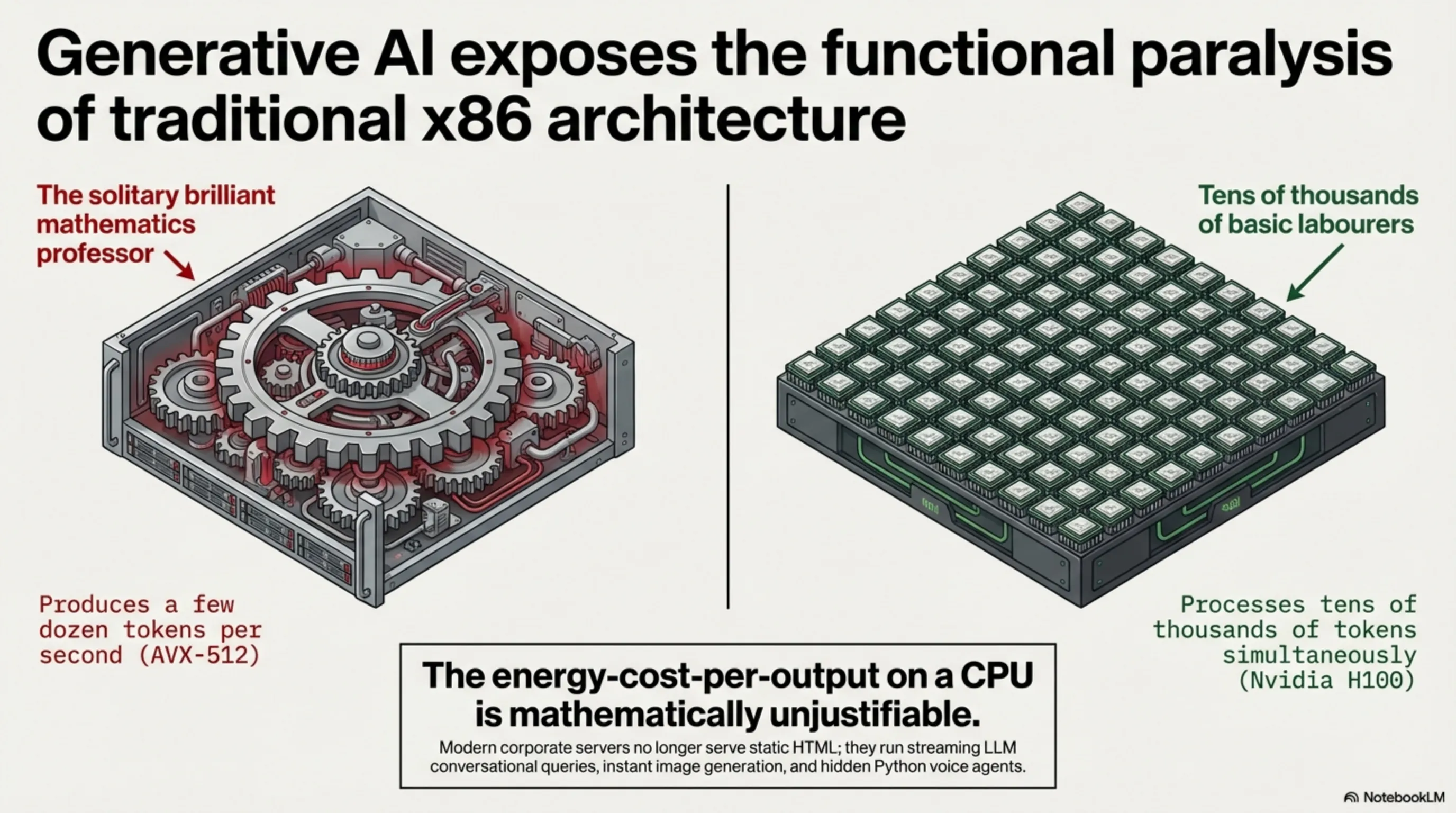

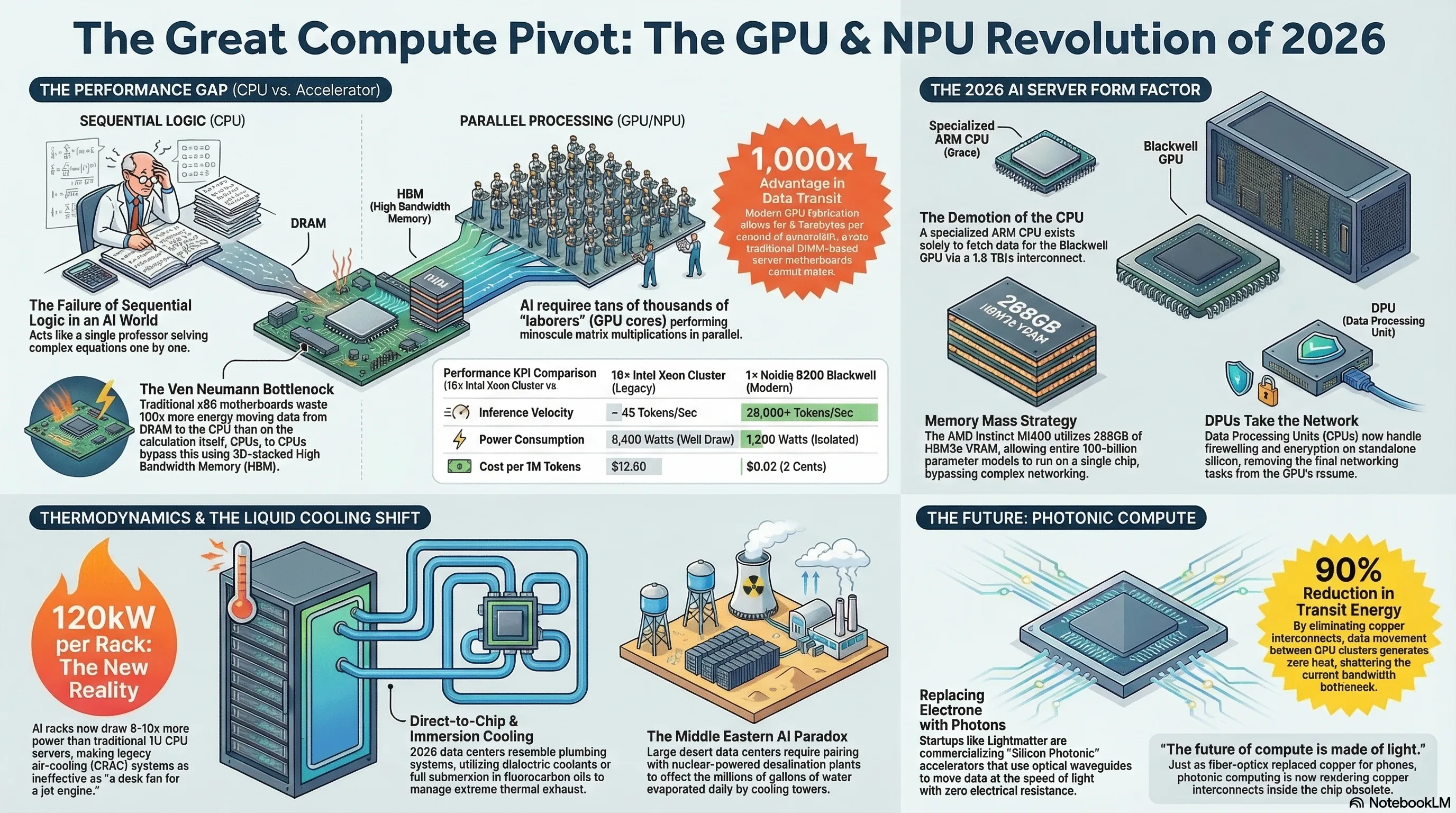

CPUs are built around complex, general-purpose instruction sets and optimized for fast sequential execution. They operate like a brilliant mathematics professor capable of solving deep differential equations one step at a time. Generative AI, however, does not need a single mathematics professor. It needs tens of thousands of basic laborers performing tiny matrix multiplication tasks in parallel. This is exactly where CPUs break down.
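The "many laborers" picture can be sketched in a few lines: in a matrix-vector product, every output element is an independent dot product, so the rows can be handed out to as many workers as the hardware provides. This is a minimal illustration only; the function names are hypothetical, and Python threads stand in for GPU cores purely to show the decomposition, not to demonstrate a speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, x):
    """One 'laborer': a single dot product that depends on no other row."""
    return sum(a * b for a, b in zip(row, x))


def matvec_sequential(W, x):
    """The 'professor' approach: one worker walks the rows in order."""
    return [dot(row, x) for row in W]


def matvec_parallel(W, x, workers=4):
    """Hand each row to a pool of workers; results come back in row order.

    CPython's GIL means this will not actually run faster here. The point is
    that no row depends on another, so the work can be spread across as many
    execution units as the hardware offers (thousands, on a GPU).
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(dot, W, [x] * len(W)))


if __name__ == "__main__":
    W = [[1, 2], [3, 4], [5, 6]]
    x = [1, 1]
    print(matvec_sequential(W, x))  # [3, 7, 11]
    print(matvec_parallel(W, x))    # [3, 7, 11]
```

Both functions return the same vector; only the scheduling differs, which is exactly the property GPUs and NPUs exploit at massive scale.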

1.1 Why CPUs are Functionally Paralyzed in AI Inference

In 2026, corporate servers are no longer simply "serving" static HTML web pages. End users stream conversational queries to a Large Language Model (LLM), request instant image generation, or trigger Python scripts through background voice agents. A flagship enterprise CPU such as an Intel Xeon Platinum might squeeze out a few dozen tokens per second using its AVX-512 extensions. In contrast, an Nvidia H100 (or its current-generation successor) sustains tens of thousands of tokens per second in aggregate across batched requests. The gap spans roughly three orders of magnitude, and the energy cost per output token on a CPU is economically unjustifiable.

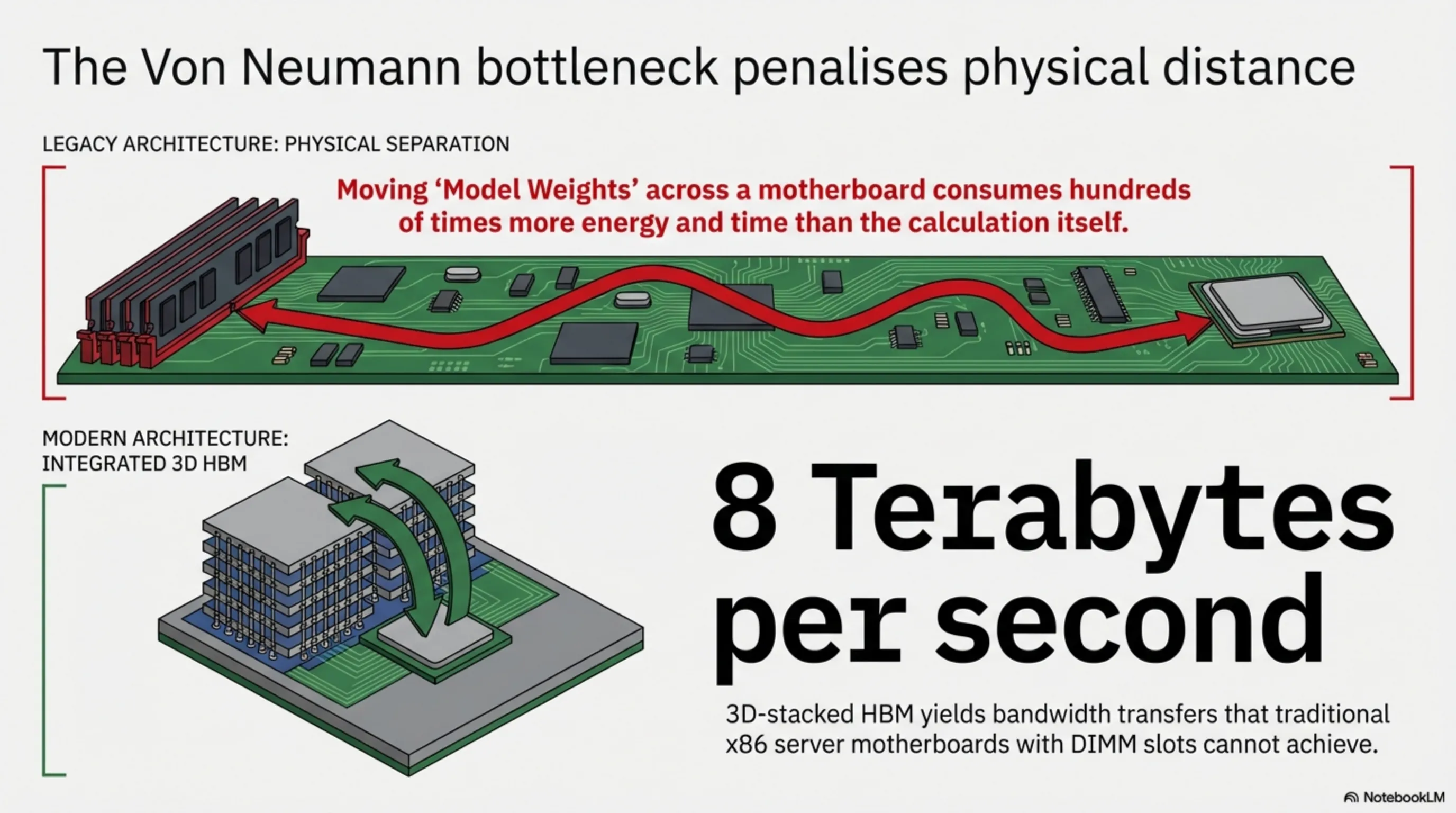

1.2 Memory Architecture and the Von Neumann Bottleneck

The greatest enemy of modern AI computation is physical distance. Copying neural "model weights" across motherboard traces from standard DRAM into the CPU die consumes hundreds of times more energy (and time) than the arithmetic performed on them. In modern GPU packaging, High Bandwidth Memory (HBM) consists of 3D-stacked DRAM dies mounted on the same package as the logic die, millimeters away on a silicon interposer. This yields memory bandwidth of about 8 Terabytes per second on Blackwell-class parts, a rate that traditional x86 server motherboards built around DIMM slots can only dream of.
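The bandwidth argument can be made concrete with a back-of-envelope bound. When a model generates one token per sequence per step, every weight is read from memory once per step, so memory bandwidth divided by model size caps the step rate. The sketch below assumes FP16 weights (2 bytes per parameter), roughly 8 TB/s of HBM bandwidth, and a hypothetical 300 GB/s for a DDR5-based CPU socket; it ignores KV-cache reads and compute limits, and the function name is illustrative.

```python
def decode_tokens_per_sec_bound(params, bytes_per_param, bandwidth_bytes_per_sec, batch_size=1):
    """Upper bound on aggregate decode throughput when weight reads dominate.

    Each decoding step streams every weight from memory once; all sequences in
    the batch share that single pass, so the bound scales with batch size until
    compute (not bandwidth) becomes the limit. KV-cache traffic is ignored.
    """
    weight_bytes = params * bytes_per_param
    steps_per_sec = bandwidth_bytes_per_sec / weight_bytes
    return steps_per_sec * batch_size


PARAMS = 70e9      # Llama 3 70B
FP16_BYTES = 2     # assumption: 16-bit weights
HBM_BW = 8e12      # ~8 TB/s HBM (Blackwell-class)
DDR_BW = 300e9     # hypothetical multi-channel DDR5 CPU socket

if __name__ == "__main__":
    print(round(decode_tokens_per_sec_bound(PARAMS, FP16_BYTES, HBM_BW), 1))  # 57.1
    print(round(decode_tokens_per_sec_bound(PARAMS, FP16_BYTES, DDR_BW), 1))  # 2.1
```

At batch size 1 the HBM bound works out to roughly 57 tokens per second per sequence, which is why very large aggregate throughput figures depend on batching many requests against each pass over the weights.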

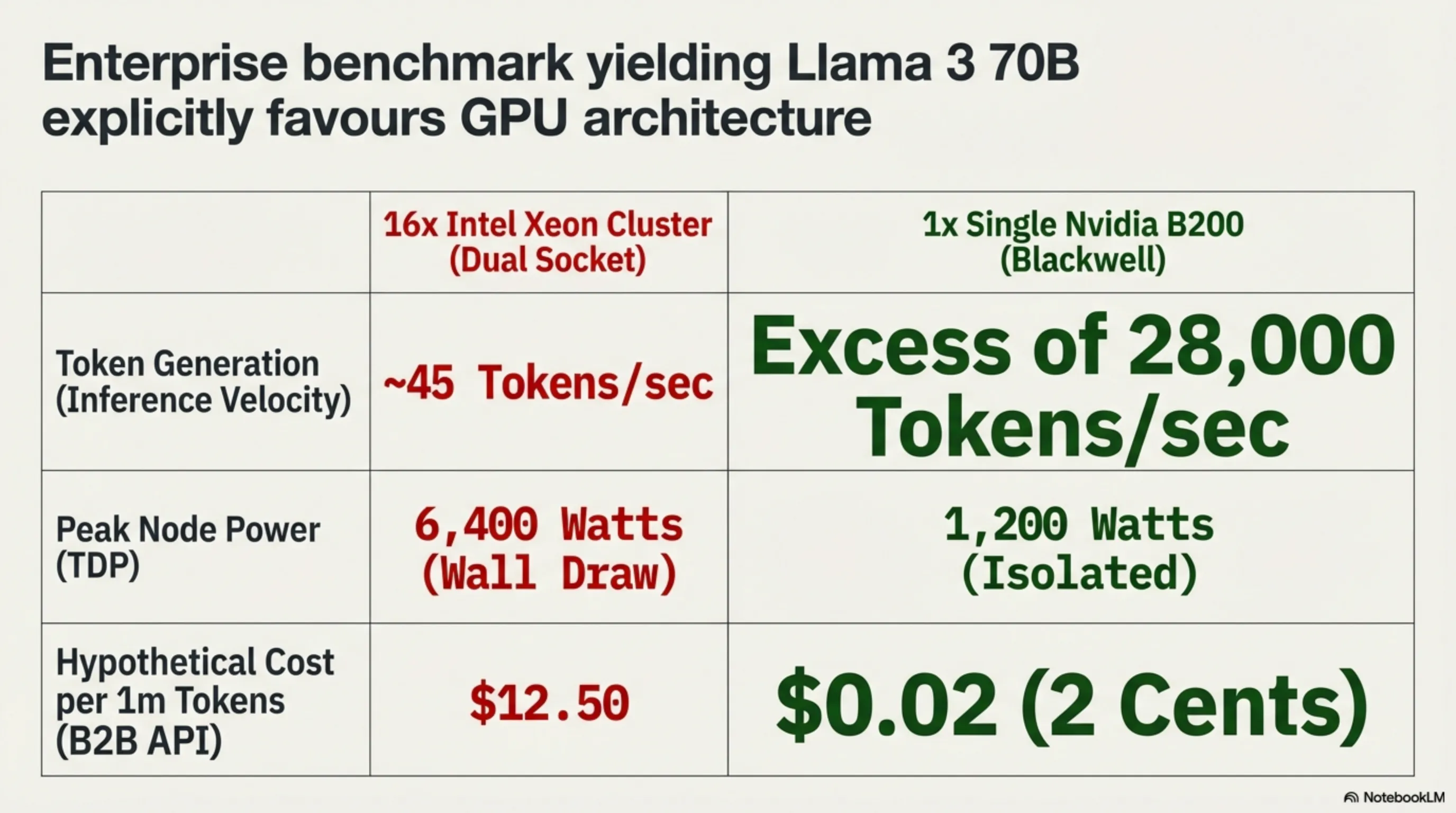

📊 Strategic Benchmark: Enterprise CPU Cluster vs. Single GPU (Serving Llama 3 70B)

| Performance KPI | 16× Intel Xeon Cluster (Dual Socket) | 1× Nvidia B200 (Blackwell) |
|---|---|---|
| Token Generation (Inference Velocity, tokens/sec) | ~45 | >28,000 |
| Peak Power Consumption | 6,400 W (wall draw) | 1,200 W (GPU only) |
| Hypothetical Cost per 1M Tokens (B2B API) | $12.50 | $0.02 (2 cents) |
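The table's rows can be combined into an energy-per-token figure, since watts are joules per second. The sketch below only reuses the table's own numbers; note that the two power figures are not measured the same way (wall draw versus GPU only), so the resulting ratios are indicative rather than exact. The helper names are illustrative.

```python
# Figures copied from the benchmark table; the power rows are not measured the
# same way (wall draw vs. GPU only), so treat the ratios as indicative.
BENCHMARK = {
    "xeon_cluster": {"tokens_per_sec": 45, "watts": 6_400, "usd_per_1m_tokens": 12.50},
    "b200": {"tokens_per_sec": 28_000, "watts": 1_200, "usd_per_1m_tokens": 0.02},
}


def joules_per_token(tokens_per_sec, watts):
    """Energy per generated token: watts divided by tokens per second."""
    return watts / tokens_per_sec


def ratio(metric):
    """How many times larger the CPU-cluster value is than the B200 value."""
    cpu, gpu = BENCHMARK["xeon_cluster"], BENCHMARK["b200"]
    return metric(cpu) / metric(gpu)


if __name__ == "__main__":
    for name, row in BENCHMARK.items():
        print(name, f"{joules_per_token(row['tokens_per_sec'], row['watts']):.4f} J/token")
    print(f"energy ratio: {ratio(lambda r: joules_per_token(r['tokens_per_sec'], r['watts'])):.0f}x")
    print(f"cost ratio: {ratio(lambda r: r['usd_per_1m_tokens']):.0f}x")
```

This prints about 142.2 J per token for the CPU cluster against roughly 0.043 J per token for the B200, an energy ratio near 3,300x, while the cost row implies a 625x gap.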

Strategic Layer 2: Teardown of the 2026 AI Server Form Factor

Even a superficial glance at the topology of modern servers reveals an absolute shift in leverage away from traditional vendors (Intel's and AMD's CPU divisions) and into the hands of accelerator architects.

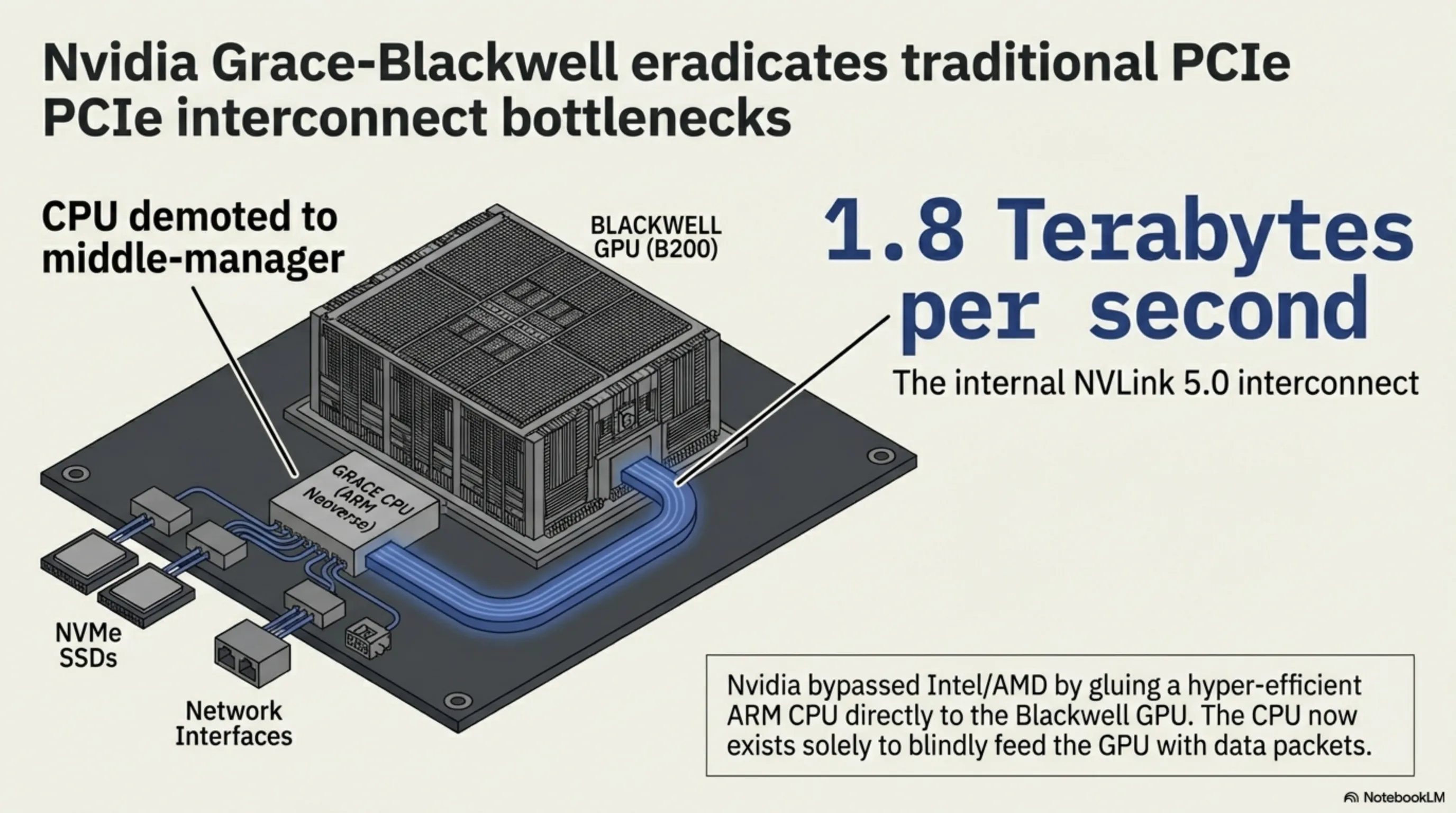

2.1 Nvidia Grace-Blackwell Accelerators and NVLink 5.0

Nvidia fired the executioner’s bullet into the x86 ecosystem by launching the Grace-Blackwell Superchip. Rather than inserting its GPUs into an Intel/AMD host, Nvidia placed a power-efficient, ARM-based CPU (Grace) directly beside its Blackwell GPUs (each itself a two-die design) and joined them with the NVLink-C2C chip-to-chip link instead of PCIe. Within this ecosystem, the CPU exists solely as a middle manager: it fetches data packets from NVMe SSDs or the network interface and blindly feeds the GPUs. Between GPUs, fifth-generation NVLink moves data at 1.8 Terabytes per second per GPU, sidestepping traditional PCIe bottlenecks.
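To see why the interconnect matters, consider the idealized time to move one model's weights over each link. This is a rough sketch under stated assumptions: PCIe 5.0 x16 at roughly 64 GB/s per direction, and NVLink 5's 1.8 TB/s per-GPU total treated as about 900 GB/s per direction; the payload (Llama 3 70B in FP16, about 140 GB) and the function name are illustrative, and protocol overhead is ignored.

```python
# Assumed headline link rates (bytes per second, one direction, ideal):
PCIE5_X16 = 64e9   # PCIe 5.0 x16, roughly 64 GB/s per direction
NVLINK5 = 900e9    # NVLink 5: 1.8 TB/s total per GPU, ~900 GB/s per direction


def transfer_seconds(payload_bytes, link_bytes_per_sec):
    """Idealized time to move a payload; ignores protocol overhead and contention."""
    return payload_bytes / link_bytes_per_sec


WEIGHTS = 70e9 * 2  # hypothetical payload: Llama 3 70B in FP16 (~140 GB)

if __name__ == "__main__":
    print(f"PCIe 5.0 x16: {transfer_seconds(WEIGHTS, PCIE5_X16):.2f} s")  # 2.19 s
    print(f"NVLink 5:     {transfer_seconds(WEIGHTS, NVLINK5):.2f} s")    # 0.16 s
```

Under these assumptions the NVLink path is roughly 14 times faster for the same payload, which is the bottleneck the paragraph above describes.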

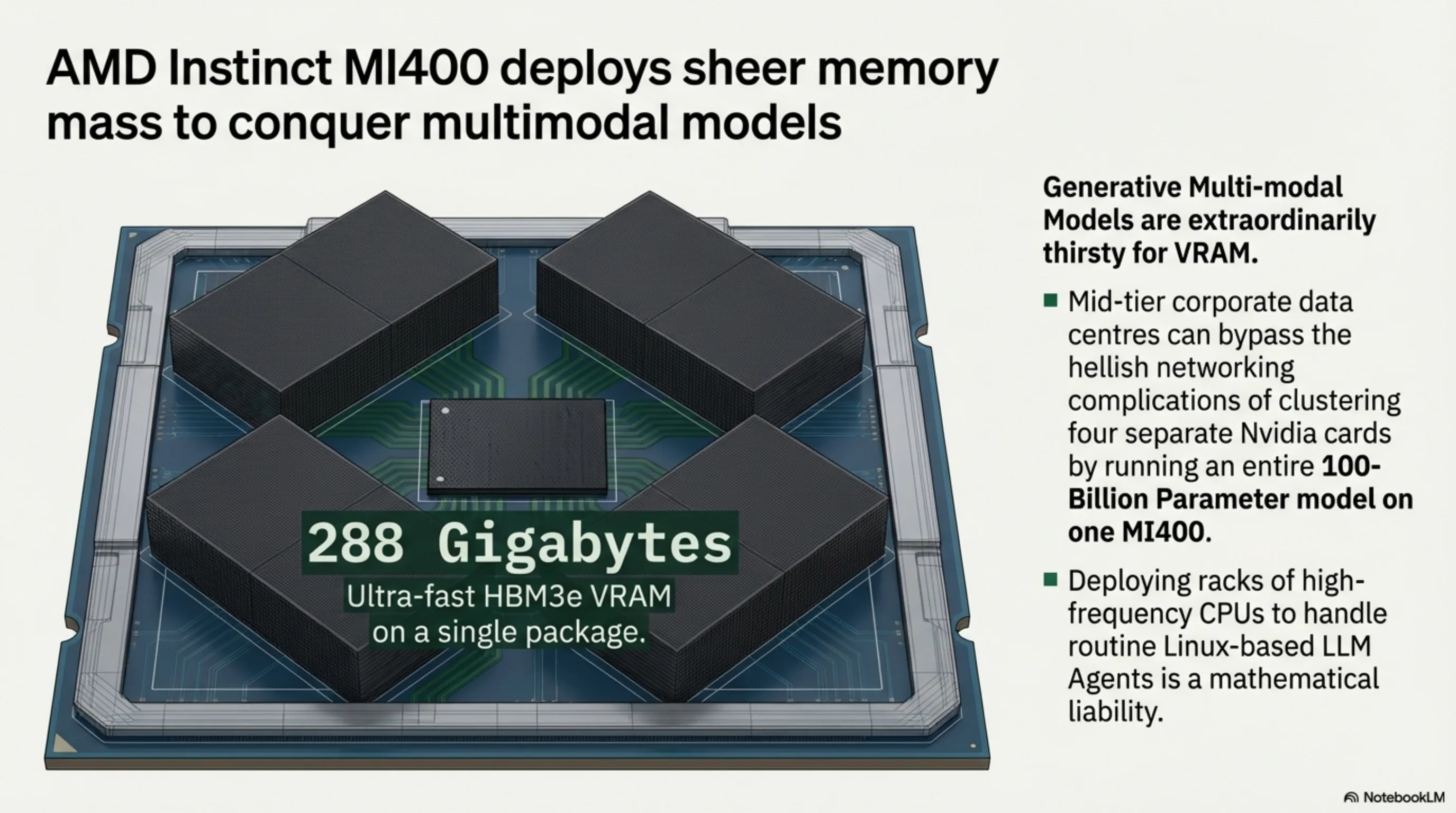

2.2 AMD Instinct MI400 Chips and the HBM3e Memory War

On the opposing front, AMD has deployed its juggernaut MI400 accelerator with an aggressive strategy focused on sheer memory capacity. Generative multimodal models are extraordinarily thirsty for VRAM, and AMD responded by packing up to 288 Gigabytes of ultra-fast HBM3e onto a single package. This lets an enterprise bypass the hellish networking complications of clustering four separate Nvidia cards together by running an entire 100-billion-parameter model on one colossal AMD chip. For mid-tier corporate data centers, this architectural simplicity is wildly attractive.
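The capacity argument reduces to simple arithmetic: parameters times bytes per parameter gives the weight footprint, and dividing by per-device memory gives a minimum device count. The sketch below counts weights only; KV cache, activations, and runtime buffers push real deployments higher, which is one reason a 100-billion-parameter model typically ends up spread across four 80 GB-class cards rather than the bare minimum of three. The 80 GB figure and the function names are illustrative assumptions.

```python
import math


def weight_footprint_gb(params, bytes_per_param):
    """Memory needed for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


def accelerators_needed(params, bytes_per_param, memory_per_device_gb):
    """Minimum device count to hold the weights (no KV cache, activations, or overhead)."""
    return math.ceil(weight_footprint_gb(params, bytes_per_param) / memory_per_device_gb)


if __name__ == "__main__":
    params = 100e9  # the 100-billion-parameter model from the text
    print(weight_footprint_gb(params, 2))       # 200.0 (FP16)
    print(accelerators_needed(params, 2, 288))  # 1 (288 GB package)
    print(accelerators_needed(params, 2, 80))   # 3 (hypothetical 80 GB card)
```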

Systems Architecture Signal: When 85% of daily corporate computing tasks (code refactoring, image generation, data sorting, SQL query generation) are executed autonomously by intelligent Linux-based LLM Agents, deploying racks filled with high-frequency CPUs is a mathematical liability.

Strategic Layer 3: Data Center Thermodynamics

The laws of classical physics are the single greatest hurdle delaying superintelligent AI. Energy cannot be destroyed, only converted, and virtually every watt a chip consumes leaves it as thermal exhaust.

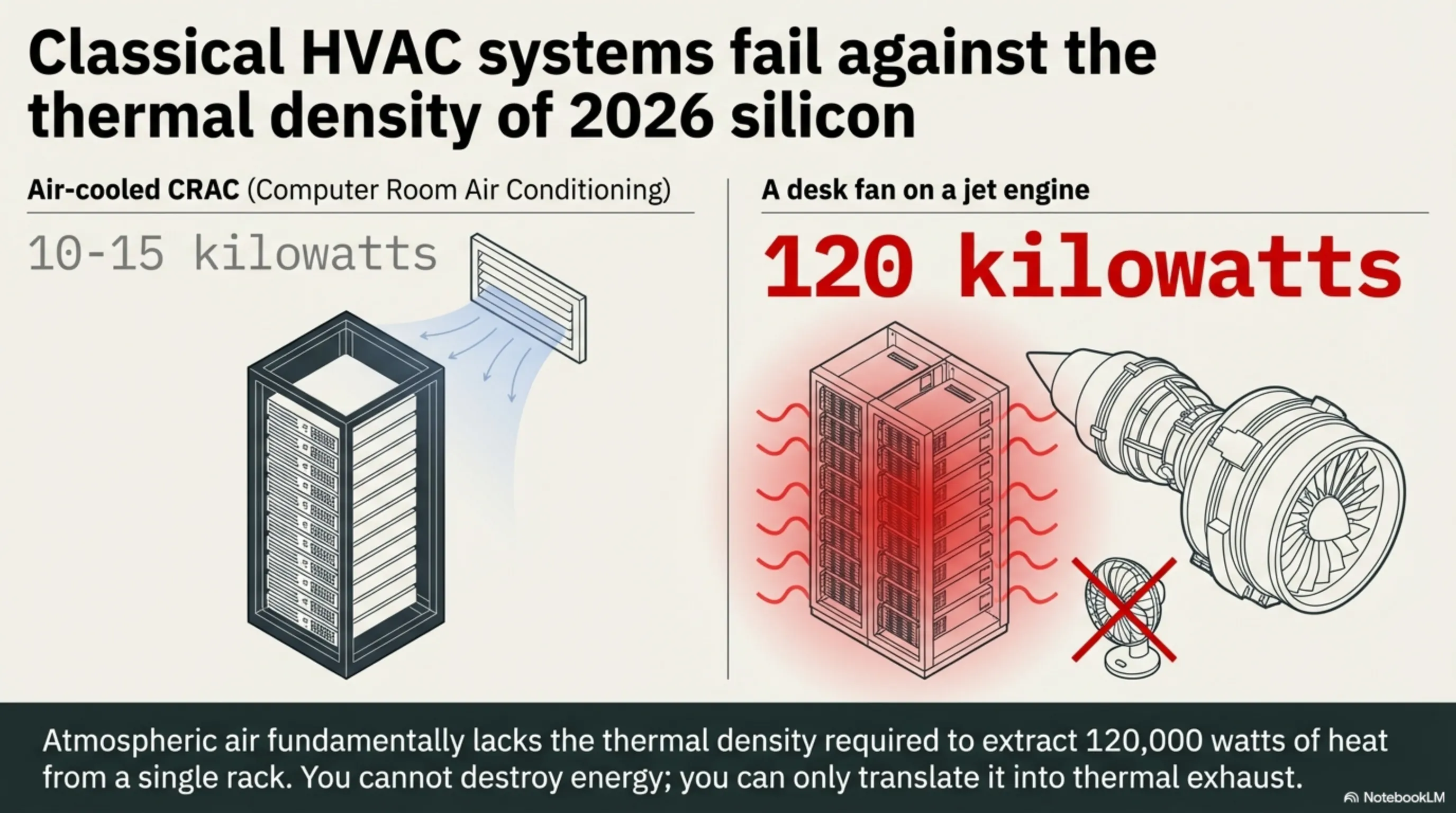

3.1 The Extinction of Traditional HVAC Systems

Historically, a rack densely packed with 1U CPU servers drew roughly 10–15 kilowatts at peak operation. Air-cooled CRAC (Computer Room Air Conditioning) units were perfectly sufficient to blow chilled air across their copper heat sinks. Today, a single rack populated with Blackwell Superchips can draw upwards of 120 kilowatts. Firing cold air at a 120,000-watt heat source is akin to cooling a jet engine with a desk fan: for the same temperature rise, a given volume of air carries away roughly 3,500 times less heat than the same volume of water, so air simply cannot extract heat from 2026 silicon fast enough.
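The heat-capacity gap becomes vivid when you solve the basic heat-transport relation Q = ρ·V̇·c_p·ΔT for the volumetric flow V̇ needed to remove a rack's heat. The sketch below assumes a 15 K coolant temperature rise and textbook room-temperature properties for air and water; the constants and function name are illustrative.

```python
def coolant_flow_m3_per_s(heat_watts, density_kg_m3, cp_j_per_kg_k, delta_t_k):
    """Volumetric flow needed to carry away heat_watts with a delta_t_k temperature rise.

    From Q = rho * V_dot * c_p * dT, solved for V_dot.
    """
    return heat_watts / (density_kg_m3 * cp_j_per_kg_k * delta_t_k)


RACK_WATTS = 120_000  # the ~120 kW Blackwell rack from the text
DELTA_T = 15          # assumed coolant temperature rise, kelvin

AIR = (1.2, 1005)     # approximate density (kg/m^3) and c_p (J/kg/K) near room temperature
WATER = (1000, 4186)

if __name__ == "__main__":
    air = coolant_flow_m3_per_s(RACK_WATTS, *AIR, DELTA_T)
    water = coolant_flow_m3_per_s(RACK_WATTS, *WATER, DELTA_T)
    print(f"air:   {air:.2f} m^3/s")         # 6.63 m^3/s
    print(f"water: {water * 1000:.2f} L/s")  # 1.91 L/s
```

Roughly 6.6 cubic meters of air per second (about 14,000 CFM) through a single rack, versus under 2 liters of water per second, is the physical case for liquid cooling.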

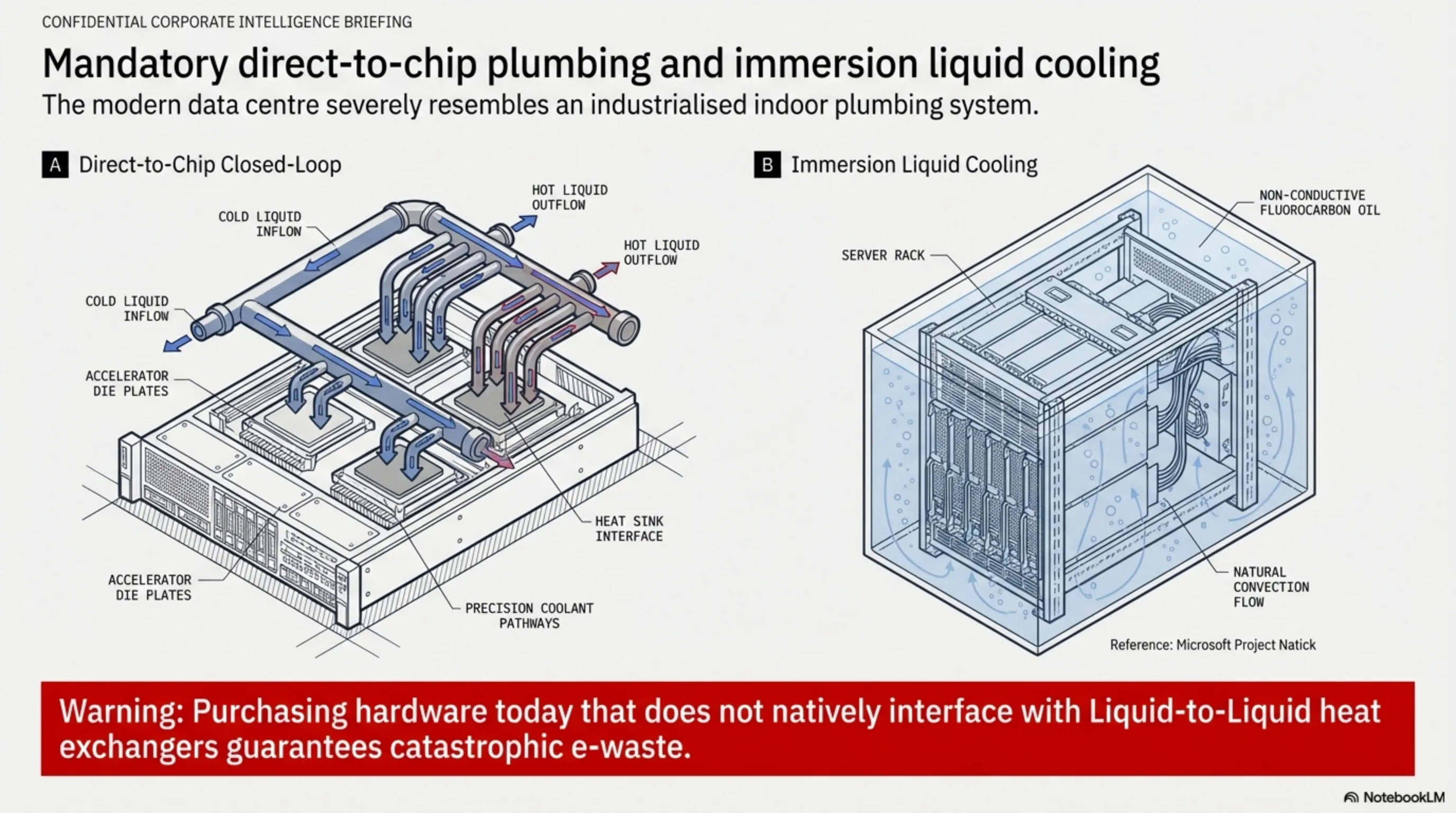

3.2 Direct-to-Chip and Immersion Liquid Cooling

Walk into a newly commissioned 2026 data center and it closely resembles an industrial plumbing system. Closed-loop direct-to-chip pipes pump coolant through cold plates mounted directly on the accelerator packages. At the experimental extremes, operators have sunk sealed, nitrogen-filled server pods to the seabed (Microsoft's Project Natick), built facilities in subterranean bunkers, and submerged entire server assemblies in tanks of non-conductive dielectric fluid (immersion cooling). Purchasing hardware today that does not natively interface with liquid-to-liquid heat exchangers all but guarantees catastrophic e-waste.

Strategic Layer 4: The Economics of Edge AI and Micro Data Centers

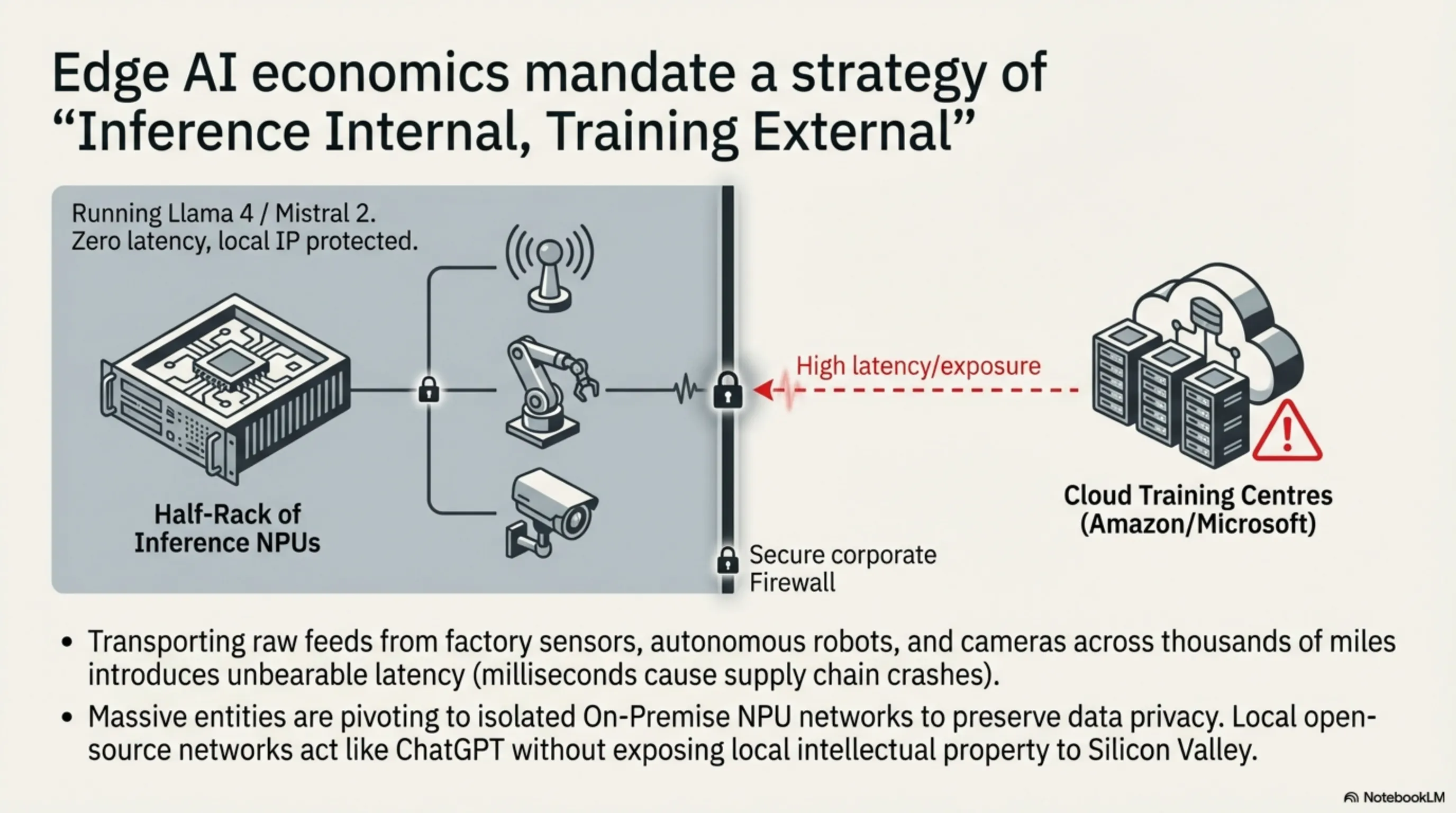

Transporting vast raw data feeds from factory-floor sensors, autonomous warehouse robots, and security cameras across thousands of miles to central Amazon or Microsoft cloud regions introduces unacceptable latency. In high-frequency decision loops, a few milliseconds of delay can cascade into supply chain crashes.
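Physics sets a floor on that latency before any server does any work. Light in glass fiber travels at roughly two-thirds of its vacuum speed, about 200,000 km/s, so distance alone fixes a minimum round-trip time. The sketch below computes only propagation delay for a hypothetical 2,000-mile path; real routes add routing detours, queuing, and processing on top.

```python
FIBER_KM_PER_S = 200_000  # light in glass fiber travels at roughly 2/3 of c
KM_PER_MILE = 1.609344


def fiber_rtt_ms(distance_miles):
    """Round-trip propagation delay over fiber; ignores routing detours, queuing, and processing."""
    one_way_s = distance_miles * KM_PER_MILE / FIBER_KM_PER_S
    return 2 * one_way_s * 1000


if __name__ == "__main__":
    print(f"{fiber_rtt_ms(2_000):.1f} ms")  # 32.2 ms for a hypothetical 2,000-mile path
```

A floor of about 32 ms per round trip is already longer than many control loops on a factory floor can tolerate, which is the case for processing at the edge.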

4.1 ROI on Deploying Open-Source Language Models On-Prem

The dominant business trend of 2026 is "Inference Internal, Training External." To preserve corporate data privacy, large enterprises are pivoting toward isolated on-premises NPU networks. A relatively inexpensive half-rack of specialized inference NPUs runs open-weight models (like Meta’s Llama 4 or Mistral Large 2) that behave internally just like ChatGPT, except they never phone home to Silicon Valley and expose local intellectual property.
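The ROI question behind "Inference Internal" is a payback calculation: upfront hardware cost divided by the monthly savings from no longer paying a cloud API bill, net of on-prem running costs. The sketch below is a minimal model with hypothetical inputs; it ignores financing, depreciation, and staffing, and all three dollar figures are placeholders rather than market data.

```python
import math


def breakeven_months(capex_usd, monthly_opex_usd, monthly_api_spend_avoided_usd):
    """Months until on-prem hardware pays for itself versus a cloud API bill.

    Returns math.inf when on-prem running costs meet or exceed the avoided spend.
    """
    monthly_savings = monthly_api_spend_avoided_usd - monthly_opex_usd
    if monthly_savings <= 0:
        return math.inf
    return capex_usd / monthly_savings


if __name__ == "__main__":
    # All three inputs are hypothetical placeholders, not market data.
    print(f"{breakeven_months(400_000, 8_000, 30_000):.1f} months")  # 18.2 months
```

The `math.inf` branch captures the case where a small workload never justifies owning the hardware, regardless of the privacy benefit.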

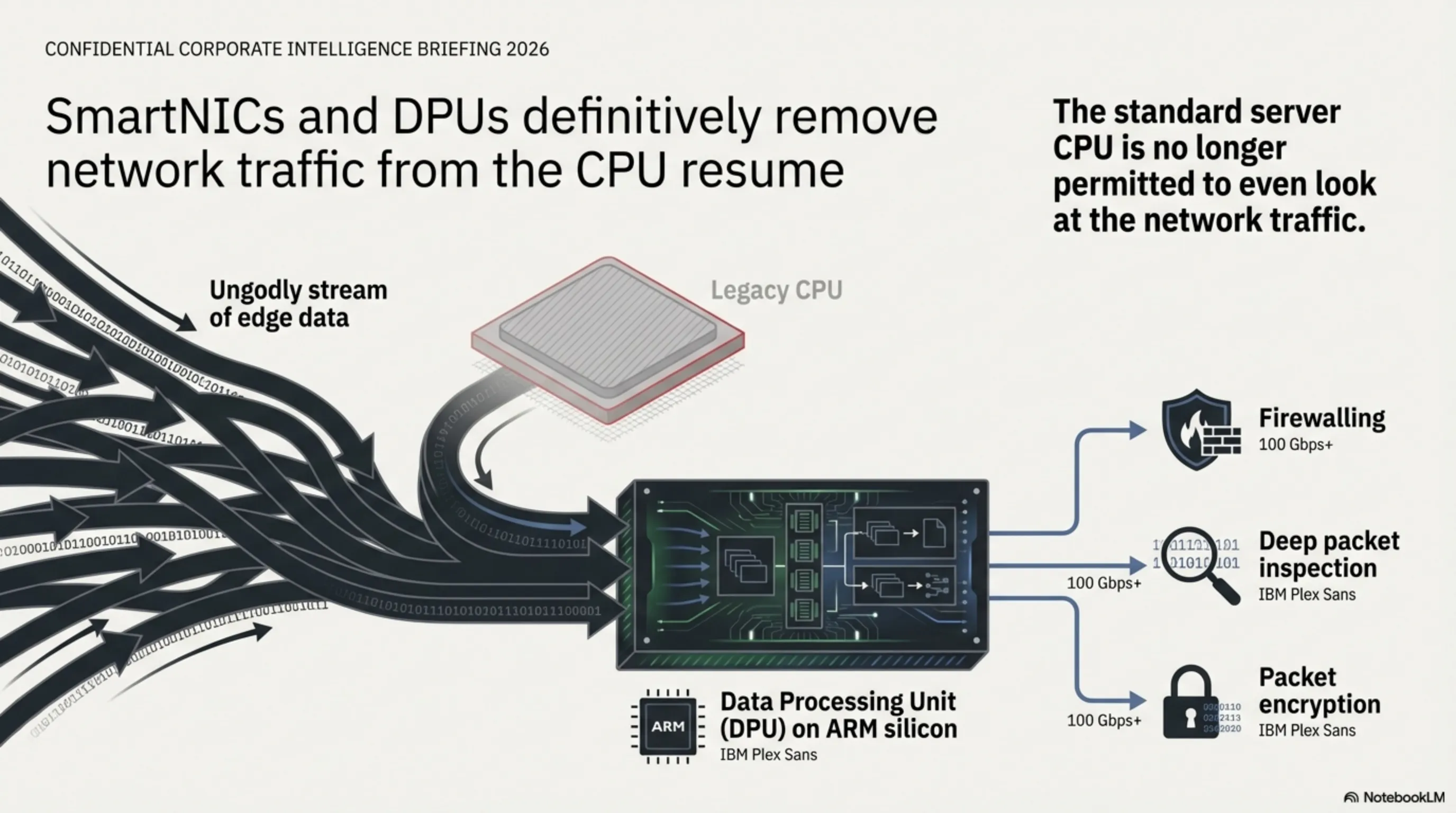

4.2 Data Processing Units (DPUs)

To control the ungodly streams of data flowing between edge servers, Smart Network Interface Cards (SmartNICs/DPUs) handle firewalling, deep packet inspection, and packet encryption on standalone ARM silicon. The standard server CPU is no longer permitted to even look at the network traffic, definitively removing the final job from its historic resume.

Strategic Layer 5: The Future of Global Cloud Logistics and Geopolitics

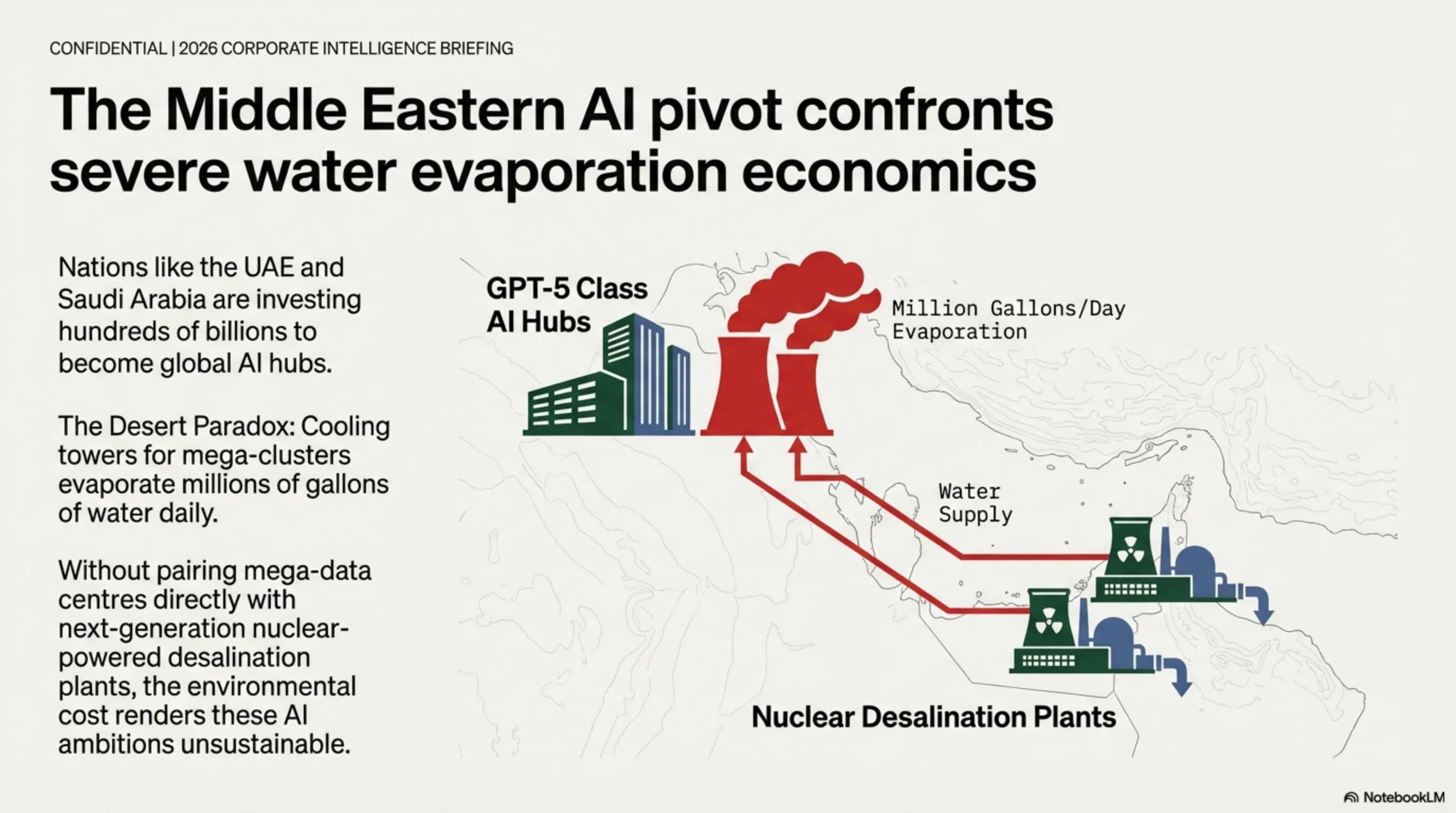

The transition to hyper-dense AI computing has radically altered global supply chains and sovereign infrastructure planning. The physical footprint of a data center is no longer measured in square footage, but in access to water and to unregulated electrical capacity.

5.1 The Middle Eastern AI Pivot and Water Economics

Nations in the Gulf (such as the UAE and Saudi Arabia) are aggressively investing hundreds of billions of dollars to become global AI hubs. However, running a massive GPT-5-level cluster in a desert ecosystem creates a paradox: the cooling towers evaporate millions of gallons of water daily in a region where fresh water is scarce. Without pairing these mega-data centers directly with next-generation nuclear-powered desalination plants, the environmental cost renders these AI ambitions unsustainable.
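The water figure follows from the latent heat of vaporization: each kilogram of water evaporated in a cooling tower removes roughly 2.45 MJ. The sketch below assumes a hypothetical 100 MW campus whose heat is rejected entirely by evaporation, and it ignores drift, blowdown, and any dry-cooling share; the constants and function name are illustrative.

```python
LATENT_HEAT_J_PER_KG = 2.45e6  # approximate latent heat of vaporization of water near 20 °C
LITERS_PER_US_GALLON = 3.785411784
SECONDS_PER_DAY = 86_400


def evaporated_gallons_per_day(heat_rejected_watts):
    """Water evaporated if all heat is rejected by evaporation (1 kg of water ≈ 1 L).

    Ignores drift, blowdown, and any dry or hybrid cooling share.
    """
    kg_per_day = heat_rejected_watts * SECONDS_PER_DAY / LATENT_HEAT_J_PER_KG
    return kg_per_day / LITERS_PER_US_GALLON


if __name__ == "__main__":
    # Hypothetical 100 MW campus; the result scales linearly with heat load.
    print(f"{evaporated_gallons_per_day(100e6) / 1e6:.2f} million gallons/day")  # 0.93
```

At close to a million gallons per day per 100 MW, a gigawatt-scale AI campus lands squarely in the "millions of gallons daily" range described above.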

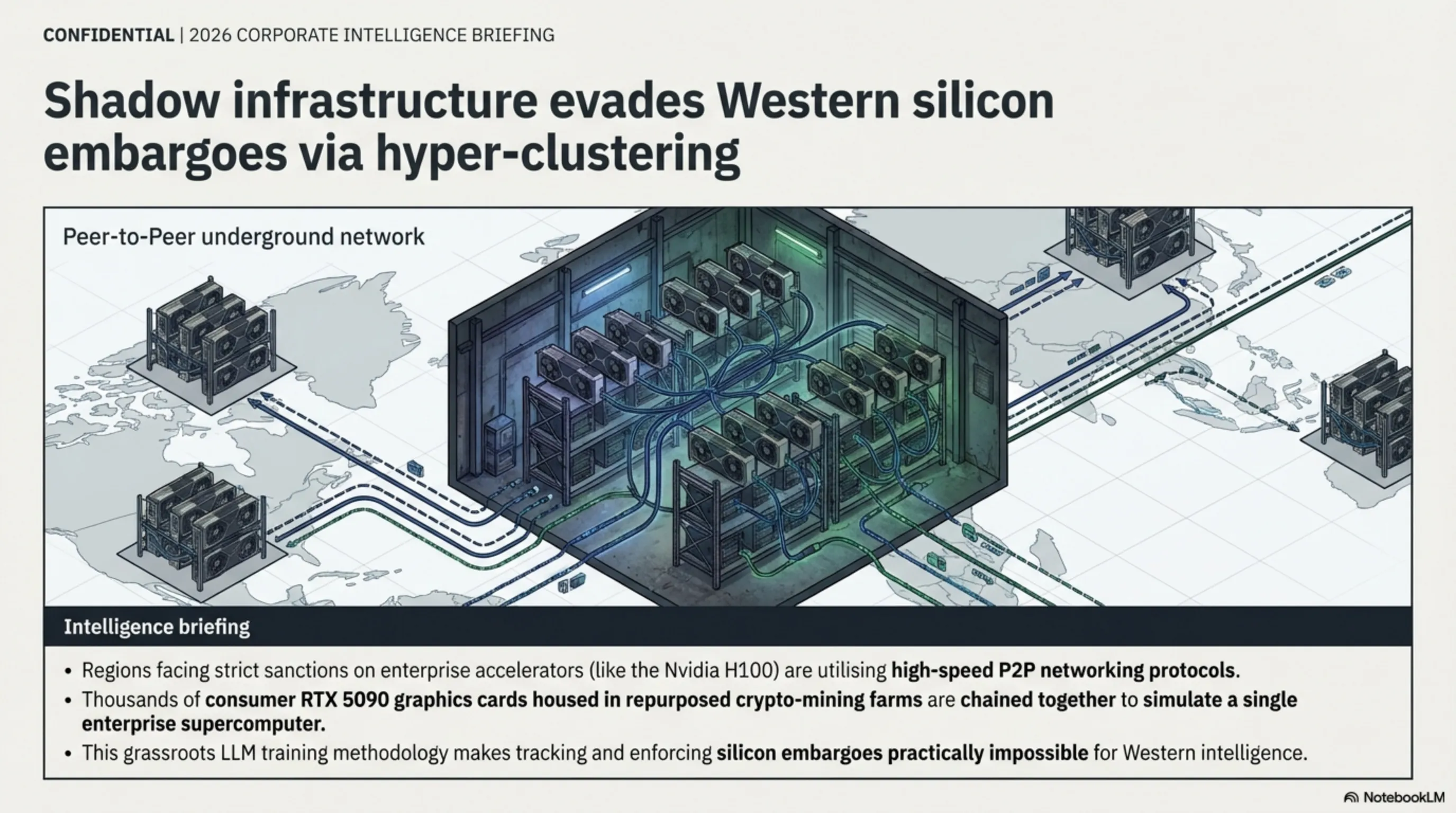

5.2 Evading Silicon Embargoes via Decentralized Clustering

For regions facing strict Western sanctions on enterprise AI accelerators (like the Nvidia H100), underground engineers have pivoted to "Hyper-Clustering" consumer gaming GPUs. By utilizing high-speed Peer-to-Peer networking protocols, thousands of RTX 5090 graphics cards—housed in repurposed crypto-mining farms—are being chained together to act as decentralized supercomputers. This grassroots LLM training methodology makes tracking and enforcing silicon embargoes practically impossible for Western intelligence.

Strategic Layer 6: Silicon Alternatives — The Rise of Photonic Compute

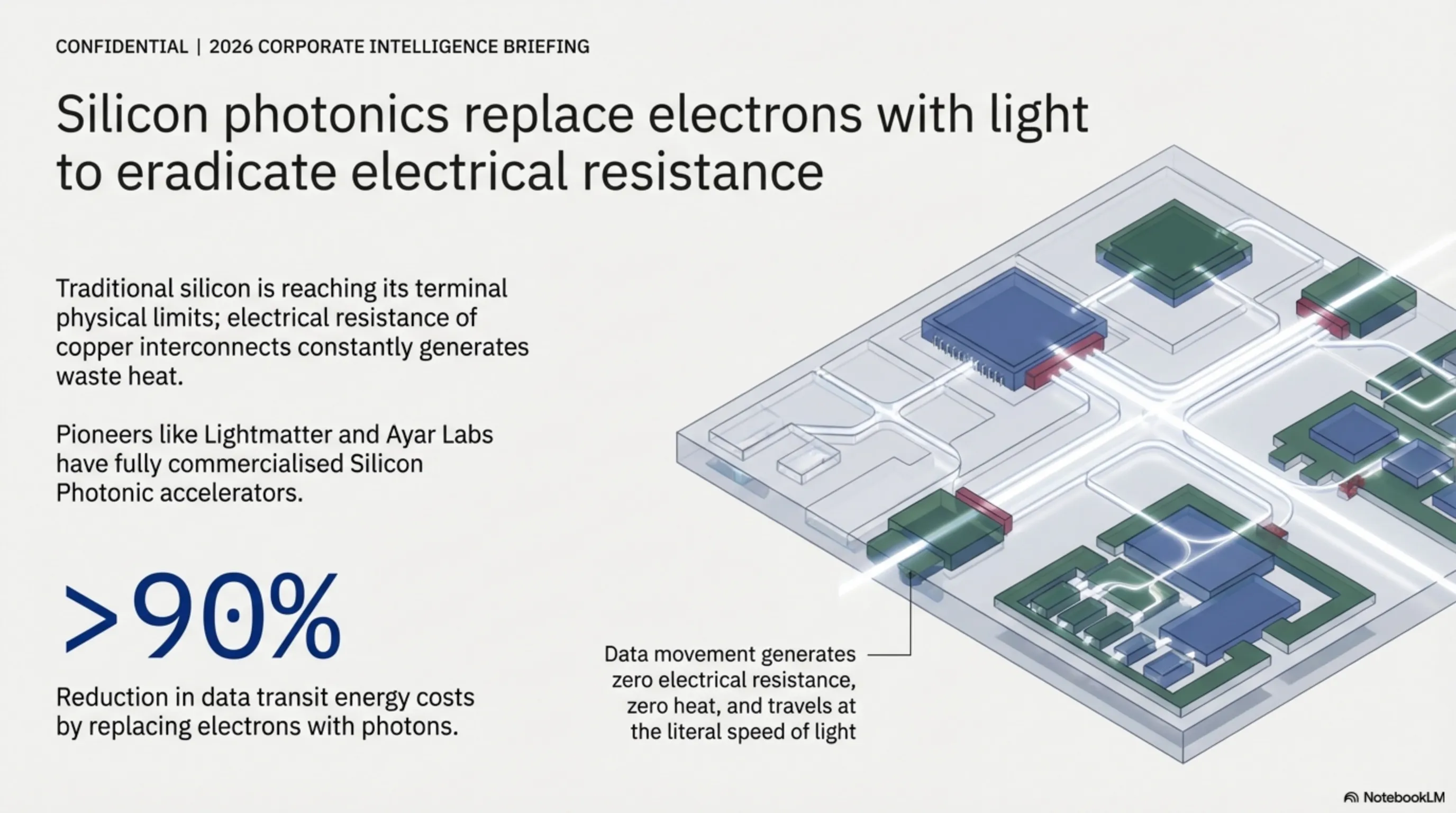

Even with immersion liquid cooling and 3D-stacked memory architectures, traditional silicon is reaching its terminal physical limits. The electrical resistance of copper interconnects within the chip constantly generates waste heat. The industry's definitive answer is replacing electrons with photons (light).

6.1 Optical Compute: Eradicating Electrical Resistance

Pioneering startups like Lightmatter and Ayar Labs are bringing silicon photonics to market in 2026, with Lightmatter focused on photonic interconnect and compute and Ayar Labs on optical I/O chiplets. Carrying data through optical waveguides instead of copper traces avoids the resistive losses that turn long electrical wires into heaters. Optical links are not free (lasers, modulators, and electrical-optical conversion still consume power), but they move each bit for far less energy, reducing the energy cost of data transit between interconnected GPU clusters by over 90%.
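Interconnect power is energy per bit multiplied by bits per second, which makes it easy to see what a 90% reduction means in watts. The sketch below applies this to the 1.8 TB/s NVLink 5 figure from Section 2.1; the 5 pJ/bit and 0.5 pJ/bit costs are hypothetical round numbers chosen to illustrate a tenfold improvement, not measured values for any product.

```python
def link_power_watts(bytes_per_sec, picojoules_per_bit):
    """Power spent purely on moving data: energy per bit times bits per second."""
    bits_per_sec = bytes_per_sec * 8
    return bits_per_sec * picojoules_per_bit * 1e-12


TRAFFIC = 1.8e12              # 1.8 TB/s, the NVLink 5 figure from Section 2.1
ELECTRICAL_PJ_PER_BIT = 5.0   # hypothetical copper SerDes cost
OPTICAL_PJ_PER_BIT = 0.5      # hypothetical optical I/O cost

if __name__ == "__main__":
    copper = link_power_watts(TRAFFIC, ELECTRICAL_PJ_PER_BIT)
    optical = link_power_watts(TRAFFIC, OPTICAL_PJ_PER_BIT)
    print(f"copper: {copper:.1f} W, optical: {optical:.1f} W")  # copper: 72.0 W, optical: 7.2 W
    print(f"reduction: {1 - optical / copper:.0%}")              # reduction: 90%
```

Multiplied across tens of thousands of links in a cluster, savings of this order per link are what make the hybrid electro-optical designs described next attractive.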

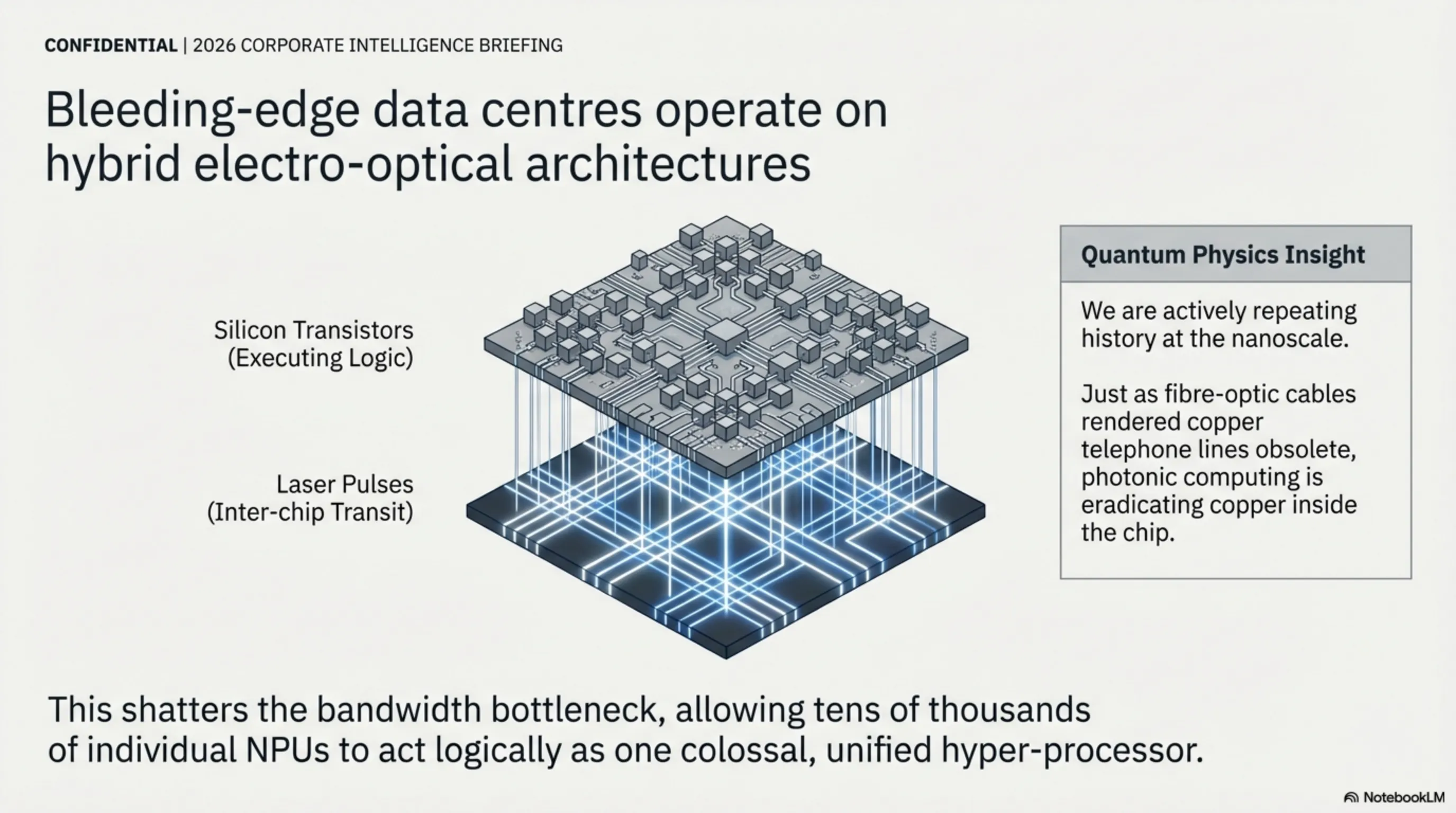

6.2 Hybrid Electro-Optical Architectures

The bleeding-edge data center now runs on a hybrid topology. Raw logical calculations are still executed by silicon transistors, while inter-chip (and, increasingly, intra-chip) communication is carried by laser pulses. This paradigm shift shatters the bandwidth bottleneck, allowing tens of thousands of individual NPUs to act logically as one colossal, unified hyper-processor.

Quantum Physics Insight: We are actively repeating history at the nanoscale. Just as fiber-optic cables rendered copper telephone lines obsolete in global telecommunications, photonic computing is currently eradicating copper interconnects inside the chip itself. The future of compute is, quite literally, made of light.

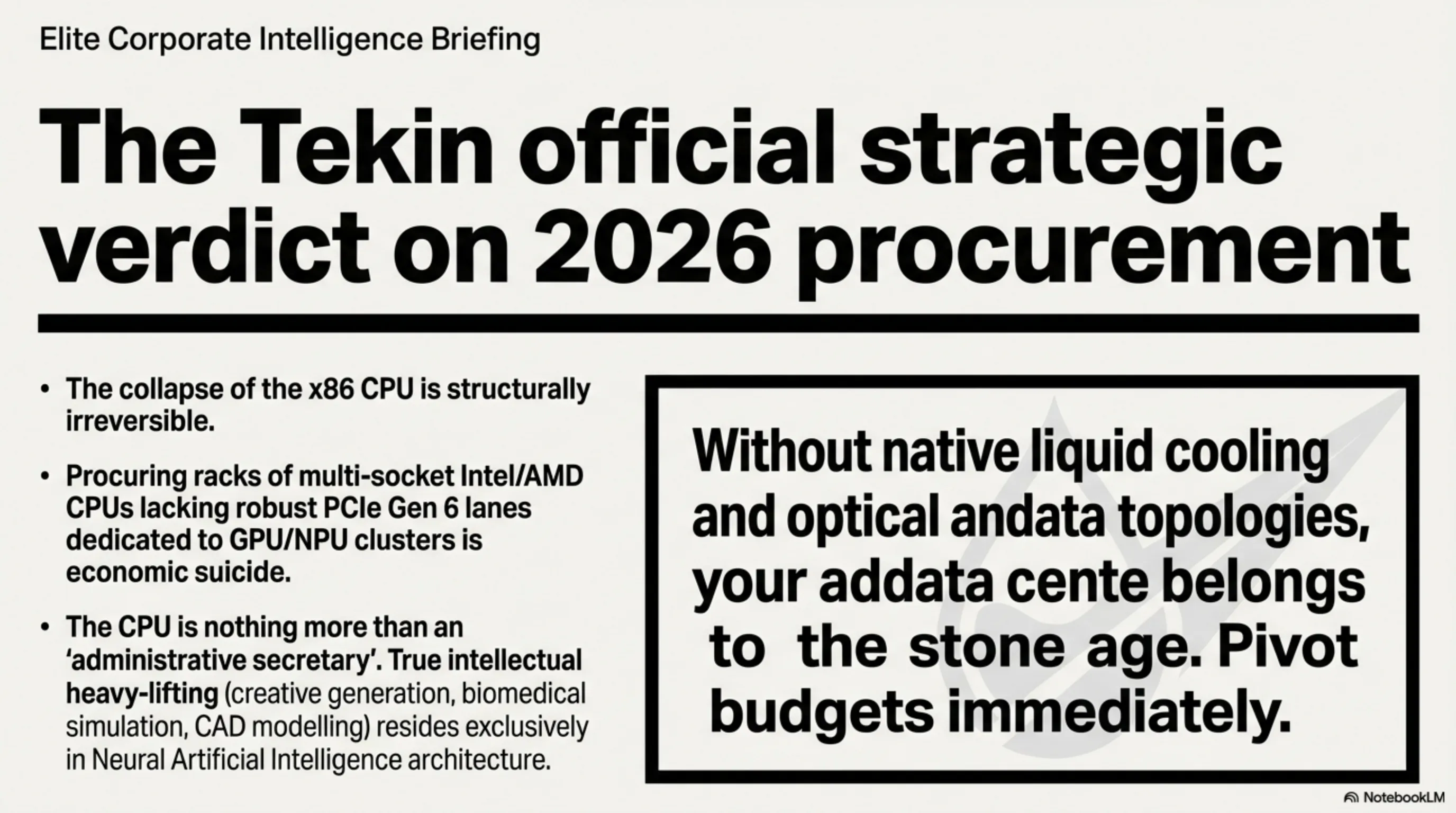

⚖️ Tekin Official Strategic Verdict

The collapse of the x86 central processing unit was not sudden, but in 2026 it has become structurally irreversible. Within modern enterprise IT, procuring racks loaded with multi-socket Intel/AMD CPUs that lack robust PCIe Gen 6 lanes dedicated to hosting GPU/NPU accelerator clusters is economic suicide. Venture capitalists and systems architects must radically pivot their procurement budgets: the traditional CPU is now nothing more than an "administrative secretary," while the true intellectual heavy lifting (creative generation, biomedical simulation, CAD modeling, and large-scale data restructuring) resides exclusively with AI accelerator architectures. Without native liquid cooling and optical data topologies, your data center belongs to the stone age.
