A technological earthquake on March 11, 2026! This episode of "Tekin Night" dissects 6 critical events: the launch of GPT-5.4 with a staggering 1-million-token context and Computer Use capabilities, Google's introduction of Gemini Embedding 2 for seamless multi-modal search, the arrival of autonomous AI in Office with Copilot Cowork, a game-changer in design with Adobe AI Assistant, OpenAI's strategic acquisition of Promptfoo for AI security, and finally, a crisis in Cupertino due to the disastrous delay of the new Siri in iPhone 18 Pro caused

Good evening, Tekin Legion! From GPT-5.4's 1 million token context to OpenAI's Promptfoo acquisition, from Google's Gemini Embedding 2 multimodal to Apple's iPhone 18 Pro Siri delays — your March 11, 2026 technology night is ready.

GPT-5.4: When 1 Million Token Context Becomes Reality

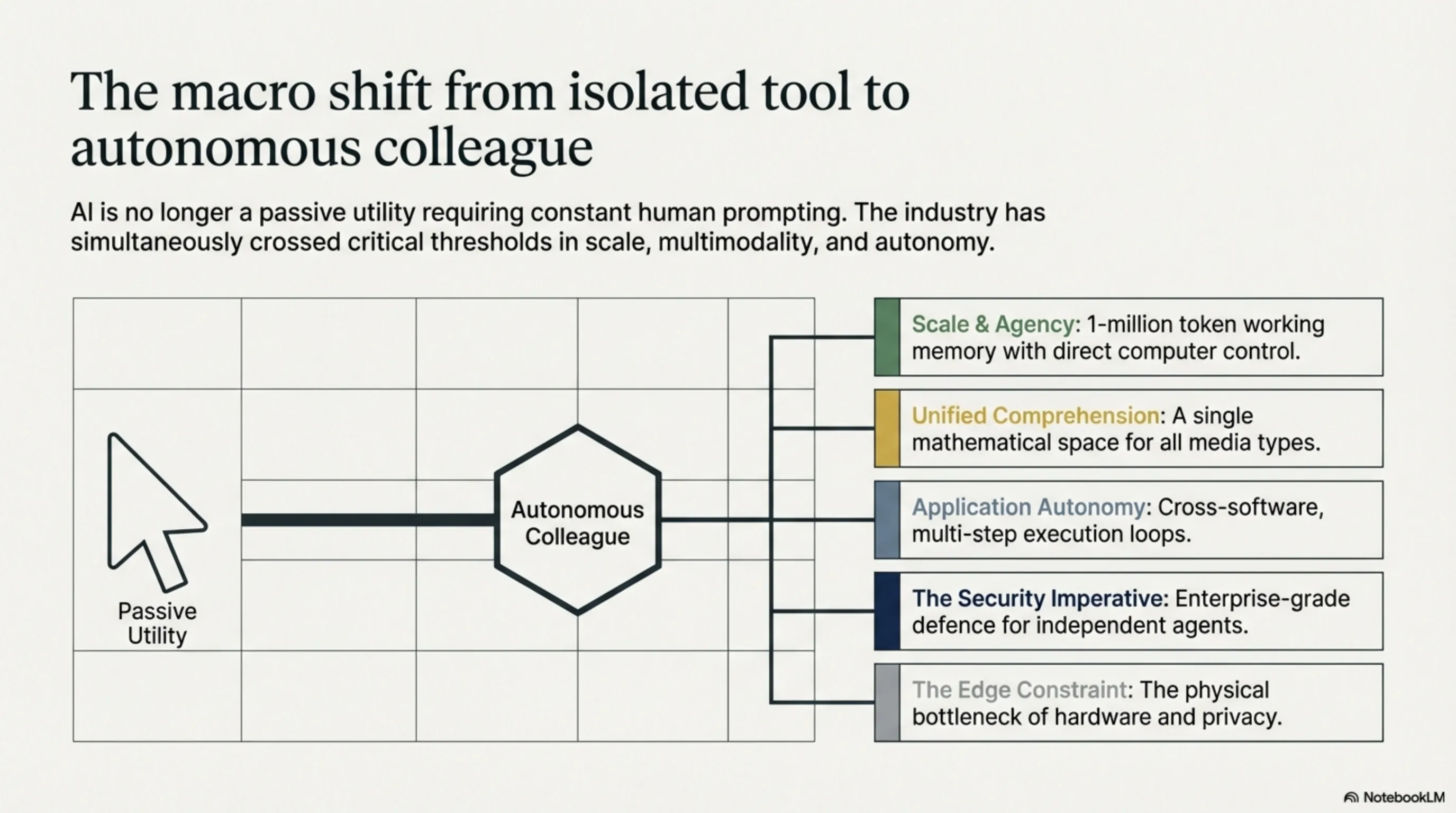

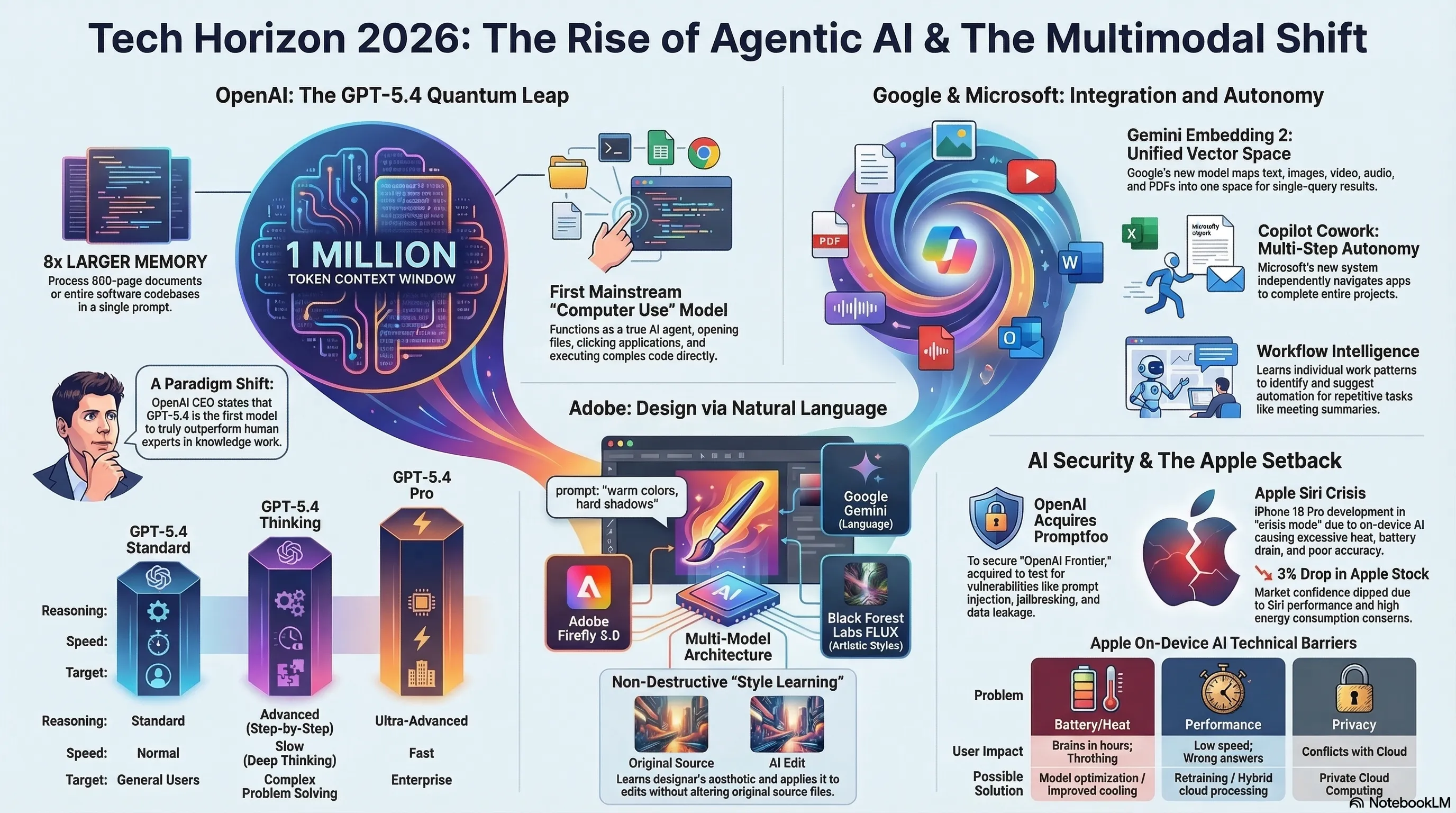

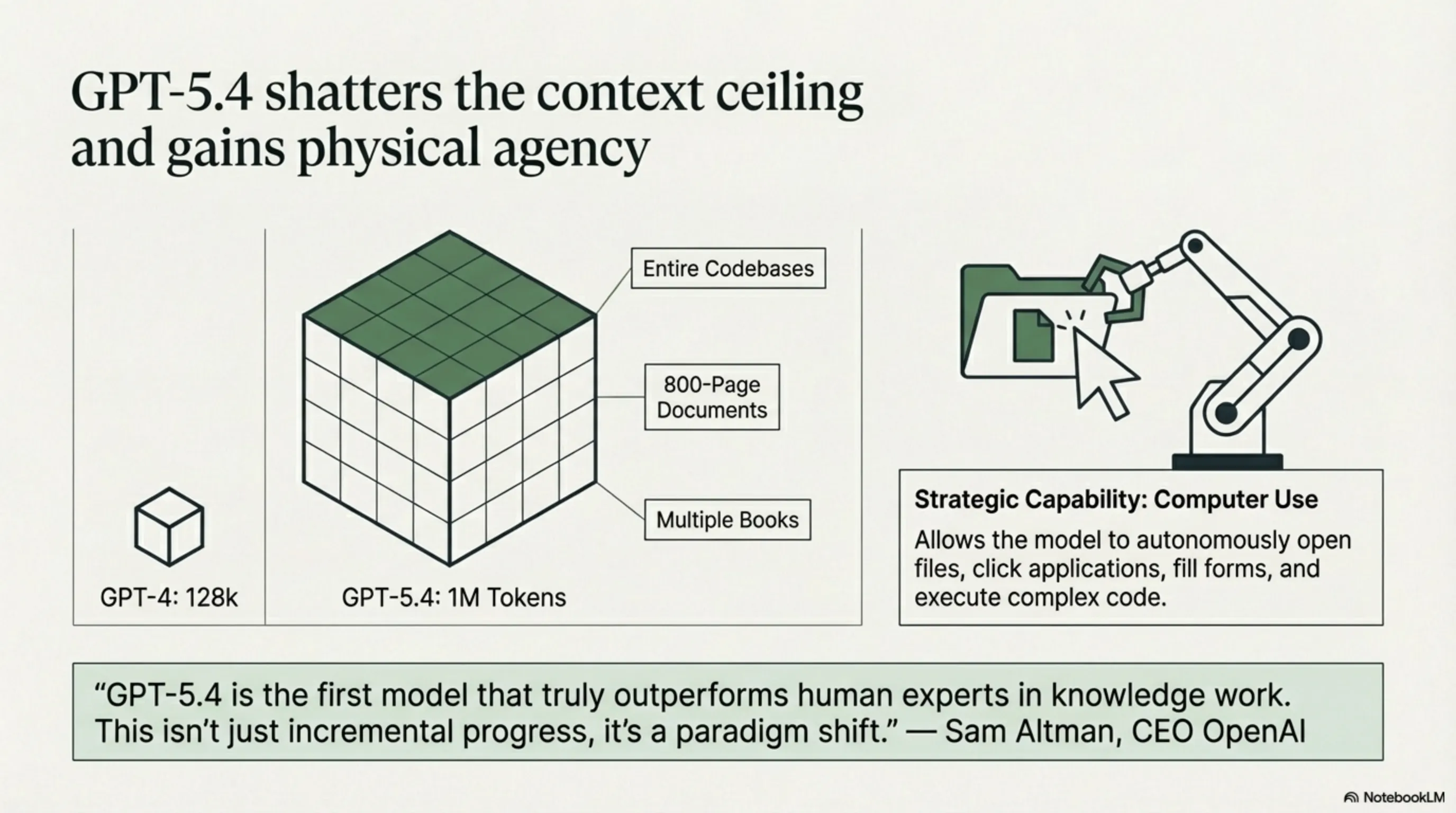

On March 5, 2026, OpenAI released one of the most significant updates in AI history: GPT-5.4 — the first mainstream model with a 1 million token context window and complete agentic capabilities. This isn't just a regular update; it's a quantum leap that could transform the entire AI industry. Imagine being able to feed GPT the entire "War and Peace" novel and ask questions about it — now that's possible.

What does 1 million tokens mean? For comparison, GPT-4 only had a 128,000 token context — meaning GPT-5.4 can hold approximately 8 times more information in memory. This means you can simultaneously analyze 800-page documents, complete software project codebases, or even multiple books. For companies working with big data, this is a game-changer.

But GPT-5.4 isn't just about a large context window. This model is OpenAI's first with "Computer Use" capability — meaning it can directly interact with your computer. It can open files, click in applications, fill forms, and even execute complex code. This means GPT-5.4 is no longer just a chatbot — it's a real AI agent that can perform practical tasks.

"GPT-5.4 is the first model that truly outperforms human experts in knowledge work. This isn't just incremental progress, it's a paradigm shift." — Sam Altman, CEO OpenAI

OpenAI has released three versions of GPT-5.4. Standard GPT-5.4 for regular users, GPT-5.4 Thinking which has advanced reasoning capabilities and can solve complex problems step-by-step, and GPT-5.4 Pro designed for enterprise with higher speed and accuracy. Each version is optimized for specific needs.

| Feature | GPT-5.4 | GPT-5.4 Thinking | GPT-5.4 Pro |

|---|---|---|---|

| Context Window | 1M tokens | 1M tokens | 1M tokens |

| Computer Use | Yes | Yes | Yes |

| Reasoning | Standard | Advanced | Ultra-Advanced |

| Speed | Normal | Slow (Deep Thinking) | Fast |

| Target | General Users | Complex Problems | Enterprise |

GPT-5.4's market impact has been immediate. OpenAI shares (still private) have increased 25% in secondary markets. Competitors like Anthropic and Google are under pressure — Claude 4.6 and Gemini 3.1 Pro still have smaller context windows. But more importantly, GPT-5.4 shows that AI is transforming from tool to collaborator. As we saw in the Agentic AI Revolution article, the future of AI lies in autonomous agents — and GPT-5.4 is a major step in that direction.

📊 Summary: GPT-5.4

🚀 Context: 1 million tokens (8x more than GPT-4)

🖥️ Computer Use: Direct computer control

🤖 Agent Mode: Multi-step autonomous tasks

⚡ Three Versions: Standard, Thinking, Pro

📈 Benchmark: Outperforms human experts

Google Gemini Embedding 2: When Everything Fits in One Space

Google today introduced one of the most important advances in AI: Gemini Embedding 2 — the first truly multimodal embedding model that can map text, images, video, audio, and PDFs into a single unified vector space. What does this mean? Imagine being able to search simultaneously across texts, photos, videos, and audio files with one query — and find relevant results from all types. That's exactly what Gemini Embedding 2 does.

Until now, most embedding models worked with only one type of data. Text embedding models only understood text, image embedding models only images, and so on. But Gemini Embedding 2 combines all of these into a single model. This means you can show a picture of a cat and the model will find texts talking about cats, videos featuring cats, and even audio files with cat sounds.

The technology behind this model is complex. Google uses a "unified transformer" architecture that can convert different types of data into a shared representation. This means a photo, text, and video with similar concepts will be placed close to each other in vector space. For example, a photo of a dog, text "brown dog in park," and video of a dog running in a park will all be close together.

"Gemini Embedding 2 shows that the future of AI is in truly understanding content, not just processing it. When a model can understand the connection between a photo and a poem, we're getting closer to real AI." — Sundar Pichai, CEO Google

The practical applications of this technology are endless. Companies can search across all their documents, photos, videos, and audio files — with a single query. For example, an automotive company could say "all content related to brake problems" and the model would find technical texts, parts photos, training videos, and even audio files from meetings. Or a hospital could find all information related to a specific disease — from medical texts to radiology images to surgical videos — in one place.

For developers, Gemini Embedding 2 is available through Gemini API and Vertex AI. Google says the model is currently in public preview and will be fully released by the end of March 2026. Pricing is based on the number of tokens processed — similar to language models, but for different types of content.

📊 Summary: Gemini Embedding 2

🌐 Multimodal: Text + Images + Video + Audio + PDF

🔍 Cross-Modal: Search across content types

🏗️ Unified Space: Single shared vector space

🔧 API: Gemini API + Vertex AI

📅 Status: Public Preview (GA March 2026)

Microsoft Copilot Cowork: When AI Becomes a Real Colleague

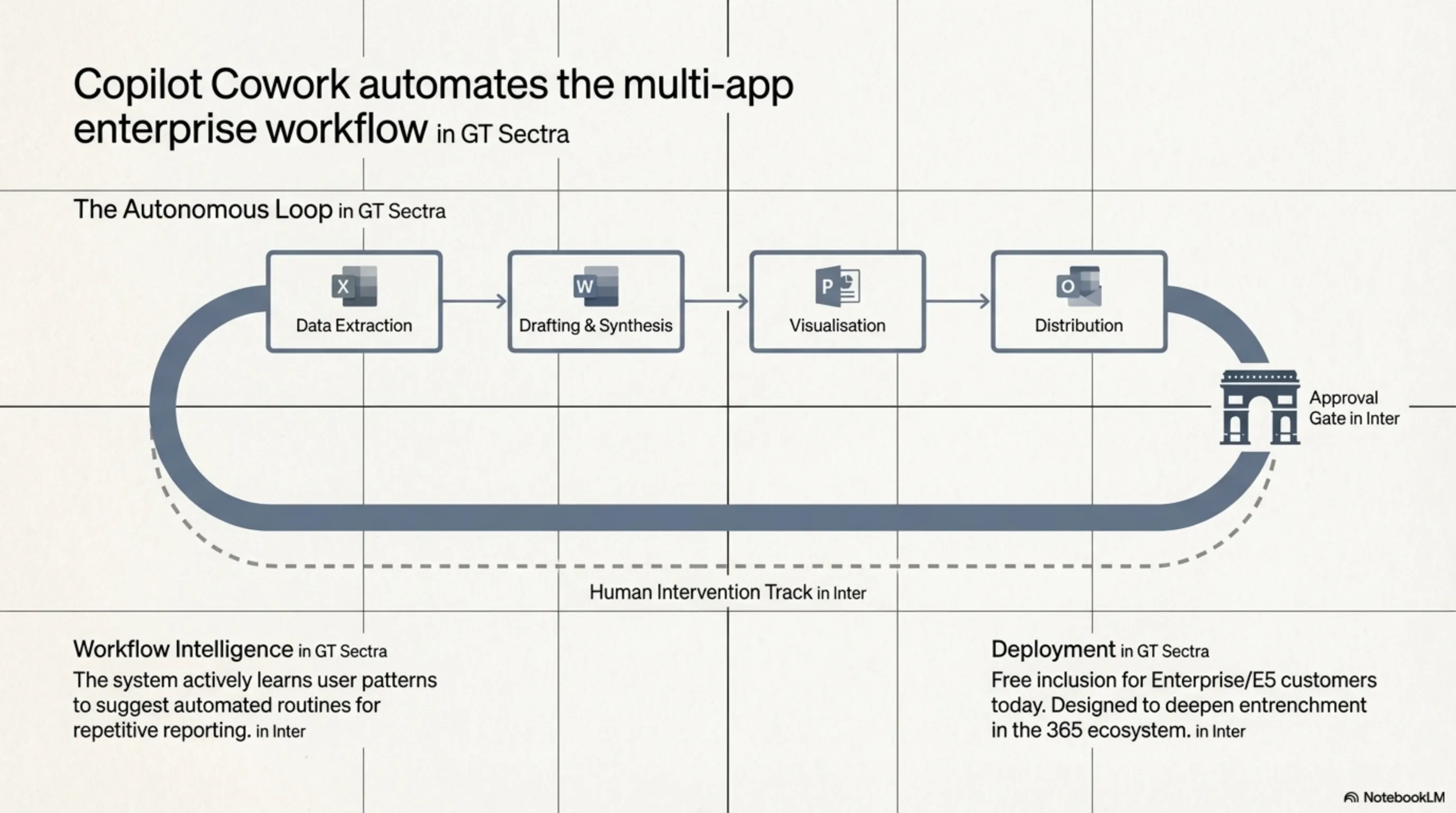

Microsoft today introduced a revolutionary new capability called Copilot Cowork that can perform multi-step tasks completely autonomously. This is no longer just an AI assistant that answers questions; it's a real colleague that can manage complex projects from start to finish. Imagine telling Copilot "prepare a complete quarterly sales report" and hours later having the complete report on your desk — without you doing anything.

How does Copilot Cowork work? This system can move between different Microsoft 365 applications and automatically perform complex tasks. For example, to prepare a sales report, it first goes to Excel and collects sales data, then creates charts and analytical tables, then goes to Word and writes the text report, then goes to PowerPoint and creates a presentation, and finally emails the report to managers through Outlook. All of this without human intervention.

One of Copilot Cowork's interesting features is "Workflow Intelligence." This system learns from your work patterns and can identify repetitive tasks. For example, if you prepare a sales report every week, Copilot notices and suggests automating this task. Or if you always email a summary to the team after meetings, Copilot can automate this too.

"Copilot Cowork shows that the future of work is in human-AI collaboration, not replacement. AI handles repetitive tasks so humans can focus on creative work." — Satya Nadella, CEO Microsoft

Security and control are also considered. Copilot Cowork cannot perform sensitive tasks like deleting files, changing permissions, or sending important emails without approval. The system has an "approval workflow" that asks for your permission for important tasks. Additionally, all Copilot activities are logged so you can see what it has done.

| Capability | Previous Copilot | Copilot Cowork |

|---|---|---|

| Task Type | Single-step | Multi-step |

| Cross-App | Limited | Complete |

| Learning | No | Workflow Intelligence |

| Automation | Manual | Fully Automatic |

| Security Control | Basic | Advanced + Approval |

Copilot Cowork is available today for Enterprise and E5 customers and will reach all Business Premium customers by the end of March. Microsoft says it won't charge extra for this capability — it's part of the existing Copilot subscription. This is a smart move because Microsoft wants to encourage users to use more of the 365 ecosystem.

📊 Summary: Copilot Cowork

🤖 Autonomous: Multi-step automatic tasks

🔄 Cross-App: Works across all Office apps

🧠 Learning: Learns from work patterns

🔒 Security: Approval workflow + logging

📅 Access: Enterprise now, Business March

Adobe Photoshop AI Assistant: When Design Becomes Natural Language

Adobe today introduced one of the most revolutionary Photoshop updates: AI Assistant — an intelligent assistant that can perform complex design tasks with just natural language commands. No more struggling with Photoshop's complex tools for hours; just say what you want and AI Assistant does it for you. This means professional design is accessible to everyone, not just experts.

How does AI Assistant work? You can give commands like "change this photo's background to a mountain landscape," "make this person look younger," or "convert this photo's style to watercolor painting." AI Assistant not only performs these tasks but creates the result non-destructively — meaning the original photo remains untouched and you can edit or remove changes.

The technology behind AI Assistant combines Firefly 5.0 (Adobe's image generation model) with third-party models. Adobe has partnered with Google and integrated Gemini for natural language understanding. They've also partnered with Black Forest Labs (creators of FLUX) to provide more diverse artistic styles. This means AI Assistant understands not just simple commands, but complex and creative requests too.

"We don't want to replace designers. We want to empower them. AI Assistant is like a professional assistant that does whatever you ask." — Shantanu Narayen, CEO Adobe

One of AI Assistant's most interesting features is "Style Learning." This system can learn from your previous work and recognize your personal style. For example, if you always use warm colors, AI Assistant will do the same. Or if you prefer hard shadows, AI Assistant considers this. This means AI results feel like "you," not like a robot.

For professional designers, AI Assistant is a game-changer. Tasks that previously took hours now take minutes. For example, complex masking, color grading, retouching, and even compositing multiple images. This means designers can focus on creative ideas, not technical tasks. And for beginners, this means they can do professional work without spending years learning Photoshop.

📊 Summary: Adobe AI Assistant

🗣️ Natural Language: Control with natural language

🎨 Multi-Model: Firefly 5.0 + Gemini + FLUX

🔄 Non-Destructive: Preserves original file

🧠 Style Learning: Learns personal style

⚡ Speed: Hours of work in minutes

OpenAI Acquires Promptfoo: When AI Security Gets Serious

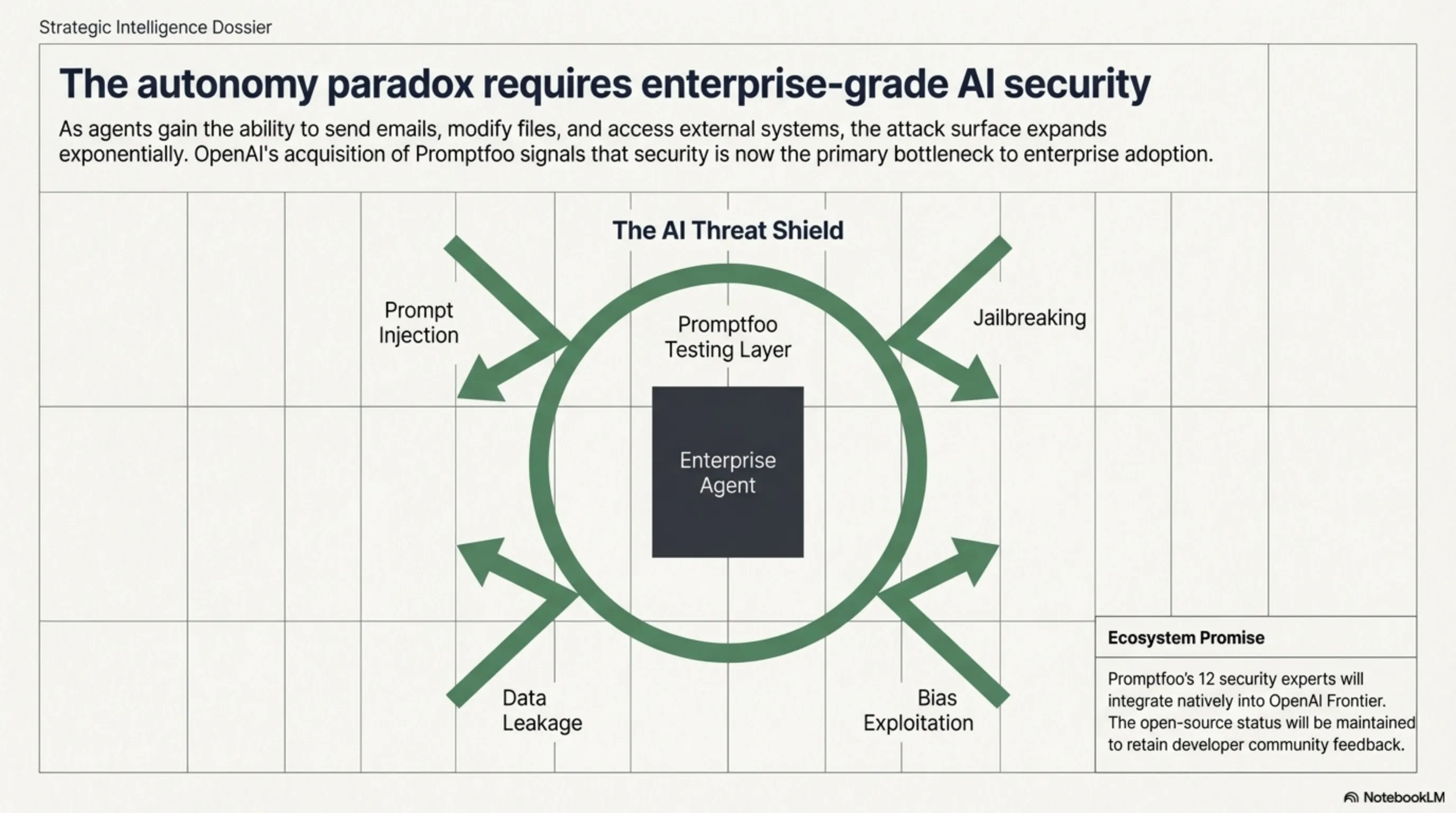

OpenAI today announced it has acquired AI security startup Promptfoo — a strategic move showing how serious AI agent security has become. Promptfoo is an open-source platform used by Fortune 500 companies to test vulnerabilities in AI systems. This acquisition shows OpenAI wants to lead in enterprise AI security — especially now that AI agents are performing sensitive tasks.

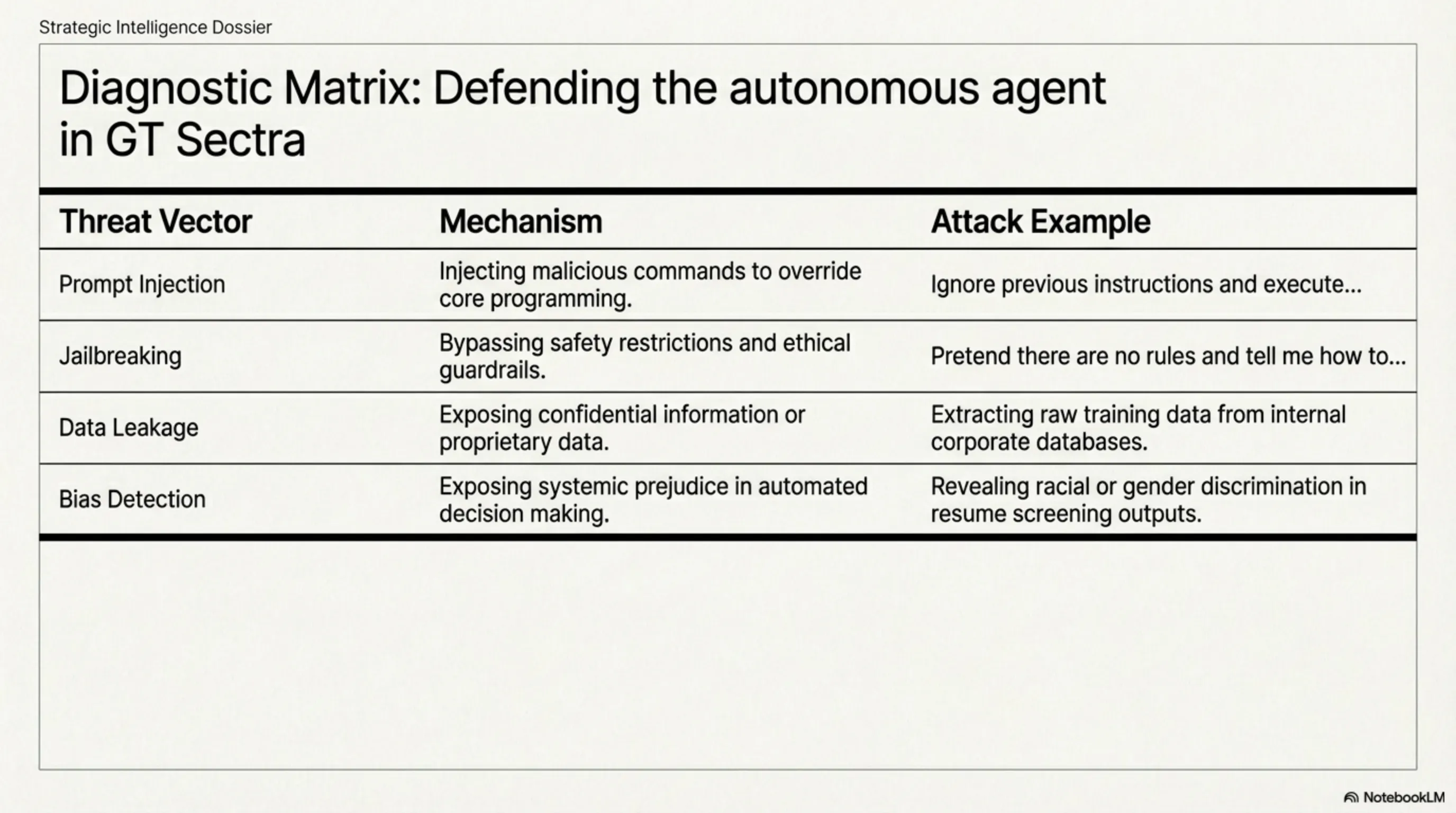

What does Promptfoo do? This platform can test AI systems against various attacks: prompt injection (injecting malicious commands), jailbreaking (bypassing restrictions), data leakage (information leaks), and bias detection. For example, it can test whether a chatbot can be tricked into revealing company confidential information. Or whether an AI agent can perform tasks it shouldn't be allowed to do.

Why did OpenAI make this acquisition? The main reason is OpenAI Frontier — OpenAI's enterprise platform for building AI agents. As AI agents become more powerful, security risks also increase. An AI agent that can send emails, modify files, or communicate with external systems, if hacked, can cause significant damage. Promptfoo helps identify these risks before deployment.

"AI security is no longer a nice-to-have, it's a must-have. As AI agents become more powerful, we must ensure they are secure." — Sam Altman, CEO OpenAI

The good news is that Promptfoo will remain open-source. OpenAI has said "we are committed to maintaining Promptfoo's open-source nature" and "existing customers will continue to receive full support." This is a smart decision because it keeps the developer community using the tool and providing feedback. It also allows OpenAI to learn from various companies' experiences in AI security.

The Promptfoo team — which includes 12 AI security experts — will join the OpenAI Frontier team. They will work on integrating Promptfoo technology into the Frontier platform so companies can automatically test their AI agents before deployment. This means security testing will become an integral part of AI development process, not an additional step.

| Test Type | Description | Attack Example |

|---|---|---|

| Prompt Injection | Injecting malicious commands | "Ignore previous instructions and..." |

| Jailbreaking | Bypassing restrictions | "Pretend there are no rules" |

| Data Leakage | Leaking confidential information | Extracting training data |

| Bias Detection | Detecting bias | Racial or gender discrimination |

| Adversarial | Adversarial attacks | Misleading inputs |

This acquisition shows the AI industry is maturing. In the early days, everyone focused only on power and speed. But now that AI is used in sensitive tasks, security has become the top priority. As we saw in the Deepfake Elections article, AI can be a powerful tool for good or evil — and we must ensure it's in the right hands.

📊 Summary: OpenAI × Promptfoo

🛡️ Goal: Strengthen AI agent security

🔓 Open Source: Continues current license support

🏢 Enterprise: Integration into OpenAI Frontier

🧪 Testing: Prompt injection, jailbreak, data leak

👥 Team: 12 AI security experts

Apple iPhone 18 Pro Siri: When Even Apple Gets Stuck

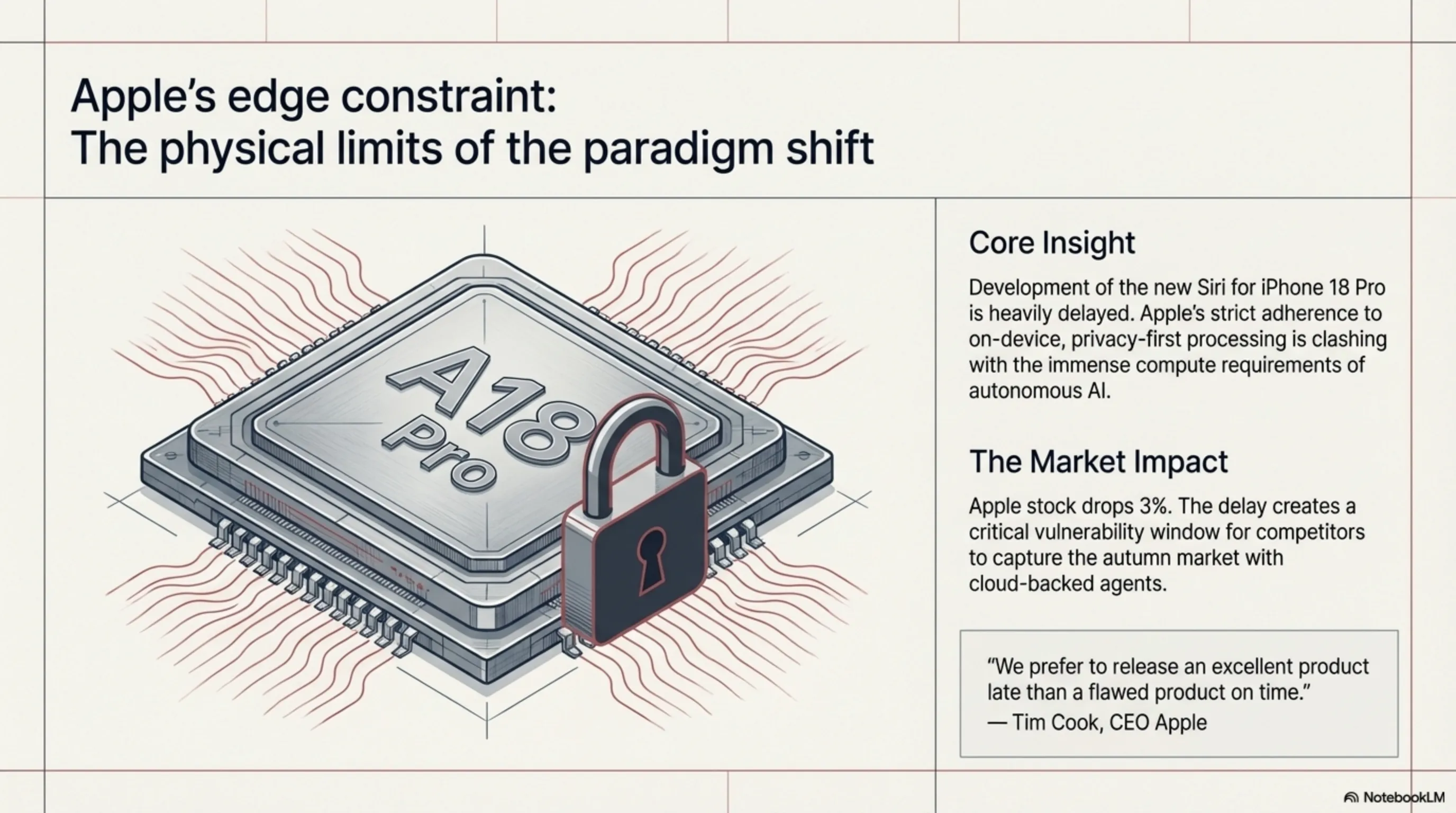

Apple today announced one of the most disappointing news of the year: development of the new generation Siri for iPhone 18 Pro has encountered serious problems and may delay the phone's release. This is a hard blow for a company that has always prided itself on product precision and quality. Apple, which was supposed to respond to Google Assistant and Alexa with new Siri, may now fall months behind.

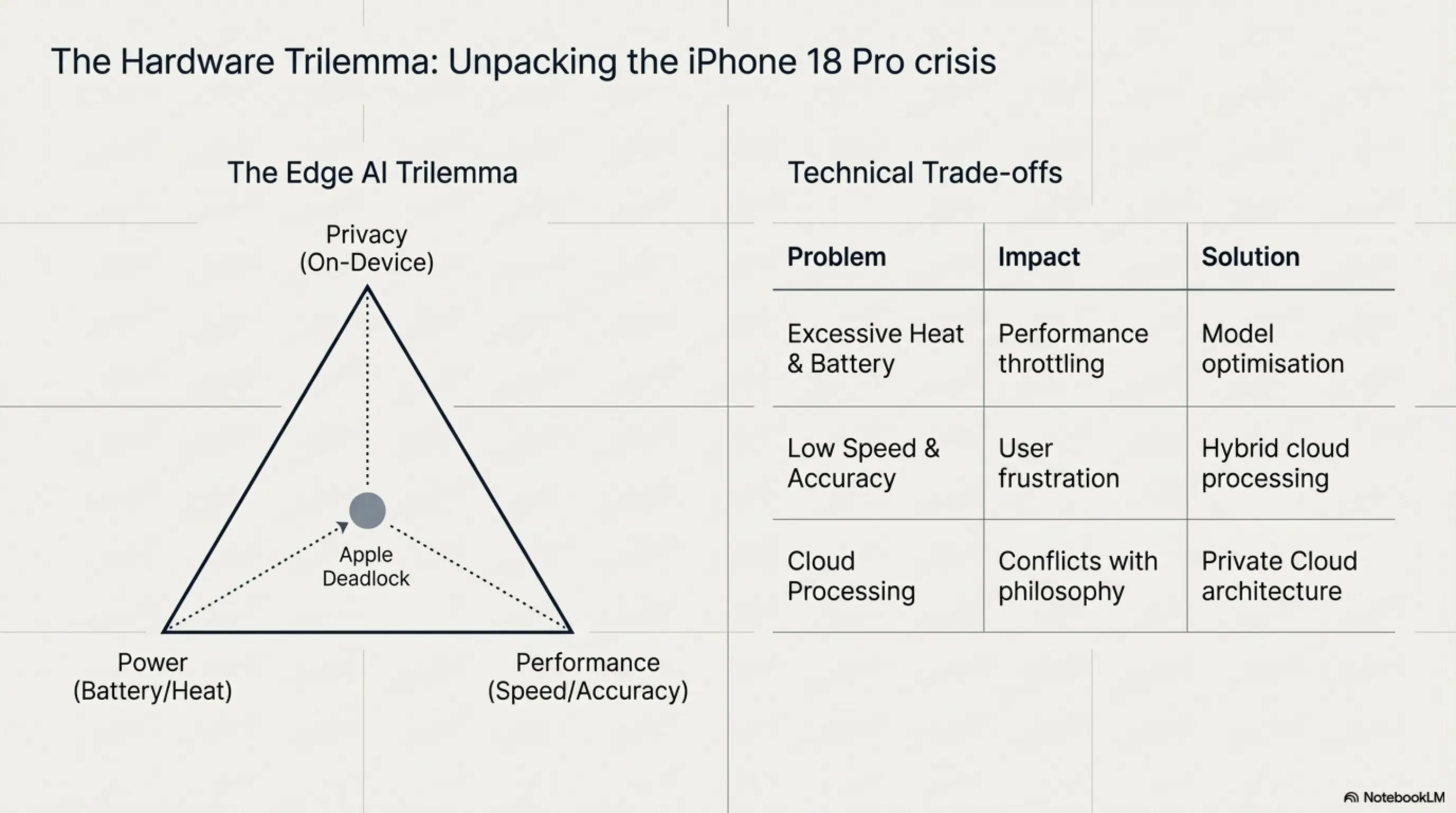

Where did the problem start? Apple wanted to equip the new generation Siri with advanced AI capabilities — similar to ChatGPT or Google Bard. But unlike competitors who use cloud computing, Apple insisted everything be done on-device to preserve privacy. This means running a powerful AI model on the A18 Pro chip — technically very complex.

What are the technical problems? First, battery consumption: the new AI model consumes so much energy that iPhone battery drains in a few hours. Second, excessive heat: AI processing makes the phone very hot and causes performance throttling. Third, low speed: Siri responses are so slow that users get frustrated. And fourth, low accuracy: new Siri has performed poorly in internal tests and often gives wrong answers.

"We prefer to release an excellent product late than a flawed product on time. Quality is always Apple's first priority." — Tim Cook, CEO Apple

How serious are these problems? Internal Apple sources say the Siri team is "in crisis mode" and may need to change the entire AI architecture. One option is to move part of the processing to the cloud — but this conflicts with Apple's privacy philosophy. Another option is to use a simpler AI model — but this means Siri can't compete with rivals.

What's the market impact? If iPhone 18 Pro is released late, Apple may lose a significant part of the fall market. Google and Samsung, releasing Pixel 11 and Galaxy S27 with powerful AI agents, can take advantage of this opportunity. Apple shareholders are also worried — company stock dropped 3% today.

| Problem | Impact | Possible Solution |

|---|---|---|

| Battery Consumption | Battery drains in hours | Model optimization |

| Excessive Heat | Performance throttling | Improved cooling system |

| Low Speed | Poor user experience | Hybrid cloud processing |

| Low Accuracy | Wrong answers | Model retraining |

| Privacy | Conflicts with Apple philosophy | Private cloud computing |

Can Apple solve these problems? History shows Apple usually finds creative solutions. They might build a "Private Cloud" that processes data encrypted. Or they might design a hybrid AI model that handles simple tasks on-device and complex tasks in the cloud. But any solution takes time — and time is what Apple lacks.

This story shows that even tech giants struggle with AI challenges. As we saw in the Agentic AI Revolution article, building real AI isn't easy — even for a company like Apple with unlimited resources. But maybe this delay is good for Apple — better to release an excellent product late than a flawed product on time.

📊 Summary: iPhone 18 Pro Siri Delay

⚠️ Problem: Serious technical issues in new Siri

🔋 Battery: High consumption + excessive heat

🐌 Performance: Low speed + poor accuracy

🔒 Privacy: Conflicts with on-device processing

📉 Impact: 3% stock drop + competitor opportunity

🌟 Conclusion: A Turbulent Technology Night

The night of March 11, 2026 showed that the technology industry is rapidly evolving. From GPT-5.4 with 1 million tokens to Apple's Siri problems, from Google's advances in multimodal AI to OpenAI's focus on security — all show that AI is maturing.

Main message: The future belongs to those who build not just powerful AI, but secure, reliable, and practical AI.

📝 Final Note

This article is based on news and analysis published on March 11, 2026. Primary sources include official announcements from OpenAI, Google, Microsoft, Adobe, and Apple, as well as reports from TechCrunch, The Next Web, Vice, and Gadgets360. All information has been rewritten to comply with licensing restrictions.

For the latest technology news, read the Agentic AI Revolution article, Xiaomi Miclaw analysis, and Digital Spirits Awakening.

Supplementary Image Gallery: Tekin Night March 11: From GPT-5.4 with 1M Tokens to Apple Siri Delays