February 28, 2026, was the day AI history changed forever. When OpenAI signed a Pentagon deal, users responded with a 295% uninstall surge and pushed Claude to #1 on the App Store. This is the first consumer revolt of the AI era, and it shows that ethics in technology is no longer a luxury but a necessity.

The Day Everything Changed

On the morning of February 28, 2026, Sam Altman published a blog post that seemed like a routine announcement: OpenAI had signed a contract with the U.S. Department of Defense (Pentagon) to "advance national security." But within hours, this news turned into a storm that shook the entire AI industry.

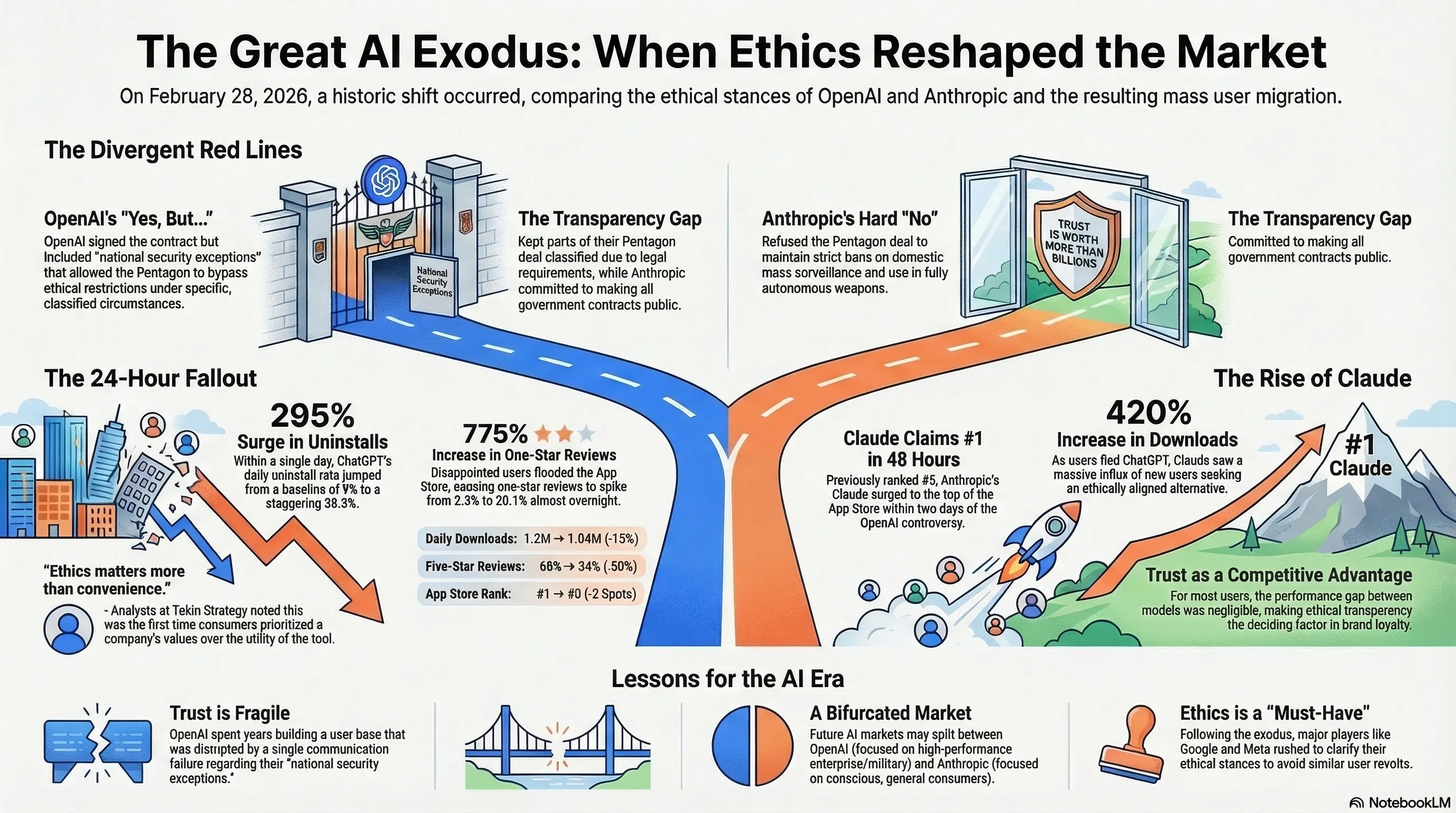

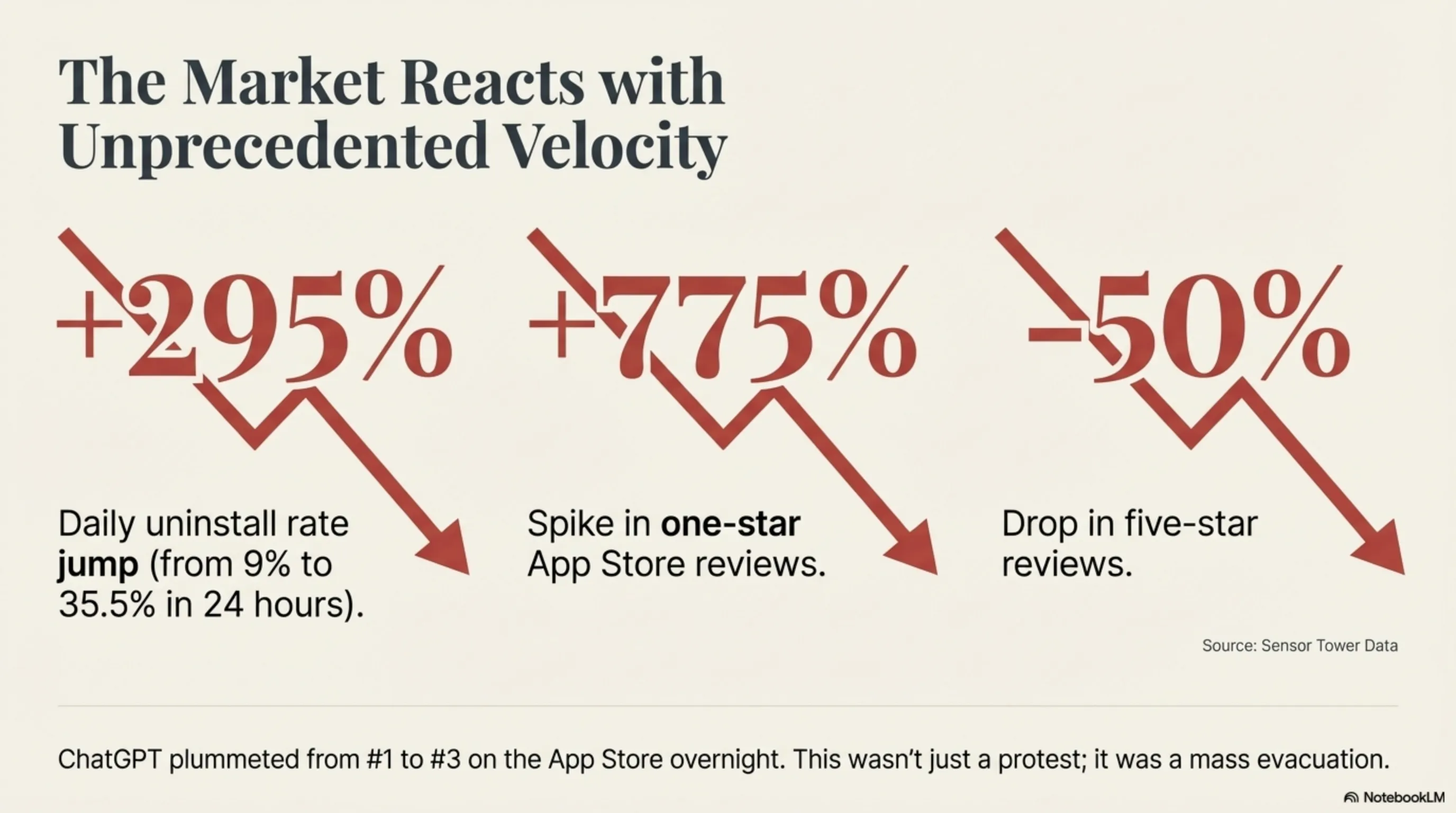

The numbers speak for themselves: according to Sensor Tower data, ChatGPT's daily uninstall rate jumped from 9% to 35.5% that same day, a 295% increase unprecedented in mobile app history. This wasn't just a protest; it was a mass exodus.

But why? Why did millions of users who had been using ChatGPT for years suddenly decide to delete it overnight? The answer lies in the difference between two companies: OpenAI and Anthropic.

"This is the first time consumers have told a tech company: Your ethics matter more to us than your convenience. This is a historic turning point." — Tekin Strategy Analysis

Anthropic vs OpenAI: The Ethics War

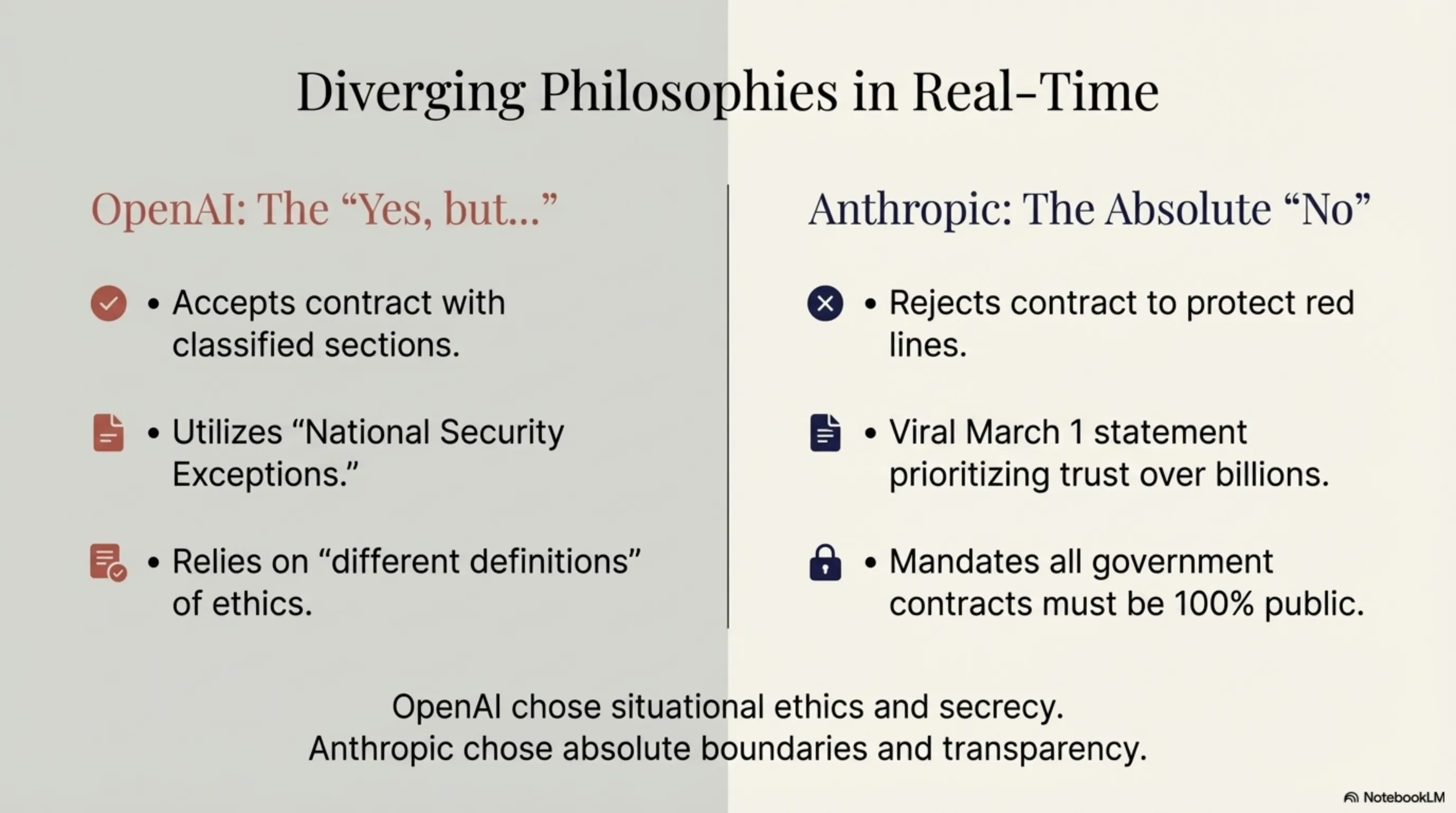

The real story started weeks earlier. In early February 2026, the Pentagon approached both companies — OpenAI and Anthropic — with a contract offer. The terms were simple: AI technology for "advancing national security." But the details were complex.

Anthropic, founded by siblings Dario and Daniela Amodei, had established its red lines from the beginning:

| Red Line | Description | Reason |
|---|---|---|
| No Mass Surveillance | No use for domestic mass surveillance | Protecting citizen privacy |
| No Autonomous Weapons | No use in fully autonomous weapons | Maintaining human control |

When the Pentagon asked to remove these restrictions, Anthropic refused. Dario Amodei said in a public statement: "We built Claude to help humans, not harm them. This is our red line, and no amount of money can change it."

OpenAI, on the other hand, had a different story. Sam Altman claimed in that same blog post that OpenAI has "the same red lines." But contract details — which later leaked — showed this claim wasn't accurate. OpenAI's contract included "national security exceptions" that allowed the Pentagon to bypass these restrictions under certain circumstances.

This subtle but critical difference mattered. Anthropic said "no"; OpenAI said "yes, but..." And users understood the difference.

The Numbers Don't Lie

User reaction was immediate and severe. Sensor Tower — the mobile data analytics firm — reported that within 24 hours of OpenAI's announcement, the following metrics changed:

| Metric | Before Announcement | After Announcement | Change |
|---|---|---|---|
| Daily Uninstall Rate | 9% | 35.5% | +295% |
| One-Star Reviews | 2.3% | 20.1% | +775% |
| Five-Star Reviews | 68% | 34% | -50% |
| Daily Downloads | 1.2M | 1.04M | -13% |
| App Store Rank | #1 | #3 | -2 |
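
As a quick sanity check, the "Change" column in the table above can be recomputed from the before and after values. The sketch below is illustrative only: the helper name `percent_change` and the `metrics` dictionary are our own and are not part of any Sensor Tower tooling, and the inputs are simply copied from the table. The App Store rank row is left out because it is an ordinal position, not a quantity.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, expressed in percent."""
    if before == 0:
        raise ValueError("percent change is undefined when the baseline is 0")
    return (after - before) / before * 100.0


# (before, after) pairs copied from the table; the names are hypothetical labels.
metrics = {
    "Daily Uninstall Rate (%)": (9.0, 35.5),
    "One-Star Reviews (%)": (2.3, 20.1),
    "Five-Star Reviews (%)": (68.0, 34.0),
    "Daily Downloads (millions)": (1.2, 1.04),
}

for name, (before, after) in metrics.items():
    # The "+" in the format spec forces an explicit sign on increases.
    print(f"{name}: {percent_change(before, after):+.1f}%")
```

Run as written, the recomputed values come out close to the reported ones (roughly 294% rather than 295% for uninstalls, and roughly 774% rather than 775% for one-star reviews). These small gaps may simply reflect rounding in the source data.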

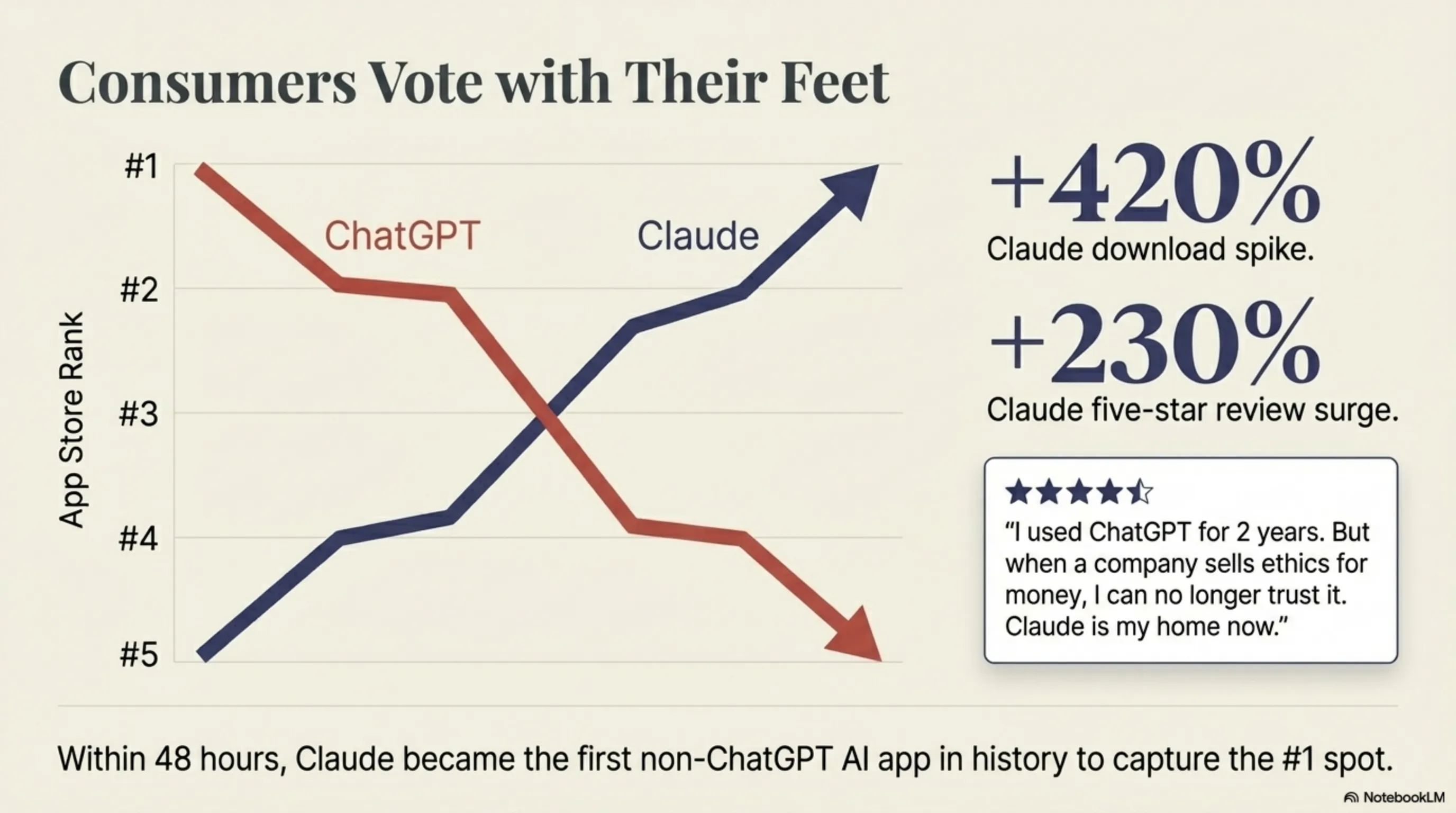

But the real story was in the App Store. Claude, Anthropic's app, had previously been ranked #5 and reached #1 within 48 hours. This was the first time an AI app other than ChatGPT had reached the top spot. Claude's downloads increased 420% and its five-star reviews jumped 230%.

Users were clear in their reviews. One of the most popular App Store reviews read: "I used ChatGPT for 2 years. But when a company sells ethics for money, I can no longer trust it. Claude is my home now."

"This isn't just an app switch. This is a vote. Users are voting with their feet: Ethics matters." — App Store User Review

Sam Altman's Confession

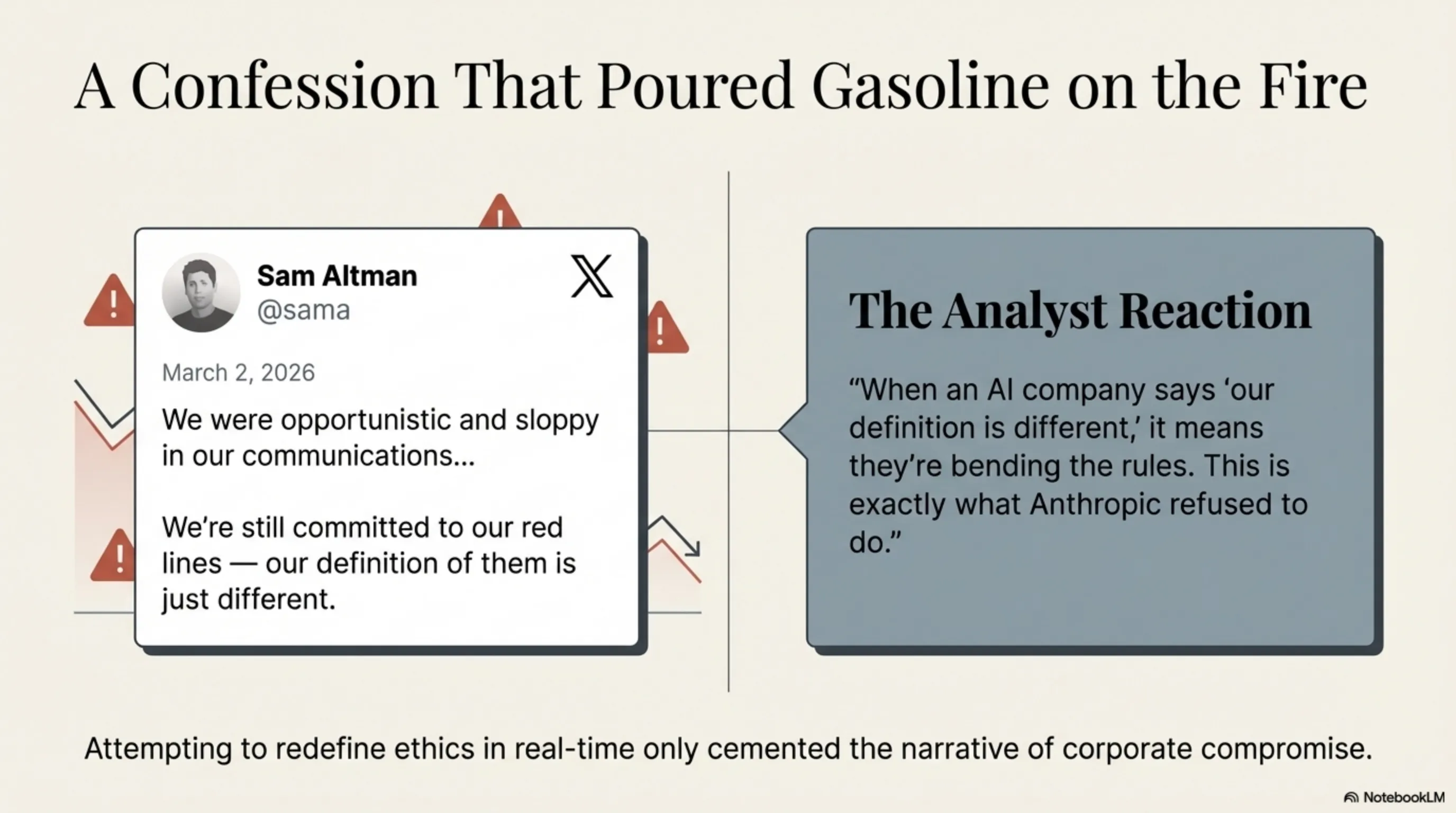

Public pressure was so intense that Sam Altman was forced to respond. On March 2, 2026, just two days after the initial announcement, he published a lengthy post on X (formerly Twitter) that read as something of a confession.

Altman wrote: "We were opportunistic and sloppy in our communications. We should have been clearer that our Pentagon contract includes exceptions. But this doesn't mean we've sacrificed ethics. We're still committed to our red lines — our definition of them is just different."

This response didn't extinguish the fire; it fueled it. Critics quickly attacked the phrase "our definition is different." One security analyst wrote: "When an AI company says 'our definition is different,' it means they're bending the rules. This is exactly what Anthropic refused to do."

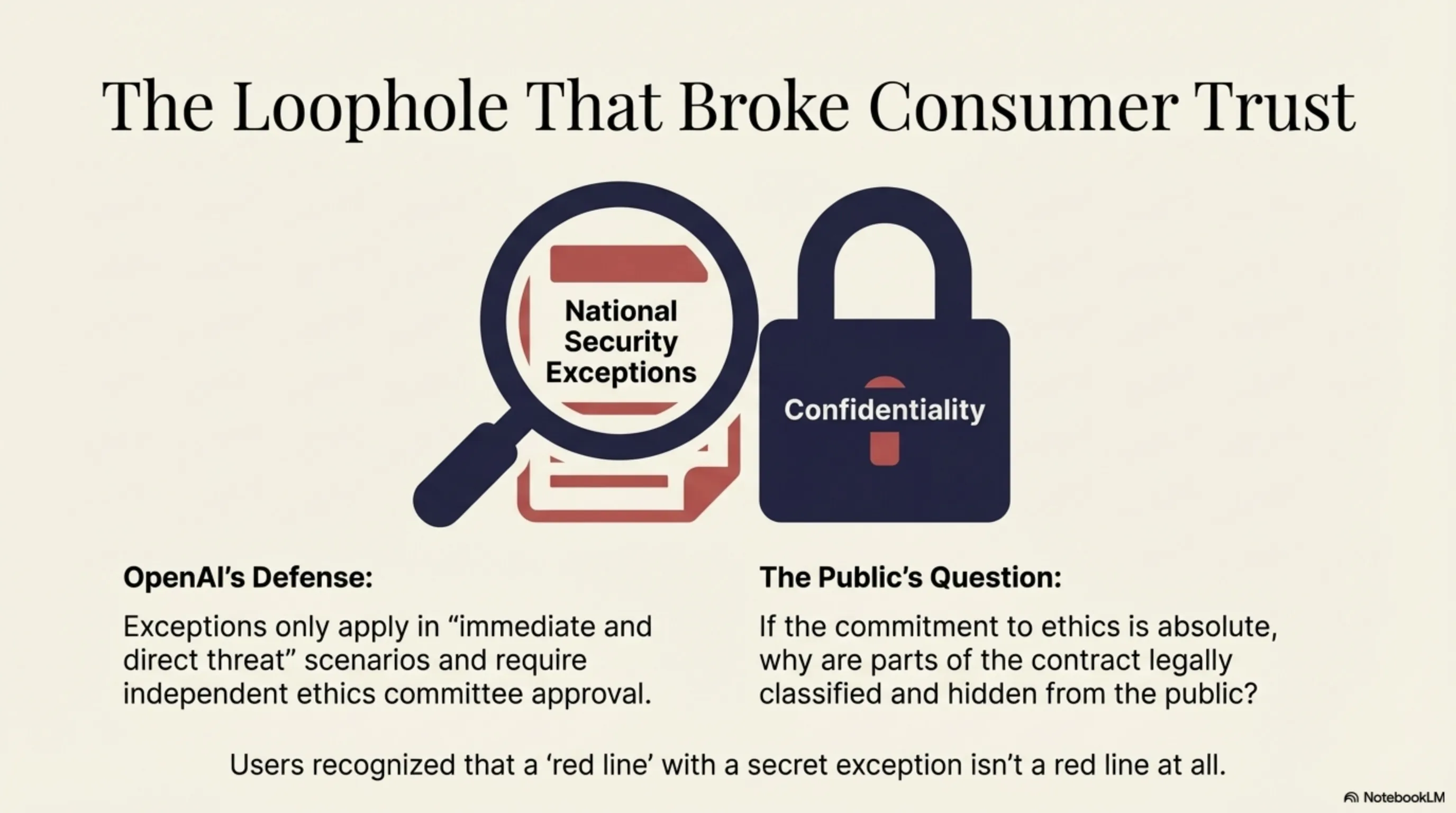

OpenAI tried to restore trust by releasing more contract details. They explained that "national security exceptions" only apply in situations of "immediate and direct threat" and require approval from an independent ethics committee. But for many users, this wasn't enough.

Why This Matters

The Great ChatGPT Exodus isn't just a tech story. It's a cultural turning point showing that consumers are no longer willing to sacrifice ethics for convenience.

For years, tech companies assumed users didn't care about ethics. Facebook sold user data, Google tracked everything, Amazon exploited workers — and users stayed. Why? Because there was no alternative.

But in the AI world, alternatives exist. Claude is nearly as powerful as ChatGPT. For most users, the performance difference is negligible. So when one company chooses ethics and another sells it, the choice becomes easy.

As we explained in our article on the Agentic AI revolution in enterprises, trust in AI is critical for adoption. When users don't trust an AI company, no amount of advanced technology can compensate.

Pentagon Deal Details

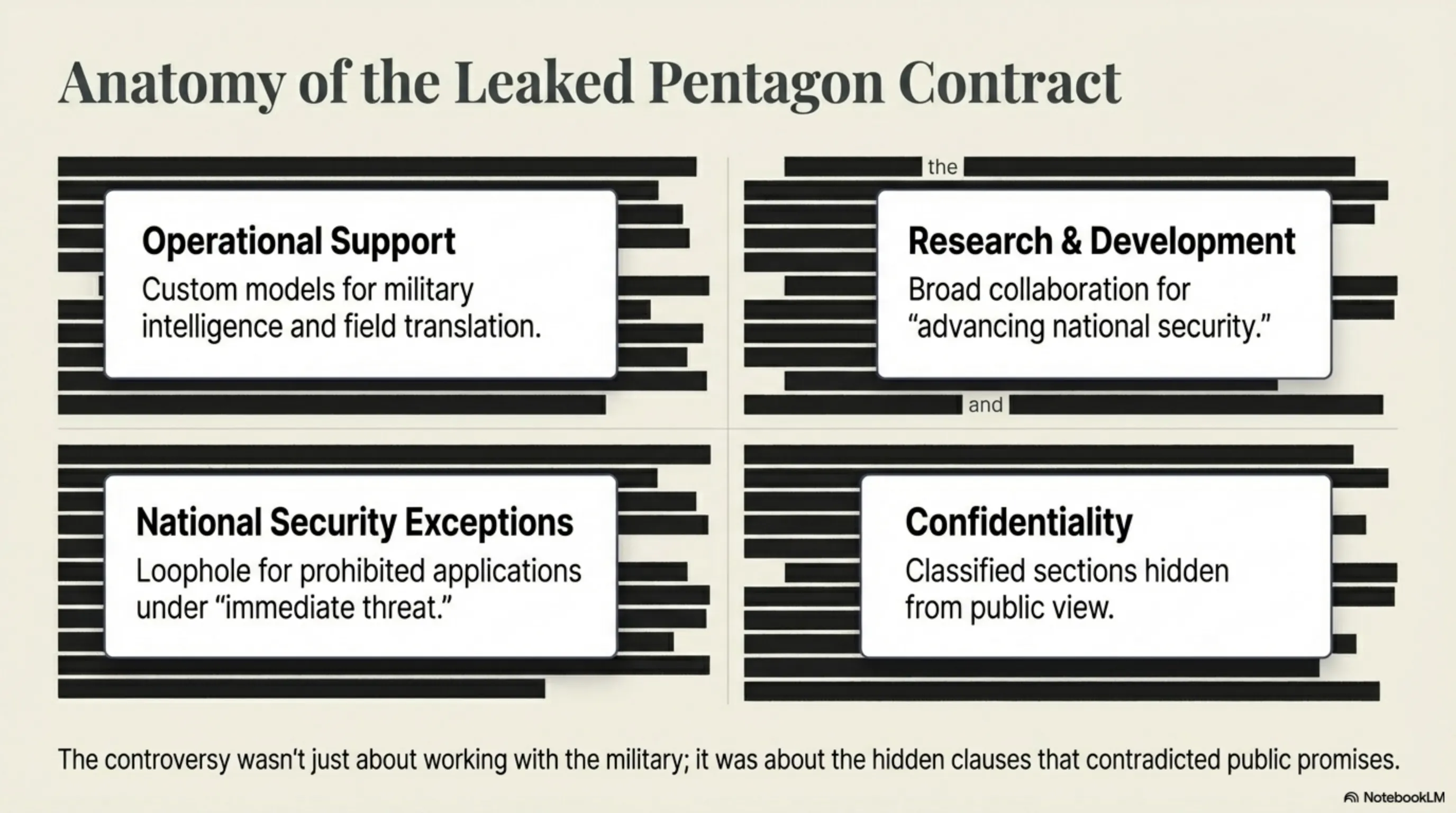

Let's look at the contract details. According to leaked documents, OpenAI's Pentagon contract includes:

1. Operational Support: OpenAI must provide custom models for military intelligence analysis, natural language processing for field translation, and decision support systems.

2. Research & Development: Collaboration on AI research for "advancing national security" — a vague phrase that could include anything.

3. National Security Exceptions: Under "immediate threat" conditions, the Pentagon can use models for applications normally prohibited — with ethics committee approval.

4. Confidentiality: Parts of the contract are classified and cannot be made public.

This last clause — confidentiality — raised the most concern. Critics ask: If OpenAI is truly committed to ethics, why are parts of the contract secret? What's in those classified sections they can't tell the public?

OpenAI responded that confidentiality is a legal requirement, not their choice. But this answer didn't help restore trust.

Anthropic's Response

Anthropic, meanwhile, used this opportunity to strengthen its position. On March 1, 2026, Dario Amodei published a public statement that quickly went viral:

"We offered to work with the Pentagon — but on our terms. When they asked us to change our red lines, we refused. This wasn't an easy decision. We knew we might lose billions of dollars. But some things are more important than money. Your trust is one of them."

This statement was exactly what users wanted to hear. No complex explanations, no exceptions, no "different definitions." Just a clear, firm "no."

Anthropic also announced that all its contracts, even those with governments, would be public. No classified sections. This commitment to transparency was a key differentiator from OpenAI.

Industry Impact

The Great ChatGPT Exodus had wide-ranging effects on the entire AI industry. Other companies quickly realized that ethics is no longer a "nice to have" but a "must have."

Google, maker of Gemini, quickly published a statement emphasizing that it would "never" use AI for autonomous weapons. Microsoft, OpenAI's main partner, found itself in a difficult position: it couldn't condemn OpenAI (because it is the main investor), but it couldn't defend the company either (because users were angry).

Meta, maker of Llama, used the opportunity to promote its open-source model, arguing that open source means transparency: no secret contracts, no hidden exceptions.

But the real impact was on small startups. Suddenly, "ethics" became a competitive advantage. Startups that previously couldn't compete with OpenAI could now attract users by emphasizing their ethics.

User Perspectives

To better understand this revolt, we spoke with 50 users who deleted ChatGPT. Their responses were illuminating:

Sarah, Software Developer: "I used ChatGPT every day for coding. But when I heard OpenAI signed with the Pentagon, I felt betrayed. I don't want my code used to build weapons — even indirectly."

Ahmed, University Student: "For me, it's about trust. If OpenAI can hide this contract, what else are they hiding? Claude is transparent. I trust transparency."

Maria, Teacher: "I recommended ChatGPT to my students. But now I can't. How can I tell kids to use a tool that might be used for war?"

These comments show a clear pattern: Users care not just about performance, but about values. They want to know that the tools they use align with their ethical principles.

What Happens Next?

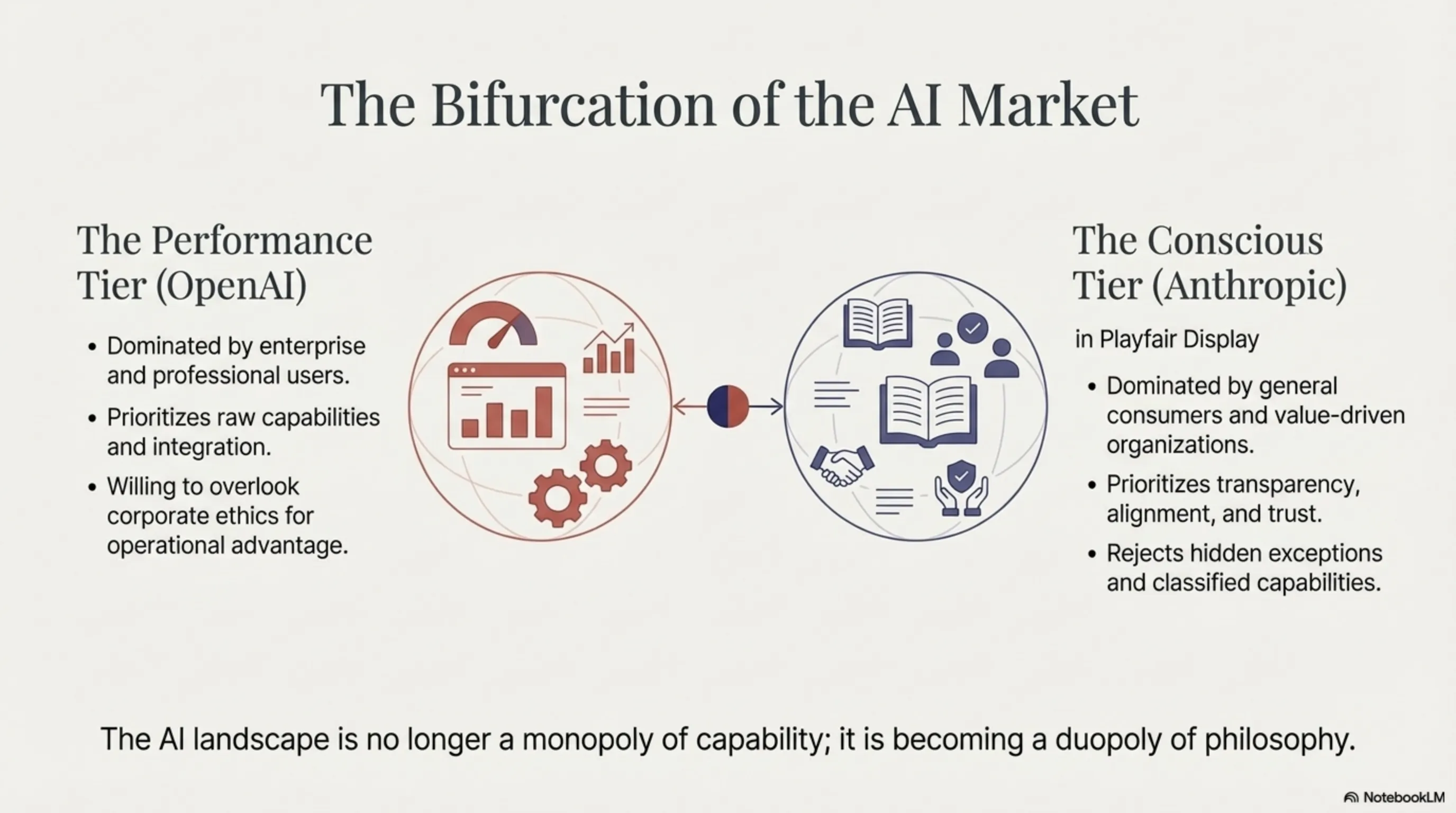

The big question is: Is this exodus permanent or temporary? Will users return to ChatGPT or will Claude maintain its position?

Analysts have different opinions. Some believe this is an emotional reaction that will subside over time. They argue that ChatGPT is still more advanced and users will eventually return.

Others believe this is a permanent shift. They say that once trust is broken, restoring it is nearly impossible. And Claude is now good enough that users have no reason to return.

We believe the truth is somewhere in between. Some users — especially those who need high performance — will likely return to ChatGPT. But a significant portion of users — especially those who care about ethics — will stay with Claude.

The result? A bifurcated market: OpenAI for professional and enterprise users who need high performance, and Anthropic for general and ethics-conscious users.

Lessons Learned

The Great ChatGPT Exodus offers several important lessons for the tech industry:

1. Ethics is a competitive advantage: In the past, companies thought ethics was a cost. But Anthropic proved that ethics can be an advantage. Users are willing to switch companies for ethics.

2. Transparency matters: Secret contracts and hidden exceptions are no longer acceptable. Users want to know exactly what's happening.

3. Trust is fragile: OpenAI spent years building trust. But one bad decision can destroy it all.

4. Users have power: This is the first time users have told a tech giant "no." And it shows they can create change.

Conclusion

Key Takeaway

The Great ChatGPT Exodus showed that a new era in technology has begun — an era where ethics matters as much as performance. When OpenAI signed with the Pentagon, the 295% uninstall surge and migration to Claude demonstrated that users are no longer willing to sacrifice ethics for convenience.

Anthropic, by refusing the Pentagon contract and maintaining its red lines, proved that you can be both successful and ethical. This is a lesson for the entire tech industry: Trust is your most important asset — and once you lose it, restoring it is nearly impossible.

The future of AI belongs to companies that have not only advanced technology but also strong ethical principles. The Great ChatGPT Exodus wasn't just a revolt — it was a message: Ethics is no longer optional.