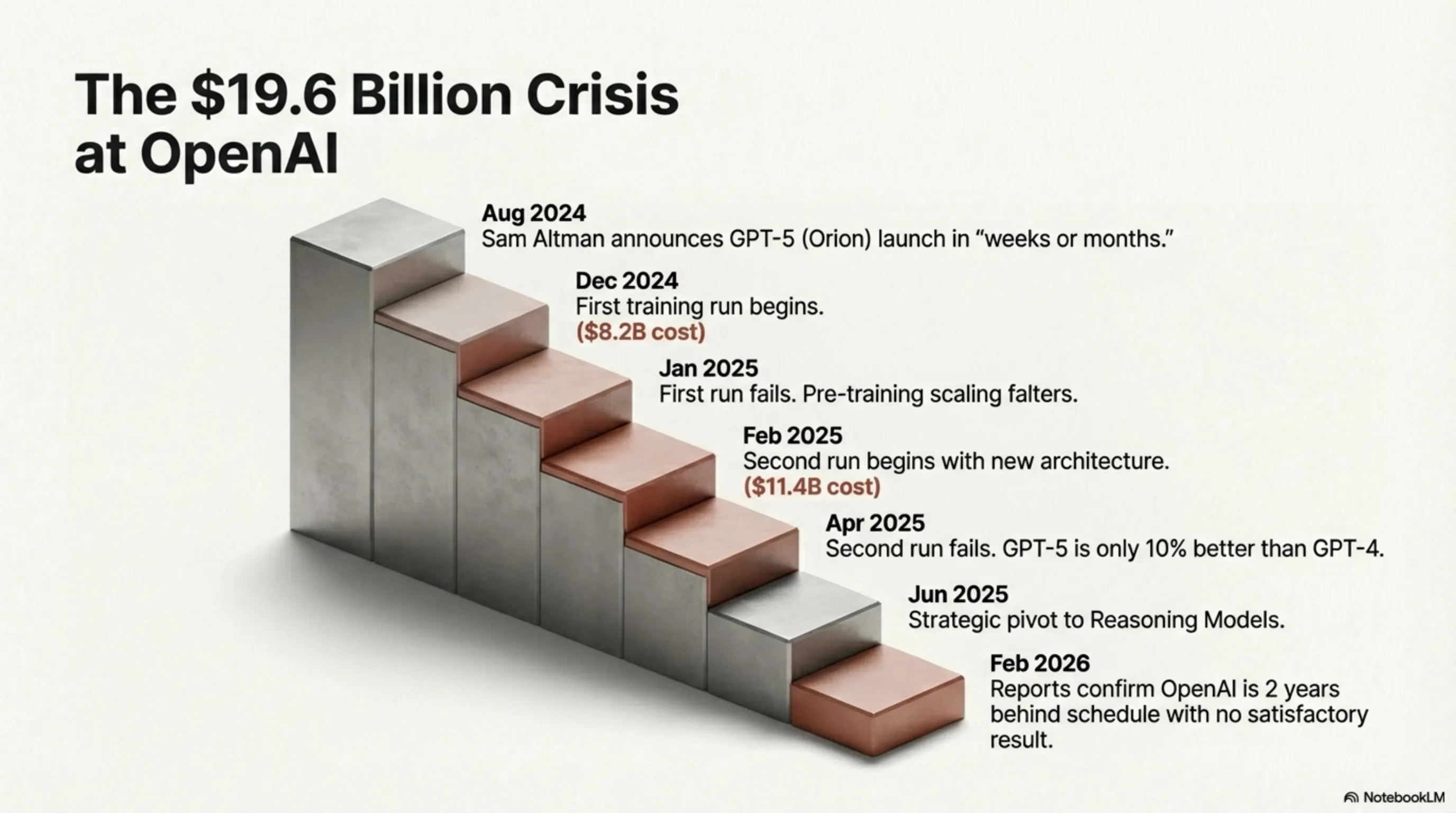

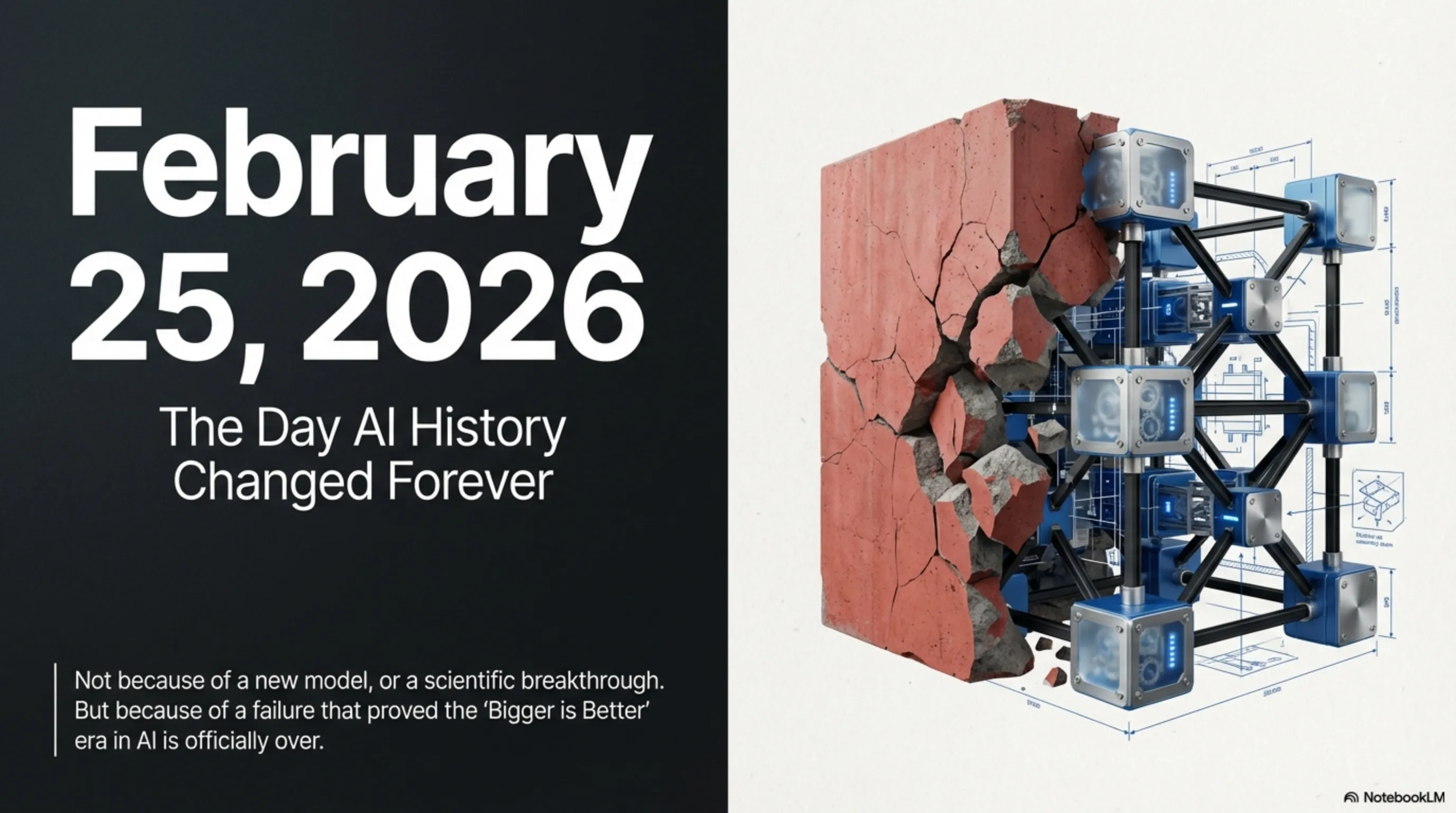

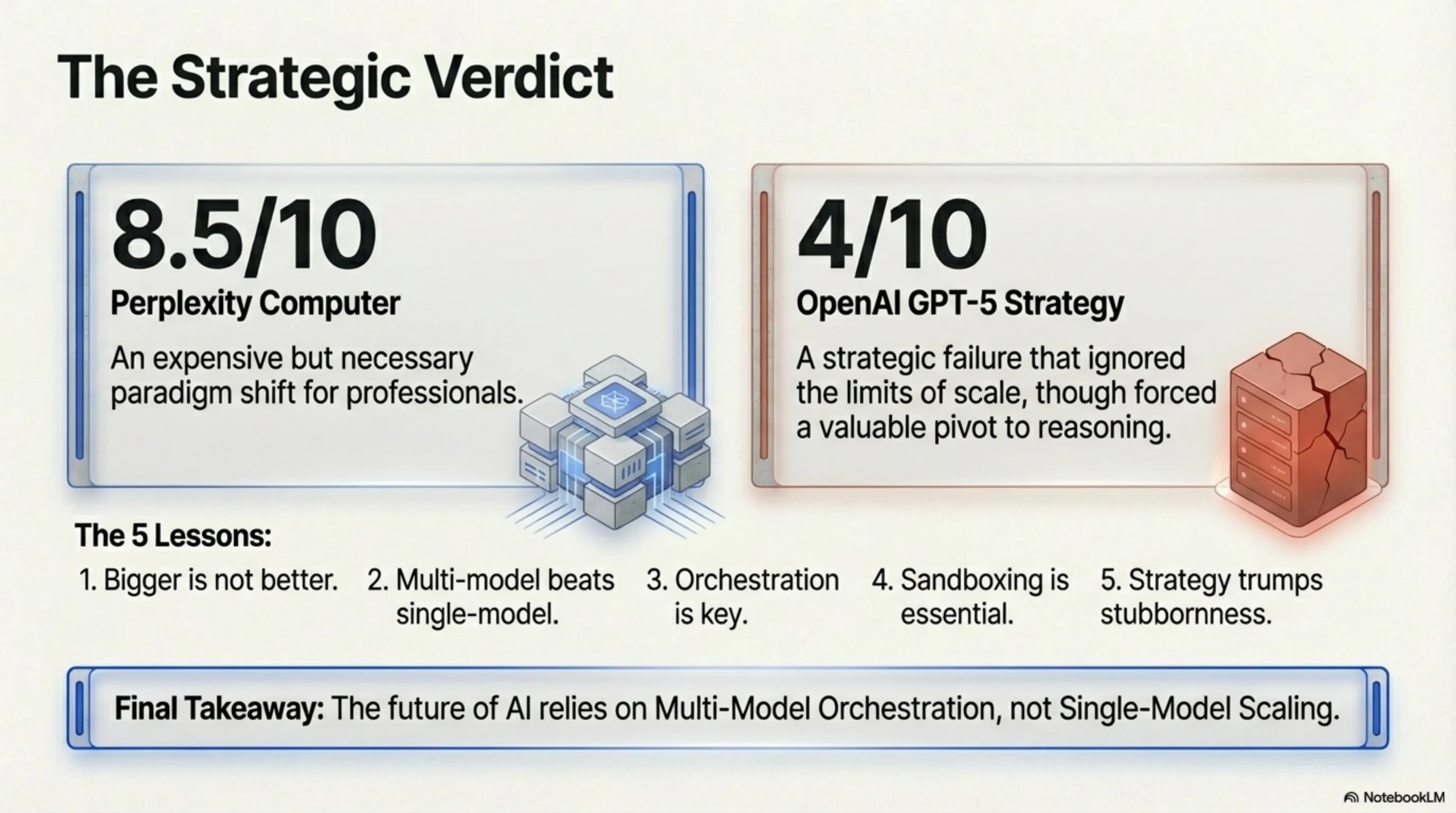

On February 25, 2026, two historic events occurred in the AI industry that forever changed the future of this technology. Perplexity AI launched its Computer system - a 19-model orchestrator that promises to be your digital employee for $200/month. Simultaneously, WSJ and Fortune reports revealed that OpenAI had failed with the GPT-5 project: two training runs costing a combined $19.6 billion, both unsuccessful.

This is a story of two completely opposing strategies. Perplexity chose the Multi-Model Orchestration approach: 19 specialized models coordinated by Claude Opus 4.6 as the central brain. Each model specializes in a specific domain - from Frontend programming with Claude 3.5 Sonnet to data analysis with Gemini 1.5 Pro and mathematical computations with Wolfram Alpha. This system can manage complex projects from zero to deployment: Research, Design, Code, Deploy, and Manage - all automatically.

One of Perplexity's most important innovations is the Sandbox environment, which learned from the OpenClaw disaster (November 2025). All AI-generated code runs in an isolated Docker environment with real-time monitoring and rollback capability. This means that even if the AI makes a mistake, your system stays safe.

Perplexity's pricing model is hybrid: $200/month for 100 hours of Compute Time, 500,000 input tokens, and 100,000 output tokens. After that, Per-Token Billing kicks in. This price seems high for casual users, but for professional developers who can save hours of time, it's reasonable.

On the other side of the story, OpenAI faced the GPT-5 crisis. The main problem was that Pre-training Scaling no longer works. When OpenAI increased GPT-5 parameters 10x over GPT-4 (from 1.7 trillion to 17 trillion), performance only improved 10% - not 100% or even 50%. Reasons? Low data quality (no more high-quality data left on the internet), Diminishing Returns in Scaling, and Overfitting.

Sam Altman admitted in an interview: "We thought we could just scale up. We were wrong. The era of pre-training scaling is over." OpenAI was forced to change strategy and focus on Reasoning Models (o1 and o3). But these models still can't replace GPT-5 - they're too slow (5-30 seconds per response) and expensive ($15-60 per 1M tokens).

The comparison with Gemini 3.1 Pro is also interesting. Google succeeded by combining Pre-training and Reasoning, accessing better data (YouTube, Gmail, Google Docs), and reasonable pricing ($20/month). This shows that a Hybrid approach is better than focusing solely on one strategy.

Analysis of these two events teaches us several important lessons. First, Bigger is NOT Always Better - the "bigger = better" era in AI is over. Second, Multi-Model Orchestration beats Single-Model Scaling - 19 specialized models are better than one giant general model. Third, Orchestration is as important as the models themselves - Claude Opus 4.6 as the Reasoning Engine plays a key role. Fourth, Sandbox is essential, not optional. And fifth, sometimes changing strategy is better than insisting on the wrong path.

This story has a strange similarity to the Nvidia Gaming Paradox we analyzed earlier. Nvidia abandoned gaming and focused on AI - the result was spectacular success. OpenAI abandoned pre-training and focused on reasoning - the result is still uncertain. Perplexity abandoned single-model and focused on multi-model - the result is initial success.

What is the future of Digital Employees? Various scenarios exist. In the optimistic scenario, by 2027 half of developers use Digital Employees and by 2030 this reaches 80%. In the pessimistic scenario, these tools are only useful for simple tasks and complex projects still need humans. The realistic scenario is probably somewhere in between: Digital Employees become standard tools, but humans still play key roles.

Important question: Will these tools make programmers obsolete? Short answer: No. Junior programmers may face pressure, but senior programmers who can design architecture remain valuable. The programmer's role shifts from "writing code" to "designing systems."

Limitations also exist. Perplexity Computer at $200/month isn't affordable for many, using 19 models can be confusing, and without internet you can't do anything. GPT-5 Reasoning Models also have their problems: low speed, high cost, and limited use cases.

Final conclusion: The future of AI is in Multi-Model Orchestration, not Single-Model Scaling. Perplexity was the first to understand this and proved it with initial success. OpenAI learned an expensive lesson with GPT-5's failure: sometimes being bigger isn't enough - you need to be smarter.

The Digital Employee War: When 19 Models Beat a $19.6B Single Model

Perplexity Computer: The Digital Employee That Can Do Everything

What Is It and Why Does It Matter?

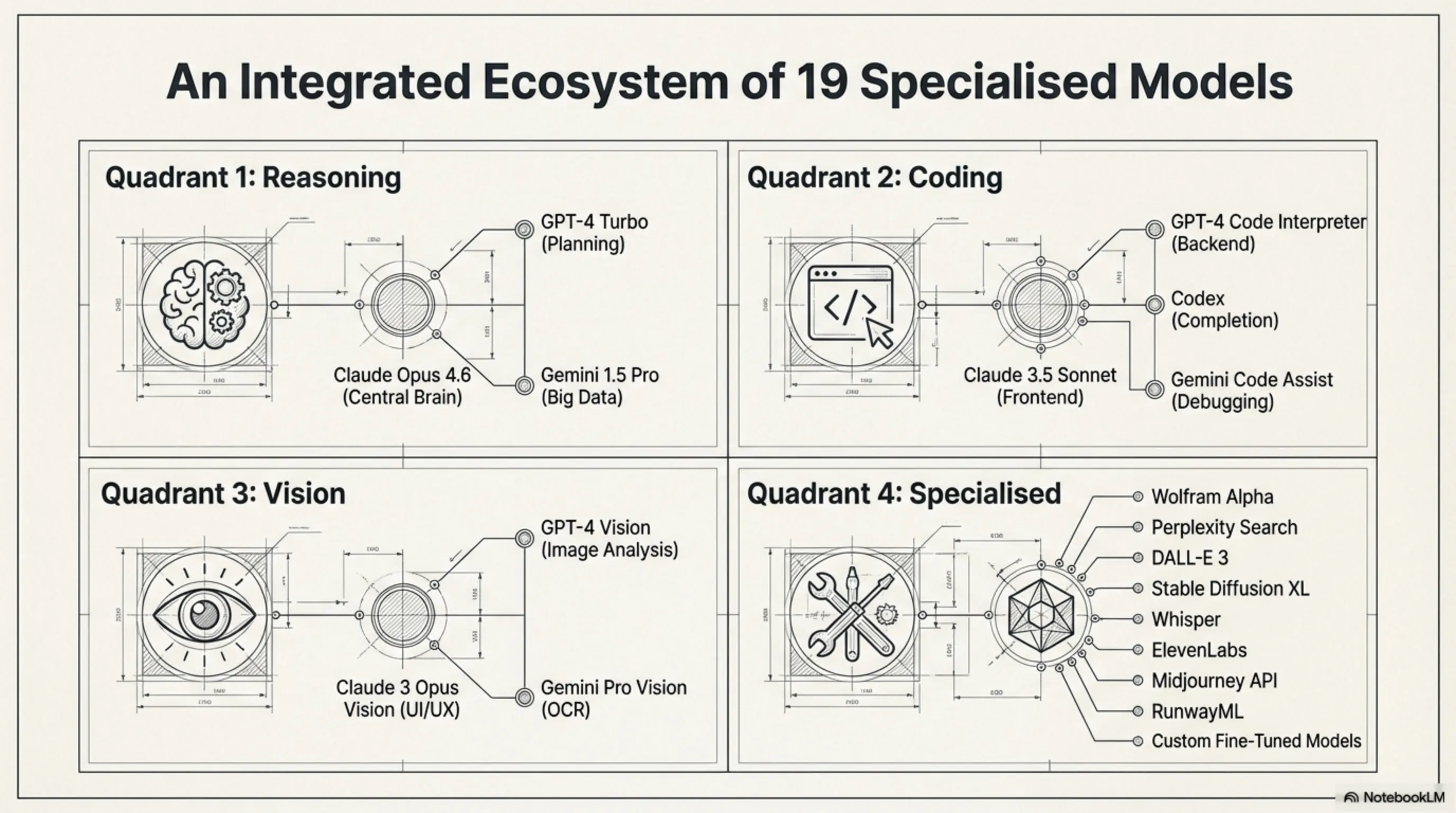

Perplexity Computer is not an AI model - it's a system. That's the fundamental difference. While OpenAI tries to build one giant model that does everything, Perplexity takes a different approach: why one model when you can have 19 specialized ones?

Announced on February 25, 2026, this system promises to:

- Manage projects from zero to deployment
- Research → Design → Code → Deploy → Manage
- Without human intervention (in most cases)
- For $200/month (Max subscribers only)

But how does it actually work?

The 19-Model Architecture: Claude Opus 4.6 as the Central Brain

The heart of Perplexity Computer is a Reasoning Engine built on Claude Opus 4.6. This Anthropic-developed model handles key decisions:

**1. Task Decomposition**

When you give a complex request (e.g., "build an e-commerce website for selling books"), Claude Opus 4.6 breaks it into subtasks:

- UI/UX design
- Frontend code
- Backend code
- Database setup
- Testing & debugging
- Deployment

**2. Model Selection**

For each subtask, it selects the best model:

- UI design → GPT-4 Vision + Midjourney API
- Frontend code → Claude 3.5 Sonnet (React/Vue specialist)
- Backend code → GPT-4 Turbo (Python/Node.js specialist)
- Database → Gemini 1.5 Pro (SQL specialist)

**3. Orchestration**

Coordinates models to work together - like a real project manager.

The 19 AI Models: Who They Are and What They Do
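The decomposition and model-selection steps described for the Reasoning Engine can be sketched as a simple routing table. This is a minimal illustration, not Perplexity's actual implementation: the names `ROUTES`, `FALLBACK`, `Subtask`, `decompose`, `select_models`, and `plan` are hypothetical, the fixed subtask plan stands in for what the reasoning model would produce, and sending unrouted subtasks to Claude Opus 4.6 is an assumption.

```python
from dataclasses import dataclass

# Hypothetical routing table, filled from the model assignments listed in this article.
ROUTES = {
    "ui_design": ["GPT-4 Vision", "Midjourney API"],
    "frontend": ["Claude 3.5 Sonnet"],
    "backend": ["GPT-4 Turbo"],
    "database": ["Gemini 1.5 Pro"],
}

# Assumption: subtasks with no explicit route fall back to the central model.
FALLBACK = ["Claude Opus 4.6"]


@dataclass
class Subtask:
    kind: str
    description: str


def decompose(request: str) -> list[Subtask]:
    """Stand-in for the reasoning engine: returns the same fixed plan for any request."""
    kinds = ["ui_design", "frontend", "backend", "database", "testing", "deployment"]
    return [Subtask(kind, f"{kind} for: {request}") for kind in kinds]


def select_models(task: Subtask) -> list[str]:
    """Look up the models for a subtask, using the fallback when no route exists."""
    return ROUTES.get(task.kind, FALLBACK)


def plan(request: str) -> list[tuple[str, list[str]]]:
    """Pair each subtask kind with the models chosen for it."""
    return [(task.kind, select_models(task)) for task in decompose(request)]


if __name__ == "__main__":
    for kind, models in plan("build an e-commerce website for selling books"):
        print(f"{kind:12} -> {', '.join(models)}")
```

A lookup table keeps the example readable; a real orchestrator would presumably choose models dynamically and handle dependencies between subtasks.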

Perplexity Computer uses 19 different models, each specialized in one domain:

**Reasoning Models:**

1. Claude Opus 4.6 - Central brain, key decisions
2. GPT-4 Turbo - Complex reasoning, planning
3. Gemini 1.5 Pro - Big data analysis

**Coding Models:**

4. Claude 3.5 Sonnet - Frontend (React, Vue, Angular)
5. GPT-4 Code Interpreter - Backend (Python, Node.js)
6. Codex (GitHub Copilot) - Code completion
7. Gemini Code Assist - Debugging & refactoring

**Vision Models:**

8. GPT-4 Vision - Image analysis
9. Claude 3 Opus Vision - UI/UX design
10. Gemini Pro Vision - OCR & document analysis

**Specialized Models:**

11. Wolfram Alpha API - Mathematical computations
12. Perplexity Search - Real-time web search
13. DALL-E 3 - Image generation
14. Stable Diffusion XL - Image generation (offline)
15. Whisper - Speech-to-text
16. ElevenLabs - Text-to-speech
17. Midjourney API - Graphic design
18. RunwayML - Video editing
19. Custom Fine-tuned Models - Perplexity's proprietary models

Sandboxed Environment: Learning from the OpenClaw Disaster
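The article describes the sandbox only at a high level: AI-generated code runs in an isolated Docker environment. As a rough sketch of what that isolation could look like with standard `docker run` flags, the helper below builds a locked-down command line. The function name `sandbox_command`, the resource limits, and the mount layout are assumptions chosen for illustration; the monitoring and rollback features mentioned in the article are not shown.

```python
import shlex


def sandbox_command(image: str, workdir: str, script: str) -> list[str]:
    """Build a `docker run` command that isolates untrusted generated code."""
    return [
        "docker", "run",
        "--rm",                        # delete the container when it exits
        "--network", "none",           # no network access from inside the container
        "--read-only",                 # root filesystem is read-only
        "--tmpfs", "/tmp",             # writable scratch space held in memory
        "--memory", "512m",            # memory cap (illustrative value)
        "--cpus", "1",                 # CPU cap (illustrative value)
        "--pids-limit", "128",         # limit the number of processes
        "-v", f"{workdir}:/work:ro",   # mount the project directory read-only
        "-w", "/work",                 # run from the mounted directory
        image,
        "python", script,
    ]


if __name__ == "__main__":
    cmd = sandbox_command("python:3.12-slim", "/tmp/project", "main.py")
    print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run` with a `timeout`. Note that a timeout there stops the Docker client process, so a production setup would also need to stop the container itself.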

Pricing: $200/Month + Per-Token Billing

Hybrid Pricing Model

Perplexity Computer launches with a new pricing model combining Subscription and Pay-as-you-go:

**Base: $200/month (Perplexity Max)**

Includes:

- Access to Perplexity Computer
- 100 hours Compute Time
- 500,000 input tokens
- 100,000 output tokens
- 5 concurrent projects
- 100GB storage

**Additional Costs (Per-Token):**

- Claude Opus 4.6: $15 per 1M input, $75 per 1M output
- GPT-4 Turbo: $10 per 1M input, $30 per 1M output
- Gemini 1.5 Pro: $7 per 1M input, $21 per 1M output
- Other models: $2-$5 per 1M tokens

**Additional Compute Time:**

- $2 per hour after 100 hours

Competitor Comparison

| Service | Base Price | Limit | Models |
|---|---|---|---|
| Perplexity Computer | $200/mo | 100 hours | 19 models |
| Claude Computer Use | $20/mo | Unlimited | Claude only |
| ChatGPT Plus | $20/mo | Unlimited | GPT-4 only |
| Gemini Advanced | $20/mo | Unlimited | Gemini only |
| GitHub Copilot | $10/mo | Unlimited | Codex only |
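To see how the $200/month base and the overage rates listed for Perplexity Computer combine, here is a small estimate of one month's bill. It is a sketch under stated assumptions: the article does not say how the included token allowance is split across models, so this version bills all usage against a single model's rate, and the function name `monthly_cost` is hypothetical.

```python
BASE_USD = 200.0             # Perplexity Max subscription
INCLUDED_HOURS = 100         # compute hours included in the base price
INCLUDED_INPUT = 500_000     # input tokens included
INCLUDED_OUTPUT = 100_000    # output tokens included
EXTRA_HOUR_USD = 2.0         # price per compute hour beyond the allowance

# USD per 1M tokens as (input, output), taken from the rates quoted in this article.
RATES = {
    "Claude Opus 4.6": (15.0, 75.0),
    "GPT-4 Turbo": (10.0, 30.0),
    "Gemini 1.5 Pro": (7.0, 21.0),
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int, hours: float) -> float:
    """Estimate a monthly bill, assuming all token usage is billed at one model's rate."""
    in_rate, out_rate = RATES[model]
    extra_in = max(0, input_tokens - INCLUDED_INPUT)
    extra_out = max(0, output_tokens - INCLUDED_OUTPUT)
    extra_hours = max(0.0, hours - INCLUDED_HOURS)
    return (
        BASE_USD
        + extra_in / 1_000_000 * in_rate
        + extra_out / 1_000_000 * out_rate
        + extra_hours * EXTRA_HOUR_USD
    )


if __name__ == "__main__":
    # 2.5M input and 1.1M output tokens on Claude Opus 4.6, with 120 compute hours:
    # 200 base + 30 (2.0M extra input) + 75 (1.0M extra output) + 40 (20 extra hours)
    print(monthly_cost("Claude Opus 4.6", 2_500_000, 1_100_000, 120))  # 345.0
```

Under these assumptions, a heavy month on the most expensive model costs well above the base price, which is worth weighing against the flat $20/month plans in the table.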

Real-World Use Cases: Perplexity Computer in Action

Use Case 1: Building a Complete Web Application

**User Request:** "Build a Todo List web app with React and Node.js that syncs with Google Calendar."

**Perplexity Computer Process:**

**Stage 1: Planning (5 minutes)**

- Claude Opus 4.6 divides project into 8 subtasks
- Designs overall architecture
- Selects tech stack: React + Node.js + MongoDB + Google Calendar API

**Stage 2: Frontend Development (20 minutes)**

- Claude 3.5 Sonnet writes React code
- GPT-4 Vision optimizes UI design
- Codex handles code completion

**Stage 3: Backend Development (15 minutes)**

- GPT-4 Code Interpreter writes Node.js APIs
- Gemini Code Assist sets up MongoDB database
- Claude Opus 4.6 integrates Google Calendar API

**Stage 4: Testing & Debugging (10 minutes)**

- Gemini Code Assist finds and fixes bugs
- GPT-4 Turbo writes unit tests

**Stage 5: Deployment (5 minutes)**

- Claude Opus 4.6 deploys project to Vercel

**Result:** A complete web application in 55 minutes, without writing a single line of code!

Use Case 2: Data Analysis and Dashboard Creation

**User Request:** "Analyze the last 6 months of sales CSV and build an interactive dashboard."

**Process:**

- Gemini 1.5 Pro analyzes CSV file (100,000 rows)
- Wolfram Alpha performs statistical calculations
- GPT-4 Vision designs charts
- Claude 3.5 Sonnet builds dashboard with React and Chart.js

**Time:** 30 minutes

Use Case 3: Creating a Marketing Video

**User Request:** "Create a 60-second video to introduce our new product."

**Process:**

- Claude Opus 4.6 writes script
- DALL-E 3 generates images
- RunwayML edits video
- ElevenLabs generates voiceover

**Time:** 45 minutes

Comparison with Gemini 3.1 Pro: Two Different Approaches

Technical Comparison

| Feature | Perplexity Computer | Gemini 3.1 Pro |
|---|---|---|
| Architecture | Multi-Model (19) | Single-Model |
| Reasoning Engine | Claude Opus 4.6 | Gemini 3.1 Pro |
| Context Window | 2M tokens (combined) | 2M tokens |
| Price | $200/mo + per-token | $20/mo (Gemini Advanced) |
| Use Cases | Development, Design, Analysis | Conversation, Research, Coding |
| Sandbox | ✅ Yes | ❌ No |
| Real-time Search | ✅ Yes (Perplexity Search) | ✅ Yes (Google Search) |

Which Is Better?

**Perplexity Computer is better for:**

- ✅ Complex multi-stage projects
- ✅ Development and deployment
- ✅ Tasks requiring multiple specializations

**Gemini 3.1 Pro is better for:**

- ✅ Natural conversations
- ✅ Research and analysis
- ✅ Casual users (lower price)

Conclusion: They're not competitors - they're complementary.

The GPT-5 Crisis: Why OpenAI Failed

Now let's look at the other side of the story: OpenAI's failure to build GPT-5.

Timeline of Failure

- **August 2024:** Sam Altman announces GPT-5 (Orion) will launch in "weeks or months."
- **December 2024:** First training run begins. Cost: $8.2 billion.
- **January 2025:** First training run fails. Problem: Pre-training scaling no longer works.
- **February 2025:** Second training run begins with new architecture. Cost: $11.4 billion.
- **April 2025:** Second training run also fails. Result: GPT-5 only 10% better than GPT-4.
- **June 2025:** OpenAI changes strategy: focus on Reasoning Models instead of pre-training.
- **February 2026:** WSJ and Fortune reports reveal OpenAI is 2 years behind schedule.

**Total Cost:** $19.6 billion with no satisfactory result.

Why Did It Fail? The Pre-training Scaling Problem
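The figures quoted in this article (a 10x parameter increase, from 1.7 trillion to 17 trillion, for a 10% performance gain) can be turned into a rough scaling exponent. The sketch below assumes performance follows a simple power law in parameter count, `performance ∝ N ** alpha`. The article does not state this model, and treating "performance" as a single number is itself a simplification. The function names `scaling_exponent` and `params_multiplier_for` are hypothetical.

```python
import math


def scaling_exponent(param_ratio: float, perf_ratio: float) -> float:
    """Solve perf_ratio = param_ratio ** alpha for alpha."""
    return math.log(perf_ratio) / math.log(param_ratio)


def params_multiplier_for(target_perf_ratio: float, alpha: float) -> float:
    """How many times more parameters the power law needs to reach the target gain."""
    return target_perf_ratio ** (1.0 / alpha)


if __name__ == "__main__":
    # 10x parameters for a 1.10x performance ratio, as quoted in the article.
    alpha = scaling_exponent(10.0, 1.10)
    print(f"implied exponent alpha = {alpha:.4f}")

    # Under the same assumed power law, the multiplier needed to double performance.
    multiplier = params_multiplier_for(2.0, alpha)
    print(f"parameter multiplier to double performance = {multiplier:.3g}")
```

Under this assumption the implied exponent is about 0.04, so doubling performance by parameter count alone would take a multiplier in the tens of millions. This is one way to read the article's claim of diminishing returns.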

OpenAI's Strategy Shift: From Pre-training to Reasoning

Reasoning Models: o1, o3, and the Future

**OpenAI o1** (September 2024):

- OpenAI's first Reasoning model
- Uses Chain-of-Thought
- Excellent performance in math and coding
- But slow (10-30 seconds per response)

**OpenAI o3** (December 2024):

- Second-generation Reasoning
- Faster than o1 (5-10 seconds)
- Better performance on ARC-AGI benchmark

**Problem:** These models still can't replace GPT-5. Why?

- Too slow for daily use
- Only excellent at specific tasks
- High cost ($15-$60 per 1M tokens)

Comparison with Gemini 3.1 Pro: Why Did Google Succeed?

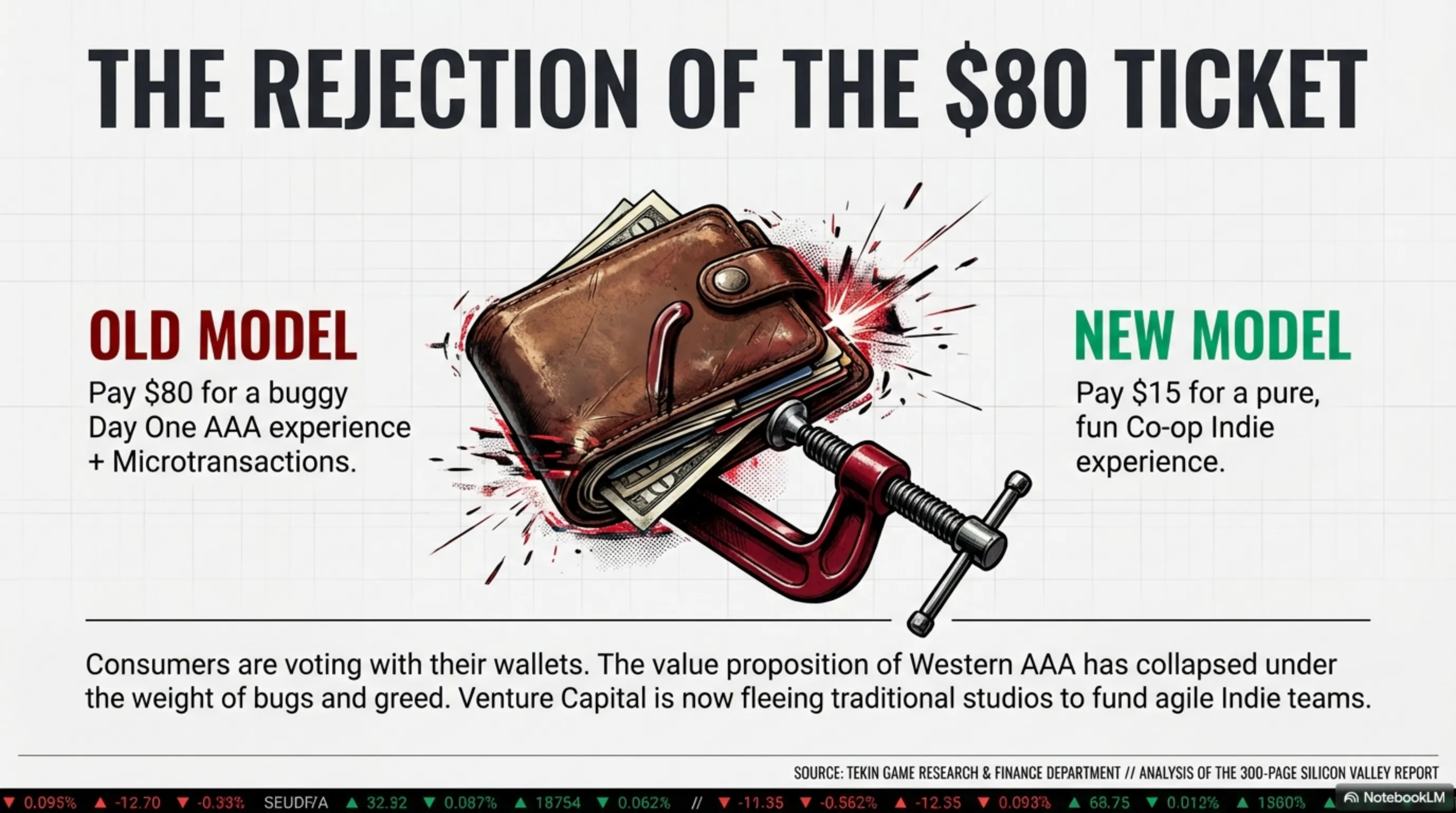

While OpenAI failed with GPT-5, Google succeeded with Gemini 3.1 Pro. Why?

**1. Hybrid Approach:** Google combined both pre-training and reasoning - not just one.

**2. Better Data:** Google has access to YouTube, Gmail, Google Docs - data sources OpenAI doesn't have.

**3. Agentic AI:** Gemini 3.1 Pro can work with external tools - like Perplexity Computer.

**4. Reasonable Pricing:** $20/month, versus $200/month for Perplexity Computer or the high per-token costs of o1/o3.

Analysis: Why Multi-Model Won

Lesson 1: Specialization Beats Generalization

Perplexity Computer proved that 19 specialized models beat one giant general model. Why?

- Each model is best at its job
- Lower cost (only run the model you need)
- More flexibility (can replace models)

Lesson 2: Orchestration Is Key

The hard part of a Multi-Model system is coordinating the models. Perplexity solved this by using Claude Opus 4.6 as the Reasoning Engine.

Lesson 3: Sandbox Is Essential

After the OpenClaw disaster, Perplexity showed that Sandbox isn't optional - it's essential.

Lesson 4: Pricing Must Be Reasonable

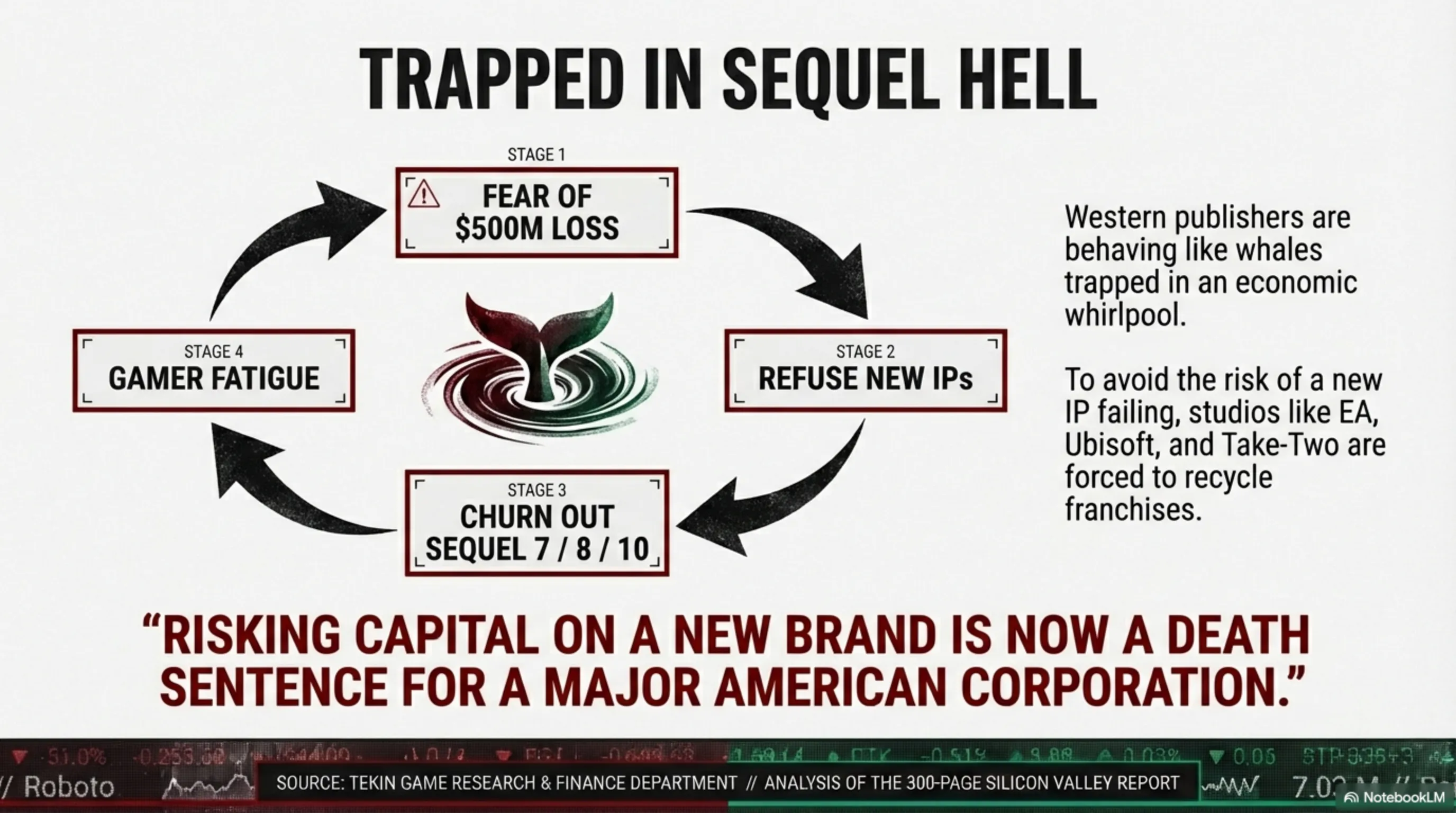

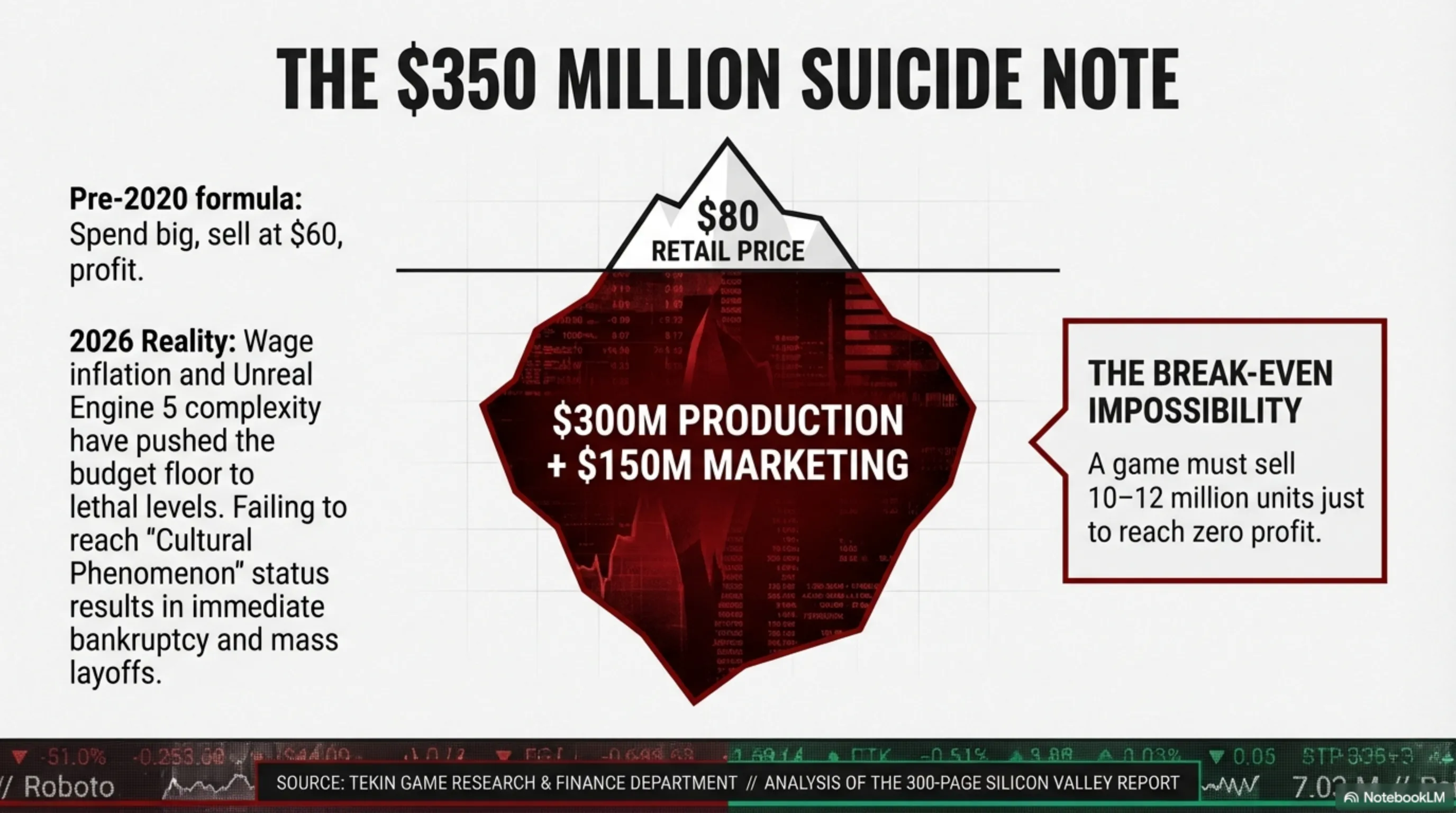

$200/month seems high, but for professional developers who can save hours, it's reasonable.

Comparison with Nvidia Gaming Paradox: Two Strategies, One Lesson

The Future of Digital Employees: Revolution or Hype?

Predictions for 2027-2030

**Optimistic Scenario:**

- By 2027: 50% of developers use Digital Employees
- By 2030: 80% of code written by AI
- Price: Drops to $50-$100/month

**Pessimistic Scenario:**

- Digital Employees only useful for simple tasks
- Complex projects still need humans
- High cost prevents widespread adoption

**Realistic Scenario:**

- Digital Employees become standard tools
- But humans still play key roles
- Focus shifts from "replacement" to "augmentation"

Threat to Programmers?

Important question: Will Perplexity Computer and similar tools make programmers obsolete?

**Short Answer:** No.

**Long Answer:**

- Junior programmers may face pressure
- But senior programmers who can design architecture remain valuable
- Programmer role shifts from "writing code" to "designing systems"

As we said in our Gemini 3.1 Pro article, AI is a tool to augment humans, not replace them.

Limitations and Weaknesses

Perplexity Computer Limitations

**1. High Price:** $200/month isn't affordable for many users.

**2. Complexity:** Using 19 different models can be confusing.

**3. Internet Dependency:** Without internet, you can't do anything.

**4. Compute Time Limit:** 100 hours/month may not be enough for large projects.

GPT-5 (Reasoning Models) Limitations

**1. Low Speed:** o1 and o3 are very slow (5-30 seconds).

**2. High Cost:** $15-$60 per 1M tokens.

**3. Limited Use Cases:** Only excellent for specific tasks (math, coding).

Conclusion: Lessons from the Digital Employee War