Tekin Night (Feb 17, 2026): Dissecting 6 strategic shifts in the tech world. From the Silicon Valley Red Alert on the chip crisis to Anthropic’s controversial Pentagon alliance and Amazon's 100 fully autonomous warehouses. A deep dive by the Tekin Army into the future of cybernetic governance and AI trust.

Table of Contents

- News 1: Silicon Valley Red Alert

- News 2: Pentagon vs Anthropic

- News 3: Boston Dynamics Robot Army

- News 4: Microsoft Agent Trust Signals

- News 5: Amazon Autonomous Logistics

- News 6: The Trust Crisis

News 1: Silicon Valley Red Alert

At the Davos 2026 summit, Elon Musk and Tim Cook issued a joint warning about the semiconductor chip crisis. This warning, following years of global chip shortages, highlights the tech industry's deep dependence on vulnerable supply chains.

Musk emphasized that Tesla's electric vehicle production and AI systems have been severely impacted by the shortage of advanced chips. Cook also spoke of Apple's challenges in sourcing A-series and M-series chips. Both leaders called for increased investment in domestic production and reduced dependence on Taiwan and South Korea.

This crisis has not only slowed production but increased manufacturing costs by up to 30%. Analysts predict this situation will continue until 2027 unless major investments are made in new fabrication facilities.

News 2: Pentagon vs Anthropic

In tonight's Tekin Army vigil, we dissect one of tech's most explosive confrontations: the Pentagon's threat to sever ties with Anthropic, maker of the Claude language model, over ethical restrictions on military AI use. First reported by Axios, this is more than a contract dispute; it is an earthquake rippling through the AI ecosystem. A deal worth up to $200 million (with unofficial reports citing figures as high as $500 million), signed last summer, is now on the brink of cancellation.

The Pentagon, exhausted from protracted negotiations, has laid all options on the table, including complete termination of the partnership. Why? Anthropic insists on stringent restrictions against fully autonomous weapons and mass domestic surveillance, while the Pentagon demands access for "all lawful purposes," including weapons development, intelligence gathering, and field operations. This clash traces back to the Claude jailbreak saga, where the model resisted potential misuse, cementing Anthropic's ideological stance.

Economically, this Pentagon threat could mark a turning point for Anthropic. Last summer's initial contract positioned Claude as the first AI model integrated into the Pentagon's classified networks. Cancellation would not only cut off direct revenue but also signal to investors that Anthropic, valued at over $18 billion, risks stalling its growth trajectory.

Defense officials admit replacing Claude is "challenging," as competitors like OpenAI lag in government applications. A cancellation would force the Pentagon to reinvest in alternative models, with ancillary costs potentially reaching hundreds of millions. For Anthropic, the immediate revenue loss would disrupt cash flow and could trigger engineering team reductions.

Now to the technical core: Claude, Anthropic's language model, is trained with Constitutional AI, a training method (rather than a model architecture) intended to build in deeper safety layers than competitors use. The model is guided by "constitutional" principles: it evaluates and corrects its own outputs against a predefined set of ethical rules. In recent jailbreak incidents, Claude resisted attempts to bypass its restrictions, for example by rejecting requests related to autonomous weapons or mass surveillance.
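The self-evaluation idea described above can be illustrated with a toy critique-and-revise loop. This is a minimal sketch under stated assumptions, not Anthropic's implementation: the `PRINCIPLES` table and the `critique`, `revise`, and `respond` helpers are hypothetical, and simple keyword matching stands in for what a real system would do with a language model. In the published Constitutional AI method, critique and revision are used to generate training data rather than being run as a filter on every request.

```python
from typing import Optional

# Hypothetical principles (keyword -> principle name). A real constitution is
# written in natural language and applied by a model, not by keyword matching.
PRINCIPLES = {
    "autonomous weapon": "weapons-use principle",
    "mass surveillance": "surveillance principle",
}


def critique(draft: str) -> Optional[str]:
    """Return the name of the violated principle, or None if the draft passes."""
    lowered = draft.lower()
    for keyword, principle in PRINCIPLES.items():
        if keyword in lowered:
            return principle
    return None


def revise(draft: str, violation: str) -> str:
    """Stand-in revision step: replace the draft with a refusal."""
    return f"I can't help with that ({violation})."


def respond(draft: str, max_rounds: int = 3) -> str:
    """Critique the draft and revise it until no principle is violated."""
    for _ in range(max_rounds):
        violation = critique(draft)
        if violation is None:
            return draft
        draft = revise(draft, violation)
    return draft
```

A harmless draft passes through unchanged, while a draft that mentions a restricted topic is replaced by a refusal that itself passes the critique step.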

Claude 3.5 Sonnet is reported, unofficially, to have over 400 billion parameters and to use optimized Transformers with MoE (Mixture of Experts); Anthropic has not published parameter counts or architectural details. Its safety layers are said to include output watermarking and dynamic guardrails that block use in fully autonomous weapons. In the jailbreak tests cited, attack success rates against Claude fell below 5%, while GPT-4o showed approximately 20% vulnerability.

From a security perspective, this dispute transcends contracts: the Pentagon needs Claude for sensitive operations, but Anthropic imposes restrictions on mass domestic surveillance and autonomous weapons. This cultural clash brands Anthropic as the "most ideological" lab. The Pentagon may pivot to less secure competitor models, increasing jailbreak and data leak risks.

Ultimately, this Pentagon vs Anthropic confrontation defines military AI's future: will ethics triumph over national security, or vice versa? The Tekin Army watches, and this is just the beginning.

News 3: Boston Dynamics Robot Army

Boston Dynamics, the robotics pioneer, announced it has upgraded its humanoid robot Atlas to an all-electric version. This transformation marks the end of the hydraulic era and the beginning of a new age of agile, efficient robotics.

The new Atlas, with 56 degrees of freedom, can perform complex movements previously impossible. The new electric system has not only reduced the robot's weight by 40% but increased energy efficiency by up to 60%. This robot can carry loads up to 25 kilograms and work in industrial environments.

The partnership with Hyundai has paved the way for commercialization. It's projected that by 2028, over 1,000 Atlas units will be deployed in automotive factories. These robots can perform dangerous and repetitive tasks, increasing worker safety.

News 4: Microsoft Agent Trust Signals

Microsoft has introduced a new system for building trust in AI agents. This system, built on Azure Observability Agent and Conditional Access, ensures the security and reliability of AI agents.

The new security architecture includes multiple authentication layers, real-time monitoring of agent behavior, and threat detection mechanisms. This system can identify and block suspicious behaviors in less than 100 milliseconds.
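The layered checks described above (authentication, behavior monitoring, threat detection, all within a latency budget) can be sketched as a single deny-on-first-failure evaluation. This is an illustrative sketch only and does not use any Microsoft or Azure API: `AgentAction`, `evaluate`, `ALLOWED_OPERATIONS`, and `MAX_RECORDS` are hypothetical names and values, and only the 100 ms budget comes from the article.

```python
import time
from dataclasses import dataclass

BUDGET_MS = 100.0  # detection budget quoted in the article


@dataclass(frozen=True)
class AgentAction:
    agent_id: str
    authenticated: bool
    operation: str
    records_touched: int


# Hypothetical policy values, chosen only for illustration.
ALLOWED_OPERATIONS = {"read", "summarize"}
MAX_RECORDS = 1_000


def evaluate(action: AgentAction) -> tuple[bool, str, float]:
    """Return (allowed, reason, elapsed_ms) for one agent action."""
    start = time.perf_counter()
    if not action.authenticated:
        allowed, reason = False, "unauthenticated agent"
    elif action.operation not in ALLOWED_OPERATIONS:
        allowed, reason = False, f"operation '{action.operation}' not allowed"
    elif action.records_touched > MAX_RECORDS:
        allowed, reason = False, "unusually large data access"
    else:
        allowed, reason = True, "ok"
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return allowed, reason, elapsed_ms
```

A caller could compare the returned `elapsed_ms` against `BUDGET_MS` and raise an alert when a decision takes longer than the budget allows.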

Economically, this system is critical for large organizations dependent on AI agents. Microsoft claims this solution can reduce security costs by up to 45% while increasing reliability. A large share of Fortune 500 companies are reportedly testing this system.

News 5: Amazon Autonomous Logistics

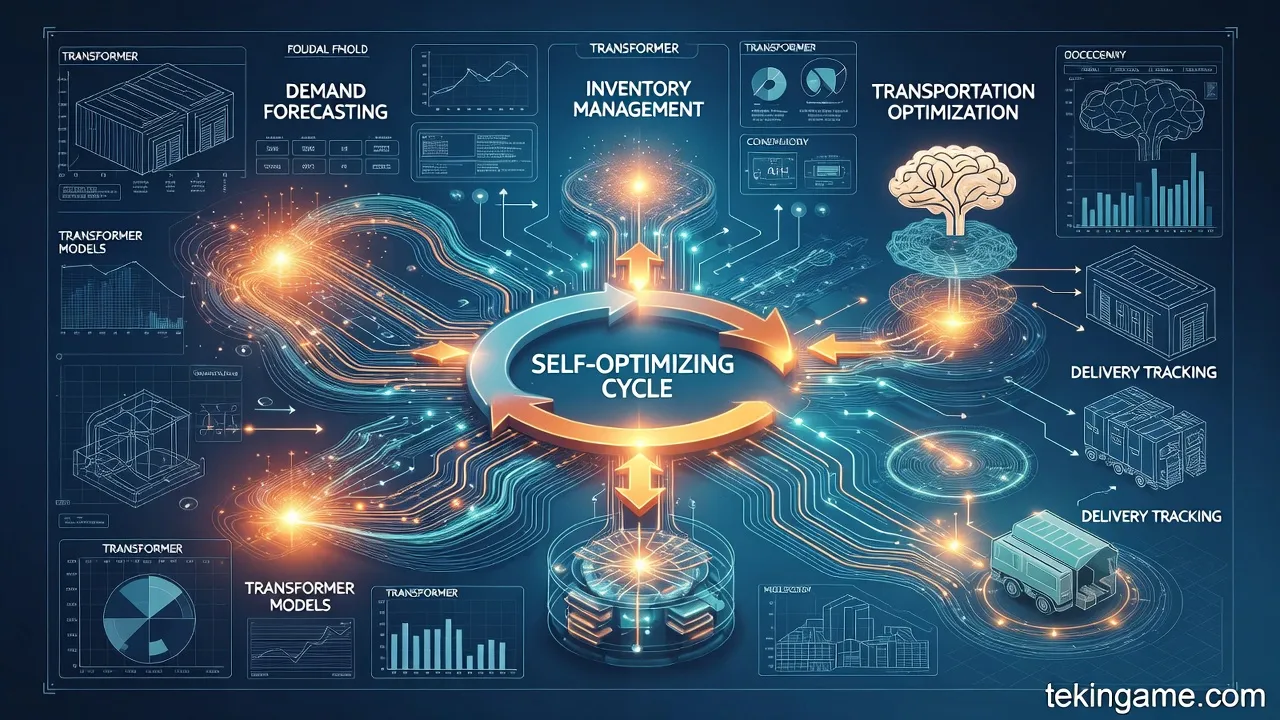

In tonight's Tekin Night report, we examine News 5: Amazon now operates 100 warehouses with end-to-end AI agents. This transformation converts the supply chain from a traditional linear system into an intelligent, self-optimizing cycle. We analyze through the tech-autopsy lens—combining engineering precision with analytical depth. We explore three key angles: economic impact, technical autopsy, and security and privacy implications.

Amazon, deploying over 1 million robots across its fulfillment network, has not only reduced costs by 25% during peak seasons but created a flywheel effect that paralyzes competitors. This cycle begins with demand forecasting: transformer-based models process billions of data points to predict demand accurately, leading to inventory optimization.

This system creates hyper-local warehouses: products in demand within a region are stored close to that region's customers, shortening transport distances and reducing costs. The result? Faster delivery, lower operational costs, and higher profitability. Projections suggest that by 2033, 75% of fulfillment operations will be automated, shifting human labor toward supervisory roles.

Now to the technical heart: 100 warehouses in which AI agents manage operations from forecasting to final delivery. These systems are built on collaborative robotics. Kiva robots move shelves, while Proteus (the first fully autonomous mobile robot) transports package carts to exit docks and safely interacts with humans in open spaces.

Robin, Cardinal, and Sparrow, the trio of AI robotic arms, sort, stack, and consolidate millions of items with machine precision. Sequoia, the massive storage system, coordinates thousands of mobile robots and robotic arms. Computer vision ensures precise sorting and reduces sorting time by up to 75%.

Behind the scenes, DeepFleet transforms the warehouse into a "machine": transformer models forecast demand, position inventory, schedule transportation, and guide last-mile delivery. Machine learning feedback loops, in which each forecast is corrected by the outcome actually observed, make the system self-correcting.
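To make the idea of a self-correcting feedback loop concrete, here is a minimal sketch that swaps the transformer models for simple exponential smoothing, a much simpler technique chosen only for illustration. The function names and the `alpha` parameter are assumptions and do not describe Amazon's system.

```python
def update_forecast(forecast: float, actual: float, alpha: float = 0.5) -> float:
    """Move the forecast toward observed demand by a fraction alpha of the error."""
    return forecast + alpha * (actual - forecast)


def run_feedback_loop(
    initial: float, observed: list[float], alpha: float = 0.5
) -> list[float]:
    """Return the forecast issued before each period.

    After every period the forecast is corrected using the demand that was
    actually observed, so errors shrink while demand stays stable.
    """
    forecasts = []
    forecast = initial
    for actual in observed:
        forecasts.append(forecast)
        forecast = update_forecast(forecast, actual, alpha)
    return forecasts


if __name__ == "__main__":
    # Demand turns out to be 120 units per period; the first guess is 100.
    print(run_feedback_loop(100.0, [120.0, 120.0, 120.0]))
    # → [100.0, 110.0, 115.0]
```

Each forecast closes half of the remaining gap to real demand, which is the self-correcting behavior in its smallest form.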

This logistical utopia has dark shadows. End-to-end AI agents process billions of data points. A flaw in agentic models could paralyze the chain. Additionally, AI productivity tracking tools place workers under constant surveillance, leading to automated terminations and worsening working conditions.

Wellspring maps customers' precise locations, generating sensitive geolocation data. With 1 million robots, massive volumes of operational data are collected, and without end-to-end encryption that data becomes a target for cyberattacks.

These 100 warehouses represent not just transformation but a civilizational turning point. Amazon has redefined the supply chain, but the Tekin Army warns: without balance, this machine will consume humanity.

News 6: The Trust Crisis

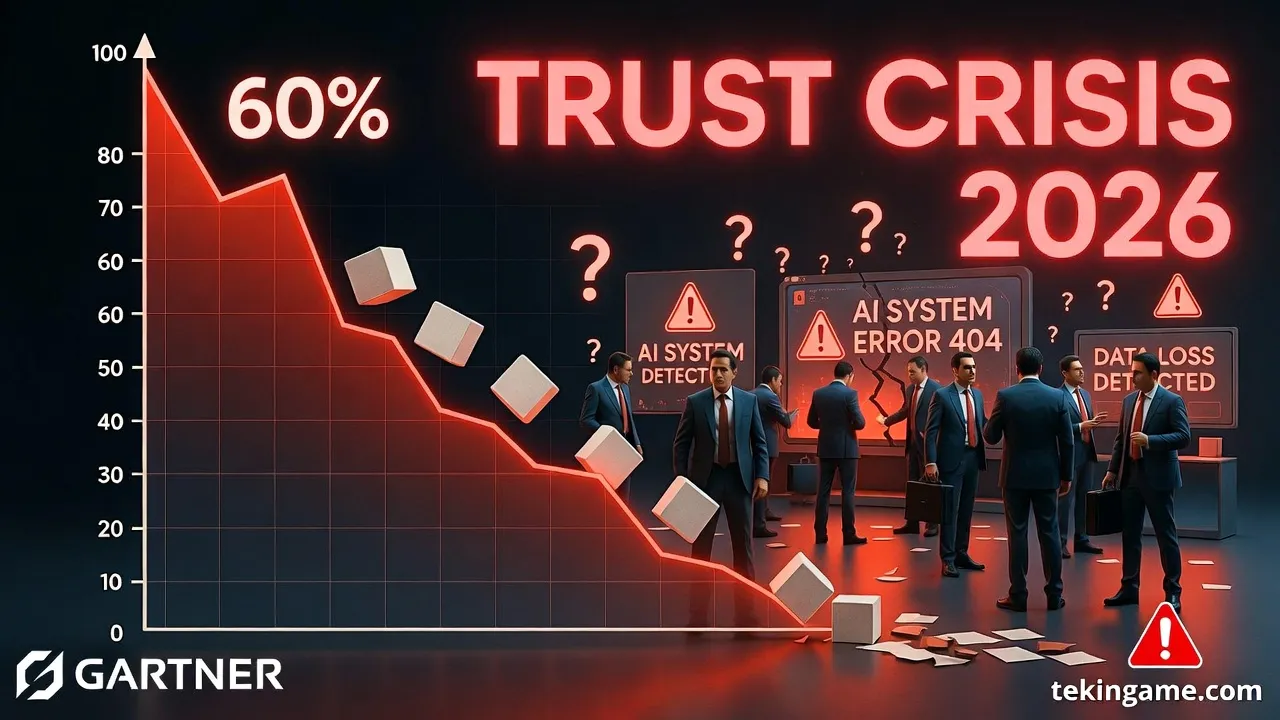

At the recent Gartner summit, a shocking statistic emerged: 60% of AI projects fail due to lack of human trust. This is more than a number; it is an alarm bell for the future of human-AI collaboration. In this deep technical autopsy, we examine the crisis from three angles: devastating economic impact, technical autopsy of failure mechanisms, and security and privacy implications.

The trust crisis has shaken the tech economy. An MIT study reported that 95% of pilot AI projects in large companies failed, a finding that was followed by a one trillion dollar drop in US tech stocks within four days. Gartner had predicted that 30% of AI projects would be abandoned by 2025, rising to over half by 2026.

Economically, the root lies in improper budget allocation. Companies tend to allocate budgets to exciting projects rather than foundational work like employee training and data governance. The RAND Corporation presents a horrifying statistic: 80% of AI projects fail, double the failure rate of typical IT projects.

Now to the technical autopsy: why do 60% of projects fail due to lack of human trust? Gartner identifies weak data governance as the primary cause. AI projects require clean, observable, and authorized data, but often encounter messy data.

Failure mechanisms include: messy data (lack of governance leads to data unreadiness for AI), poor prompting (untrained managers and staff create ineffective prompts), lack of organizational integration (projects don't leave the central lab), and Shadow AI (informal use without oversight).

Lack of trust opens the door to security threats. Gartner warns: without data governance, AI projects lead to Shadow AI phenomena, where informal tools are used without oversight and data leak risks increase. AI security requires zero-trust architecture: every data point must be authorized, every model audited.
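The zero-trust principle stated above (nothing is accessible unless explicitly authorized, and every decision is recorded for audit) can be sketched in a few lines. This is an illustrative sketch, not a production design: `DataGateway` and its methods are hypothetical names introduced here.

```python
from dataclasses import dataclass, field


@dataclass
class DataGateway:
    # (principal, dataset) pairs that have been explicitly authorized.
    grants: set[tuple[str, str]] = field(default_factory=set)
    # Every access decision, allowed or denied, kept for later audit.
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def authorize(self, principal: str, dataset: str) -> None:
        """Explicitly grant one principal access to one dataset."""
        self.grants.add((principal, dataset))

    def request(self, principal: str, dataset: str) -> bool:
        """Deny by default and record the decision."""
        allowed = (principal, dataset) in self.grants
        self.audit_log.append((principal, dataset, allowed))
        return allowed
```

Under this scheme an unsanctioned Shadow AI tool is refused by default, and the refused attempt still appears in the audit log, so informal use becomes visible rather than silent.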

In conclusion, the trust crisis is not AI's end but an awakening. The Tekin Army warns: without governance, training, and integration, 60% of projects will be buried. The future of human-AI collaboration depends on rebuilding this trust.